Methods Used to Calibrate Devices: A Practical Guide

This guide helps you understand the methods used to calibrate devices, including static and dynamic approaches, reference standards, and a practical calibration workflow you can apply to most instruments.

Understanding the landscape of device calibration

Calibration is a disciplined process that ensures measurement accuracy across instruments. When we discuss the methods used to calibrate devices, we refer to systematic approaches that align an instrument’s readings with known references. In professional labs and field settings, calibrations are guided by standards, traceability, and documented procedures. The goal is to minimize bias, compensate for drift, and provide confidence that the instrument’s output matches the real world within a defined tolerance.

According to Calibrate Point, effective calibration begins with a clear measurement objective, an identifiable reference, and a plan to monitor performance over time. The Calibrate Point team found that most successful calibration efforts integrate three core elements: a valid reference standard, a repeatable procedure, and a record of results that supports future audits.

In practice, the exact method depends on the device type, its operating environment, and the acceptable error band. For example, a temperature sensor used in manufacturing requires a temperature-stable environment and a traceable temperature standard, whereas a torque wrench for mechanical assembly demands a controlled torque source and a calibrated set of weights.

Across all devices, the chosen method should be transparent, repeatable, and auditable. By focusing on methods used to calibrate devices that suit your context, you can reduce downtime, minimize costly recalls, and extend the life of your instrument fleet. This article outlines the most common approaches and how to apply them in real work.

Static vs dynamic calibration: a quick distinction

Calibration methods fall broadly into two families: static calibration, which compares device output against fixed reference standards, and dynamic calibration, which evaluates performance under real operating conditions. Static calibration uses artifacts such as gauge blocks, calibrated weights, reference thermometers, or fixed electrical signals to anchor a measurement. Dynamic calibration, by contrast, probes the device with varying inputs or simulated operating scenarios to assess response, linearity, and drift over time. Both approaches have value, and many facilities blend them to cover accuracy across the device’s usable range. The choice hinges on the device type, the required uncertainty, and the environment. For example, a laboratory thermometer may rely heavily on static references, while a force sensor in a production line might require dynamic checks to capture real-time drift. In all cases, document the exact procedure, reference sources, and acceptance criteria so that the method remains auditable and repeatable.
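As a rough illustration of how a static check can quantify linearity, the sketch below fits a straight line through reference/reading pairs and reports the gain, the offset, and the largest deviation from the fitted line. The function names and the sample values are hypothetical and chosen only for illustration; a real procedure would take its points and limits from the device's documented method.

```python
def fit_line(references, readings):
    """Least-squares fit of reading = gain * reference + offset."""
    n = len(references)
    if n < 2 or n != len(readings):
        raise ValueError("need at least two matched reference/reading pairs")
    mean_x = sum(references) / n
    mean_y = sum(readings) / n
    sxx = sum((x - mean_x) ** 2 for x in references)
    if sxx == 0:
        raise ValueError("reference points must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(references, readings))
    gain = sxy / sxx
    offset = mean_y - gain * mean_x
    return gain, offset


def max_nonlinearity(references, readings):
    """Largest absolute deviation of any reading from the fitted line."""
    gain, offset = fit_line(references, readings)
    return max(abs(y - (gain * x + offset)) for x, y in zip(references, readings))


# Hypothetical static check: reference weights (g) vs. balance readings (g)
refs = [0.0, 50.0, 100.0, 150.0, 200.0]
reads = [0.1, 50.2, 100.3, 150.4, 200.5]

gain, offset = fit_line(refs, reads)
worst = max_nonlinearity(refs, reads)
print(f"gain={gain:.4f} offset={offset:.3f} max nonlinearity={worst:.4f}")
```

A gain away from 1 or a nonzero offset points to a correctable systematic error, while a large residual suggests nonlinearity that a simple two-parameter adjustment cannot remove.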

Reference standards and traceability basics

A core requirement of reliable calibration is traceability. This means every measurement can be linked, through an unbroken chain of calibrations, to internationally or nationally recognized standards. Traceability is established by using reference standards that have themselves been calibrated, directly or through intermediate standards, against primary standards, typically at accredited laboratories. In practice, this involves maintaining calibration certificates, recordkeeping, and clear acceptance criteria for each instrument. Calibrate Point analysis shows that organizations with strong traceability tend to experience fewer measurement disputes and more consistent quality over time. When selecting methods used to calibrate devices, ensure your standards carry a recognized certification, are within their calibration interval, and are calibrated in a manner compatible with the device under test. The goal is to create an auditable history that supports ongoing quality assurance and regulatory compliance.
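One way to picture the "unbroken chain" is as a linked list of standards, each pointing at the standard it was calibrated against. The sketch below walks such a chain and reports expired certificates or a chain that stops short of a primary standard. The `Standard` class, its fields, and the certificate identifiers are all hypothetical; an actual system would follow whatever the organization's quality procedures and certificates define.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Standard:
    name: str
    certificate_id: str
    calibrated_on: date
    interval_days: int
    calibrated_against: Optional["Standard"] = None  # next link up the chain
    is_primary: bool = False

    def due_date(self) -> date:
        return self.calibrated_on + timedelta(days=self.interval_days)


def traceability_problems(standard, today):
    """Walk the chain upward and collect human-readable problems."""
    problems = []
    seen = set()
    current = standard
    while current is not None:
        if id(current) in seen:
            problems.append(f"{current.name}: circular reference in chain")
            break
        seen.add(id(current))
        if today > current.due_date():
            problems.append(
                f"{current.name}: certificate {current.certificate_id} "
                f"expired on {current.due_date()}"
            )
        if current.calibrated_against is None and not current.is_primary:
            problems.append(f"{current.name}: chain ends before a primary standard")
        current = current.calibrated_against
    return problems


# Hypothetical three-level chain
national = Standard("National mass standard", "NMI-001", date(2023, 1, 10), 3650, is_primary=True)
lab = Standard("Lab reference weight set", "LAB-042", date(2024, 3, 1), 365, calibrated_against=national)
working = Standard("Working weight 100 g", "WRK-107", date(2024, 6, 1), 180, calibrated_against=lab)

ok_check = traceability_problems(working, date(2024, 9, 1))
late_check = traceability_problems(working, date(2025, 1, 15))
print(ok_check)
print(late_check)
```

Running the check before each calibration session is one simple way to confirm that every standard in use is still within its interval.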

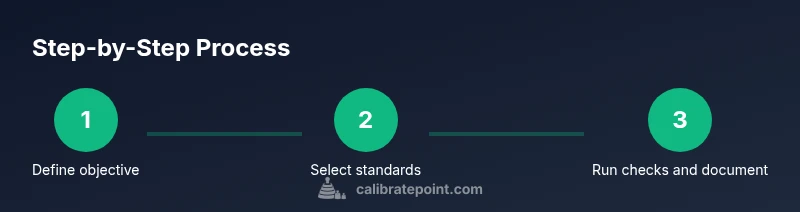

Step-by-step approach for common devices

A practical calibration workflow begins with a plan and ends with documented evidence. The following approach is applicable to many instrument families, including temperature sensors, mass gauges, pressure transducers, and electrical test gear. Start by identifying the measurement objective and the tolerance you must meet. Gather the reference standards, calibration tools, and required environmental controls. Prepare the device under test by removing contaminants, stabilizing temperature when necessary, and logging current instrument settings. Apply known references in a systematic sequence, record readings, and compare them to the references. If discrepancies exceed the acceptance criteria, adjust the device or note the offset for subsequent correction. Finally, perform a verification check using an independent standard and document the results, maintenance history, and next calibration date. Clear, repeatable steps reduce drift, improve reliability, and support regulatory audits.
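The compare-against-acceptance-criteria step in this workflow can be sketched as a small function. This version assumes the tolerance is stated as a percentage of the device's span, which is only one of several common conventions; the function name, the 0 to 10 bar range, and the readings are hypothetical.

```python
def evaluate_points(points, span, tolerance_pct_of_span):
    """Compare (reference, reading) pairs against a tolerance given as % of span."""
    limit = span * tolerance_pct_of_span / 100.0
    results = []
    for reference, reading in points:
        error = reading - reference
        results.append({
            "reference": reference,
            "reading": reading,
            "error": error,
            "limit": limit,
            "passed": abs(error) <= limit,
        })
    return results


# Hypothetical pressure transducer, 0 to 10 bar, tolerance 0.5 % of span
points = [(0.0, 0.01), (5.0, 5.03), (10.0, 10.08)]
results = evaluate_points(points, span=10.0, tolerance_pct_of_span=0.5)

for r in results:
    status = "PASS" if r["passed"] else "FAIL"
    print(f"ref={r['reference']:5.2f} read={r['reading']:5.2f} "
          f"err={r['error']:+.3f} limit=±{r['limit']:.3f} {status}")
```

Here the top-of-range point exceeds the limit, so the workflow would call for an adjustment or a documented offset, followed by the independent verification check.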

Choosing the right method for your device

The selection of a calibration method depends on multiple factors: the device’s criticality, the required uncertainty, the environment, and the availability of standards. For high-precision instrumentation, you may rely more on static references with traceable certificates. For sensors operating in fluctuating conditions, dynamic checks that simulate real use may be more informative. Cost, downtime, and the risk of measurement error should influence the decision. When evaluating methods, consider traceability, documentation quality, ease of re-verification, and compatibility with your factory SOPs. A blended approach often yields the best balance between accuracy and practicality. Remember that the most appropriate method is the one you can consistently execute, document, and audit over time.

Common pitfalls and quality controls

Calibration projects fail for predictable reasons: missing references, uncontrolled environmental conditions, inconsistent procedures, or poor documentation. To avoid these, establish a clear calibration plan, verify that all standards are in spec before use, and maintain a controlled environment when required. Implement a checklist for every calibration cycle, including pre-checks, measurement steps, and post-calibration verification. Regularly train staff on the procedures to prevent drift in how tests are performed. Finally, conduct periodic audits of your calibration records to catch gaps or deviations before they become problems.

Practical examples across equipment types

Consider a handful of common instrument families and how methods used to calibrate devices apply to them. Temperature devices benefit from traceable reference baths or dry-well calibrators; pressure sensors rely on deadweight testers and calibrated pressure references; torque wrenches are checked against calibrated torque testers or reference weights; pH meters use standard buffer solutions and temperature compensation; and digital multimeters are checked against fixed voltage and resistance references. Each example illustrates the same underlying principles: use traceable references, document procedures, and apply consistent acceptance criteria. By adapting the same framework to diverse instruments, you can build a robust calibration program that scales with your operation.
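To make the pH meter case concrete, here is a minimal sketch of a two-point buffer calibration. It assumes a simple linear electrode model referenced to pH 7 and compares the measured slope with the theoretical Nernst slope of about -59.16 mV/pH at 25 °C. The function names and the millivolt readings are hypothetical, and real meters apply temperature compensation and buffer tables that this sketch leaves out.

```python
IDEAL_SLOPE_MV_PER_PH_25C = -59.16  # theoretical Nernst slope at 25 °C


def two_point_ph_calibration(buffer_a, mv_a, buffer_b, mv_b):
    """Return (slope in mV/pH, electrode potential in mV at pH 7)."""
    if buffer_a == buffer_b:
        raise ValueError("the two buffers must have different pH values")
    slope = (mv_b - mv_a) / (buffer_b - buffer_a)
    offset_mv = mv_a - slope * (buffer_a - 7.0)
    return slope, offset_mv


def mv_to_ph(mv, slope, offset_mv):
    """Convert an electrode reading to pH using the calibrated line."""
    return 7.0 + (mv - offset_mv) / slope


# Hypothetical readings in pH 7.00 and pH 4.01 buffers at 25 °C
slope, offset_mv = two_point_ph_calibration(7.00, 2.0, 4.01, 176.0)
slope_pct = 100.0 * slope / IDEAL_SLOPE_MV_PER_PH_25C

print(f"slope={slope:.2f} mV/pH ({slope_pct:.1f}% of ideal), offset={offset_mv:.1f} mV")
print(f"176.0 mV reads as pH {mv_to_ph(176.0, slope, offset_mv):.2f}")
```

The same pattern of two known references, a fitted slope and offset, and a comparison against an expected value carries over to the other instrument families listed above.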

Implementation mindset: building a calibration program that lasts

Establish a calibration program that matches your organization’s risk profile and regulatory context. Start with a written policy that defines scope, responsibilities, and intervals. Create a simple, repeatable workflow that staff can perform with confidence, and pair it with a robust recordkeeping system. Invest in training, maintain an inventory of reference standards, and schedule regular reviews to adjust procedures as devices evolve or new standards emerge. A well-structured approach to methods used to calibrate devices minimizes downtime, reduces nonconformities, and supports long-term quality improvements.

Tools & Materials

- Calibration reference standards (gauge blocks, weights, thermometers, or electrical references): must be traceable to a recognized standard and have current certificates

- Target device under test: the instrument to be calibrated and its operating range

- Environmental controls for the test area: temperature and humidity stabilization if required by the device

- Measurement accessories (calibrated cables, probes, adapters): ensure compatibility with the device and standards

- Calibration certificates or SOP documents: documentation to support traceability and audits

- Calibrated weights and torque standards: use when applicable to the device family

- Calibration software or data logging tools: optional but helpful for trend analysis and recordkeeping

- Personal protective equipment and safety gear: wear as required by the test environment

Steps

Estimated time: 2-6 hours

1. Define measurement objectives

Clarify what readings must be accurate, the tolerance bands, and the required traceability. Document the device range and any environmental constraints that affect accuracy.

Tip: Write the objective in measurable terms (e.g., tolerance ±0.5%).

2. Gather standards and references

Collect all reference standards that will anchor the calibration, ensuring certificates are current and traceable to primary standards where possible.

Tip: Verify certificates before use and log expiry dates.

3. Prepare the device and environment

Allow the device and references to acclimate to the test environment, and calibrate any tools in the same conditions.

Tip: Stabilize temperature and avoid recent recalibration of nearby equipment that could introduce contact drift.

4. Apply known references and collect data

Sequentially apply the reference standards and record the device readings, noting any offset or nonlinear behavior.

Tip: Use a calibration log template to ensure consistency across sessions.

5. Analyze results and adjust if needed

Compare readings to references, decide if the device requires adjustment, and follow the manufacturer guidelines to perform any offset or gain changes.

Tip: Document all adjustments with timestamp and responsible person.

6. Verify with independent reference

Run a separate verification using a different reference to confirm the adjustment outcome and ensure no new drift was introduced.

Tip: If verification fails, reassess the procedure and repeat the checks.

7. Document results and schedule next calibration

Record all readings, conclusions, and the next due date in the calibration management system or log.

Tip: Establish a repeatable cadence aligned with usage and risk.
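The documentation side of these steps (timestamped adjustments, recorded readings, and a next due date) can be captured in a simple structured record. The sketch below is one possible layout, not a prescribed format: the field names, the device ID, the 365-day interval, and the "as found" and "as left" labels (a common convention for readings before and after adjustment) are assumptions made for illustration.

```python
import json
from datetime import date, datetime, timedelta, timezone


def new_record(device_id, performed_on, interval_days):
    """Start a record; next_due is performed_on plus the chosen interval."""
    return {
        "device_id": device_id,
        "performed_on": performed_on.isoformat(),
        "next_due": (performed_on + timedelta(days=interval_days)).isoformat(),
        "readings": [],
        "adjustments": [],
        "verification_passed": None,
    }


def log_reading(record, reference, reading, stage):
    """stage is 'as_found' (before adjustment) or 'as_left' (after)."""
    if stage not in ("as_found", "as_left"):
        raise ValueError("stage must be 'as_found' or 'as_left'")
    record["readings"].append(
        {"stage": stage, "reference": reference, "reading": reading}
    )


def log_adjustment(record, person, description, when=None):
    """Store who changed what, with a UTC timestamp."""
    when = when or datetime.now(timezone.utc)
    record["adjustments"].append(
        {"timestamp": when.isoformat(), "person": person, "description": description}
    )


# Hypothetical session for a pressure transducer
record = new_record("PT-0042", date(2024, 5, 6), interval_days=365)
log_reading(record, 10.0, 10.08, "as_found")
log_adjustment(
    record, "J. Doe", "Span trimmed per manufacturer procedure",
    when=datetime(2024, 5, 6, 14, 30, tzinfo=timezone.utc),
)
log_reading(record, 10.0, 10.01, "as_left")
record["verification_passed"] = True

print(json.dumps(record, indent=2))
```

Keeping both the before and after readings in the same record makes it easier to track drift between calibrations and to justify lengthening or shortening the interval.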

Questions & Answers

What are common calibration methods used across instruments?

Common methods include static calibration with fixed references and dynamic calibration that tests performance under real conditions, both supported by documentation that demonstrates traceability. The best approach often blends methods to cover the instrument's operating range.

Common methods include static references for fixed checks, dynamic checks for real use, and keeping good records to show traceability.

How do I choose a calibration method for a device?

Consider the required accuracy, the device environment, cost, and the availability of traceable standards. Align with your SOPs and regulatory requirements to select the most effective method.

Choose based on accuracy needs, environment, and available standards, following your standard operating procedures.

How often should calibration be performed?

Frequency depends on device usage, criticality, and manufacturer guidance. High-risk instruments may require more frequent checks, while low-risk tools can follow longer intervals.

Frequency depends on risk, usage, and guidance from the manufacturer.

Can I calibrate without standards?

Standards provide traceability and confidence. You can establish baselines, but without recognized references, the calibration carries less auditable weight.

You can baseline with internal references, but ensure some external standard is used for traceability.

Why is calibration documentation important?

Documentation creates auditable history, supports regulatory compliance, and helps schedule future calibrations based on observed drift and usage patterns.

Documentation is essential for audits and scheduling future calibrations.

Key Takeaways

- Start with clear objectives and traceable references

- Choose static, dynamic, or blended methods based on device and environment

- Document every step for audits and continuous improvement

- Verify results with independent references to confirm accuracy

- Schedule regular recalibrations to prevent drift