Calibrate Questions: A Step-by-Step Guide for Reliable Question Design

A practical, step-by-step guide to calibrating questions for reliable data collection. Learn to design, pilot, and validate questions that reduce bias and improve cross-study comparability.

What calibrated questions are and why they matter

Calibrated questions use standardized wording, clearly defined anchors, and consistent response scales to minimize differences in how respondents interpret them. When done well, they reduce noise caused by ambiguous wording and shifting context, enabling stronger comparisons across sessions, populations, or experiments. According to Calibrate Point, calibrated questions help reduce bias and improve comparability in data collection across teams. This foundation is essential for professionals who need reliable measurements, whether they are validating a device, surveying customer satisfaction, or testing a hypothesis. In practice, calibration means thoughtful design, explicit criteria for success, and a documented process that teams can repeat. As you read, keep in mind that calibration is not about clever wording alone; it is about creating a stable framework that yields consistent, actionable results.

Core principles of calibration

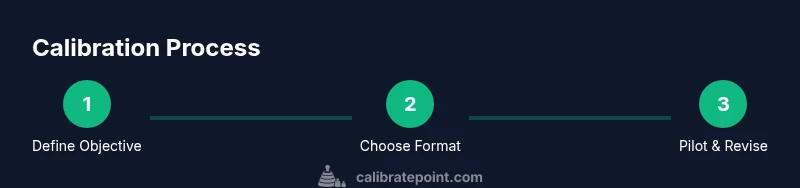

High-quality calibration rests on five core principles. First, define a precise objective for each question: what exactly does a respondent’s answer measure, and how will you interpret it? Second, use the same response format for every item that measures the same kind of construct, with anchors that are unambiguous and mutually exclusive. Third, standardize the language to avoid double negatives, jargon, or culturally specific terms that may confuse respondents. Fourth, pilot the set with a small, diverse group to surface misinterpretations and timing issues. Fifth, document every decision: rationale, revisions, and evidence of improvement. Calibrate Point’s guidelines emphasize documentation as a core practice for long-term reliability.

Defining objectives and success criteria

Before drafting questions, articulate the measurement objective in concrete, testable terms. Ask yourself what threshold of agreement or disagreement constitutes a meaningful signal for your study. Establish success criteria for the calibration—for example, target clarity scores, acceptable bias levels, and minimum agreement across raters. These criteria will guide revisions and quantify progress during pilots. By setting explicit objectives, you prevent scope creep and ensure that every question serves a clear purpose. This aligns with best practices in measurement science, which value explicit criteria over implicit assumptions and vague aims.
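To make success criteria testable, it can help to write them down as explicit thresholds and check each item against them after every pilot round. The sketch below is a minimal illustration, assuming clarity is rated on a 1 to 5 scale, bias is tracked as a count of reviewer flags, and rater agreement is measured with Cohen's kappa (one common chance-corrected agreement statistic; this guide does not prescribe a specific measure). The names `SuccessCriteria`, `cohens_kappa`, and `meets_criteria`, and all threshold values, are hypothetical placeholders rather than values from this guide.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class SuccessCriteria:
    # Hypothetical thresholds; set these from your own study objectives.
    min_clarity: float = 4.0   # mean clarity rating on a 1-5 scale
    max_bias_flags: int = 1    # reviewers who flagged the item as leading
    min_kappa: float = 0.6     # minimum inter-rater agreement (Cohen's kappa)


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who coded the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must code the same, non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items where both raters chose the same code.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal share, summed over codes.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # kappa is undefined here; both raters used one identical code
    return (observed - expected) / (1 - expected)


def meets_criteria(mean_clarity, bias_flags, kappa, criteria):
    """Return the names of failed criteria (an empty list means the item passes)."""
    failures = []
    if mean_clarity < criteria.min_clarity:
        failures.append("clarity")
    if bias_flags > criteria.max_bias_flags:
        failures.append("bias")
    if kappa < criteria.min_kappa:
        failures.append("agreement")
    return failures


# Illustrative codes from two raters judging whether four items read clearly.
rater_a = ["clear", "clear", "unclear", "clear"]
rater_b = ["clear", "unclear", "unclear", "clear"]
kappa = cohens_kappa(rater_a, rater_b)
print(round(kappa, 2))
print(meets_criteria(4.2, 0, kappa, SuccessCriteria()))
```

In this toy case the raters agree on three of four items (75% raw agreement), but their category frequencies imply 50% agreement by chance, so kappa is 0.5 and the item fails the illustrative 0.6 threshold. That gap between raw and chance-corrected agreement is the main reason to prefer a statistic like kappa over simple percent agreement.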

Crafting robust items: wording, anchors, and formats

Wording should be simple, neutral, and free of leading cues. Anchors must be calibrated so they map consistently to the same underlying construct across items. For example, a five-point scale from 1 (strongly disagree) to 5 (strongly agree) should be used for all attitudinal questions, with clearly defined endpoints. Consider the order of options to minimize priming effects, and avoid double-barreled questions that conflate two ideas in one item. If you must combine concepts, split them into separate items. Include examples of both extreme and moderate responses in your calibration set to reveal interpretive differences among respondents. The goal is a stable, defensible measurement instrument that behaves predictably under replication.
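The sketch below shows one way to enforce a single shared scale and catch two of the wording problems named above before piloting. It is a minimal illustration, assuming items are stored as simple records; `AGREEMENT_SCALE`, `Item`, `review_item`, and the negation word list are hypothetical. The checks are crude keyword heuristics: they will miss some problems and flag some harmless items (for example, "and" inside a single concept), so treat the output as prompts for human review, not verdicts.

```python
from dataclasses import dataclass

# One shared five-point agreement scale, reused by every attitudinal item.
AGREEMENT_SCALE = (
    (1, "Strongly disagree"),
    (2, "Disagree"),
    (3, "Neither agree nor disagree"),
    (4, "Agree"),
    (5, "Strongly agree"),
)

# Small, illustrative negation list; extend it for your own item pool.
NEGATIONS = {"not", "no", "never", "don't", "isn't", "doesn't", "won't", "can't"}


@dataclass(frozen=True)
class Item:
    item_id: str
    text: str
    scale: tuple = AGREEMENT_SCALE


def review_item(item):
    """Flag common wording problems using simple keyword heuristics."""
    # Lowercase, split on whitespace, and trim punctuation at word edges.
    words = [w.strip(".,;:?!\"'") for w in item.text.lower().split()]
    warnings = []
    if "and" in words or "or" in words:
        warnings.append("possibly double-barreled")
    if sum(w in NEGATIONS for w in words) >= 2:
        warnings.append("possible double negative")
    if item.scale != AGREEMENT_SCALE:
        warnings.append("non-standard response scale")
    return warnings


for item in (
    Item("Q1", "The app is fast and reliable."),
    Item("Q2", "The app is fast."),
):
    print(item.item_id, review_item(item))
```

Here Q1 is flagged as possibly double-barreled; following the advice above, it would be split into one item about speed and one about reliability.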

Pilot testing, analysis, and revision

Run a small pilot with 6–12 participants who resemble your target population. Collect both quantitative responses and qualitative feedback about clarity and timing. Analyze response patterns for inconsistent choices, unexpected skips, or unusually rapid completions that may signal rushed answering under cognitive overload. Use a simple rubric to score each item on clarity, bias, and anchor alignment. Document any revisions and rerun the pilot if needed. Calibration is iterative, so expect multiple rounds of refinement before finalizing the question set. See Diagram A in the Appendix for an example scoring rubric.
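A rubric like the one described above can be tallied with a few lines of code. The sketch below is a minimal illustration under stated assumptions: each reviewer scores every item from 1 to 5 on clarity, bias, and anchor alignment, with higher always better (so a 5 on bias means no detectable bias), and an item is flagged when any criterion averages below a hypothetical threshold of 4.0. It also flags participants who finished in under half the median completion time, one simple screen for rushed responding. The function names, the threshold, the 0.5 fraction, and the sample ratings are illustrative choices and are not taken from Diagram A.

```python
from statistics import mean, median

# Rubric criteria; every score runs 1 (poor) to 5 (good), so higher is always better.
CRITERIA = ("clarity", "bias", "anchor_alignment")


def score_items(ratings, threshold=4.0):
    """Average each criterion per item and list the criteria below threshold.

    ratings maps item_id -> list of {criterion: score} dicts, one per reviewer.
    """
    report = {}
    for item_id, reviews in ratings.items():
        means = {c: mean(r[c] for r in reviews) for c in CRITERIA}
        weak = [c for c in CRITERIA if means[c] < threshold]
        report[item_id] = (means, weak)
    return report


def flag_fast_completions(durations, fraction=0.5):
    """Return participant IDs whose completion time is under fraction * median."""
    cutoff = fraction * median(durations.values())
    return sorted(pid for pid, secs in durations.items() if secs < cutoff)


# Made-up pilot ratings from two reviewers, for illustration only.
ratings = {
    "Q1": [
        {"clarity": 5, "bias": 4, "anchor_alignment": 4},
        {"clarity": 4, "bias": 4, "anchor_alignment": 3},
    ],
    "Q2": [
        {"clarity": 5, "bias": 5, "anchor_alignment": 5},
        {"clarity": 5, "bias": 4, "anchor_alignment": 5},
    ],
}
for item_id, (means, weak) in score_items(ratings).items():
    print(item_id, means, "revise:" if weak else "ok", weak)

# Made-up completion times in seconds.
print(flag_fast_completions({"p1": 600, "p2": 540, "p3": 200, "p4": 660, "p5": 580}))
```

With these inputs, Q1 is flagged for anchor alignment (mean 3.5) and participant p3 is flagged for finishing well under the median time. Flags like these are starting points for the qualitative follow-up, not automatic grounds for dropping an item or a respondent.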

Real-world examples: before and after calibration

Consider a customer-satisfaction survey for a software product. A non-calibrated question like 'How satisfied are you with the software?' invites widely varying interpretations, because each respondent fills in the missing timeframe and aspect of the product from their own prior experience. A calibrated version specifies the context and provides anchors: 'On a scale from 1 to 5, where 1 means very unsatisfied and 5 means very satisfied, how satisfied are you with the software's performance during the last 7 days?' This small modification fixes the timeframe, narrows the question to performance, reduces interpretation variance, and aligns scores with a defined construct. Similarly, an equipment-usability scale might use frequency anchors such as 'never', 'rarely', 'sometimes', 'often', and 'always' so that technicians interpret each response option consistently.
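One way to keep calibrated wording consistent across a question set is to generate item text from a template instead of writing each question by hand. The sketch below is a minimal illustration, assuming a hypothetical `render_rating_item` helper and a hypothetical `FREQUENCY_CODES` mapping; it rebuilds the calibrated satisfaction question from this section and codes the frequency anchors in a fixed order. The template and names are illustrative, not a prescribed format.

```python
def render_rating_item(stem, low_label, high_label, timeframe, points=5):
    """Compose item text that states the range, both endpoint anchors, and the timeframe."""
    return (
        f"On a scale from 1 to {points}, where 1 means {low_label} and "
        f"{points} means {high_label}, {stem} {timeframe}?"
    )


# Frequency anchors from the usability example, coded 1-5 in a fixed order.
FREQUENCY_CODES = dict(enumerate(("never", "rarely", "sometimes", "often", "always"), start=1))

before = "How satisfied are you with the software?"
after = render_rating_item(
    stem="how satisfied are you with the software's performance",
    low_label="very unsatisfied",
    high_label="very satisfied",
    timeframe="during the last 7 days",
)
print(before)
print(after)
print(FREQUENCY_CODES)
```

Because every rating item passes through the same template, the scale range, endpoint wording, and timeframe cannot drift between items, and a change to the template is a single, reviewable edit.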

Documentation and governance: making calibration repeatable

Maintain a calibration log that records design choices, pilot results, and revision history. Include the criteria you used to judge each item and the rationale for each change. Establish a governance process that assigns responsibility for ongoing calibration, often to a dedicated measurement lead or a calibration committee. Schedule regular reviews to incorporate new evidence, user feedback, and changes in the measurement context. Good calibration is not a one-off exercise; it is an ongoing discipline that strengthens data quality over time. As Calibrate Point notes, repeatable calibration practices are the backbone of reliable instrumentation and surveys.
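A calibration log can be as simple as an append-only file with one record per revision. The sketch below is a minimal illustration, assuming a JSON Lines file and a hypothetical `LogEntry` record whose fields mirror what this section recommends recording (the change, its rationale, the criteria used, and who reviewed it). The field names, the helper functions `append_entry` and `item_history`, and the file layout are assumptions, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date


@dataclass
class LogEntry:
    item_id: str
    version: int
    change: str                 # what was revised
    rationale: str              # why, including the evidence that prompted it
    criteria_met: list = field(default_factory=list)  # criteria the revision now satisfies
    reviewed_by: str = ""       # measurement lead or committee member
    logged_on: str = field(default_factory=lambda: date.today().isoformat())


def append_entry(path, entry):
    """Append one entry as a JSON line so earlier history is never overwritten."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(entry)) + "\n")


def item_history(path, item_id):
    """Return every logged entry for one item, in the order it was written."""
    with open(path, encoding="utf-8") as fh:
        entries = [json.loads(line) for line in fh if line.strip()]
    return [e for e in entries if e["item_id"] == item_id]
```

For example, calling `append_entry("calibration_log.jsonl", LogEntry("Q1", 2, "Added a 7-day timeframe", "Pilot raters read the period differently", ["clarity"]))` after a pilot round records the revision, and `item_history` later reconstructs how Q1 evolved. Appending rather than rewriting preserves the full revision history, which is what lets a later reviewer see why an item changed.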