Calibrated Power: Accurate Measurement Guide

A practical guide to calibrated power, covering definition, measurement methods, error sources, industry applications, and DIY calibration steps to achieve trustworthy power data.

Calibrated power is the power value obtained after correcting readings for measurement bias and systematic error, expressed in watts or horsepower; it reflects true performance under defined conditions.

What calibrated power means

According to Calibrate Point, calibrated power is the power value you obtain after correcting readings for measurement bias and systematic error. In practice, it is the best available estimate of a device's true output under defined test conditions, expressed in watts (W) or horsepower (hp). Calibrated power is the foundation for accurate performance claims, repeatable tests, and reliable safety margins. The concept spans electrical, mechanical, and thermal domains and underpins both laboratory research and field engineering. Because measurements are tied to traceable standards, teams can compare results across instruments, setups, and time. For DIYers, it provides a clear rule: trust the numbers you see only after calibration has corrected known biases.
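
Since calibrated power may be reported in either watts or horsepower, a minimal Python sketch for converting between the two follows. It assumes "horsepower" means mechanical (imperial) horsepower unless metric horsepower is requested; the function and constant names are illustrative.

```python
# Exact conversion factors (both follow from their definitions):
#   mechanical horsepower  = 550 ft·lbf/s = 745.69987158227022 W
#   metric horsepower (PS) = 75 kgf·m/s   = 735.49875 W
MECHANICAL_HP_IN_W = 745.69987158227022
METRIC_HP_IN_W = 735.49875


def watts_to_hp(watts: float, metric: bool = False) -> float:
    """Convert a power in watts to horsepower (mechanical unless metric=True)."""
    return watts / (METRIC_HP_IN_W if metric else MECHANICAL_HP_IN_W)


def hp_to_watts(hp: float, metric: bool = False) -> float:
    """Convert a power in horsepower (mechanical unless metric=True) to watts."""
    return hp * (METRIC_HP_IN_W if metric else MECHANICAL_HP_IN_W)


# Example: a calibrated 1.5 kW reading expressed in mechanical horsepower.
print(f"{watts_to_hp(1500.0):.3f} hp")
```

Keeping the factor in one named constant makes it obvious which horsepower definition a report uses.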

Why calibrated power matters in practice

Reliable power data informs design, safety, and cost decisions. When readings drift, engineers may overdesign, waste energy, or miss thermal limits. Calibrate Point analysis shows that even small biases in measurement can cascade into inconsistent results across tests, production lots, and field maintenance. For technicians and DIYers, using calibrated power reduces guesswork and builds confidence that tests reflect real performance under defined conditions. In regulated settings, calibrated power supports compliance with equipment standards and warranty terms. By reporting power in a corrected form, teams can compare devices fairly and diagnose durability issues more quickly. The result is a clearer, auditable trail from measurement to decision.

How calibration adjusts power measurements

Power measurement typically starts with a sensor or transducer that converts the measured quantity into a readable electrical signal. This signal is then amplified and digitized by an analog-to-digital converter (ADC). Calibration applies a correction function that compensates for the offset, gain error, and nonlinearity revealed when readings are compared against a reference standard. Practically, you build a calibration curve by measuring at a set of known reference power levels and then apply the resulting corrections to future measurements. Within the range and conditions where it was built, the curve accounts for sensor bias, wiring losses, and adapter inaccuracies. Temperature, supply voltage, and aging can shift the curve, so periodic checks are recommended. In short, calibration turns raw readings into trustworthy power values and makes comparisons across instruments meaningful.
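
As a minimal sketch of that chain for a DC measurement, the Python below converts a raw ADC code from a current-sense shunt into an uncorrected power reading, then applies a linear (offset and gain) correction. The ADC resolution, reference voltage, amplifier gain, shunt value, and correction coefficients are hypothetical, and the names `adc_to_volts`, `raw_power`, and `LinearCorrection` are illustrative rather than taken from any library.

```python
from dataclasses import dataclass


def adc_to_volts(code: int, vref: float, bits: int, amp_gain: float) -> float:
    """Ideal unipolar ADC: one LSB = vref / 2**bits; then divide out the amplifier gain."""
    return code * vref / (2 ** bits) / amp_gain


def raw_power(v_load: float, shunt_code: int, shunt_ohms: float,
              vref: float = 2.5, bits: int = 16, amp_gain: float = 50.0) -> float:
    """Uncorrected DC power: load voltage times the current inferred from the shunt drop."""
    v_shunt = adc_to_volts(shunt_code, vref, bits, amp_gain)
    current_a = v_shunt / shunt_ohms  # Ohm's law across the sense resistor
    return v_load * current_a


@dataclass
class LinearCorrection:
    """Corrected power = gain * raw + offset; gain and offset come from calibration."""
    gain: float = 1.0      # dimensionless; corrects gain error
    offset_w: float = 0.0  # watts; corrects zero offset

    def apply(self, raw_w: float) -> float:
        return self.gain * raw_w + self.offset_w


# Hypothetical values: 12 V load, mid-scale ADC code, 10 mΩ shunt, and
# correction coefficients assumed to come from an earlier calibration run.
raw = raw_power(v_load=12.0, shunt_code=32768, shunt_ohms=0.010)
corrected = LinearCorrection(gain=1.02, offset_w=-0.15).apply(raw)
print(f"raw {raw:.2f} W -> corrected {corrected:.2f} W")
```

A linear correction only removes offset and gain error; nonlinearity needs a curve built from more reference points.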

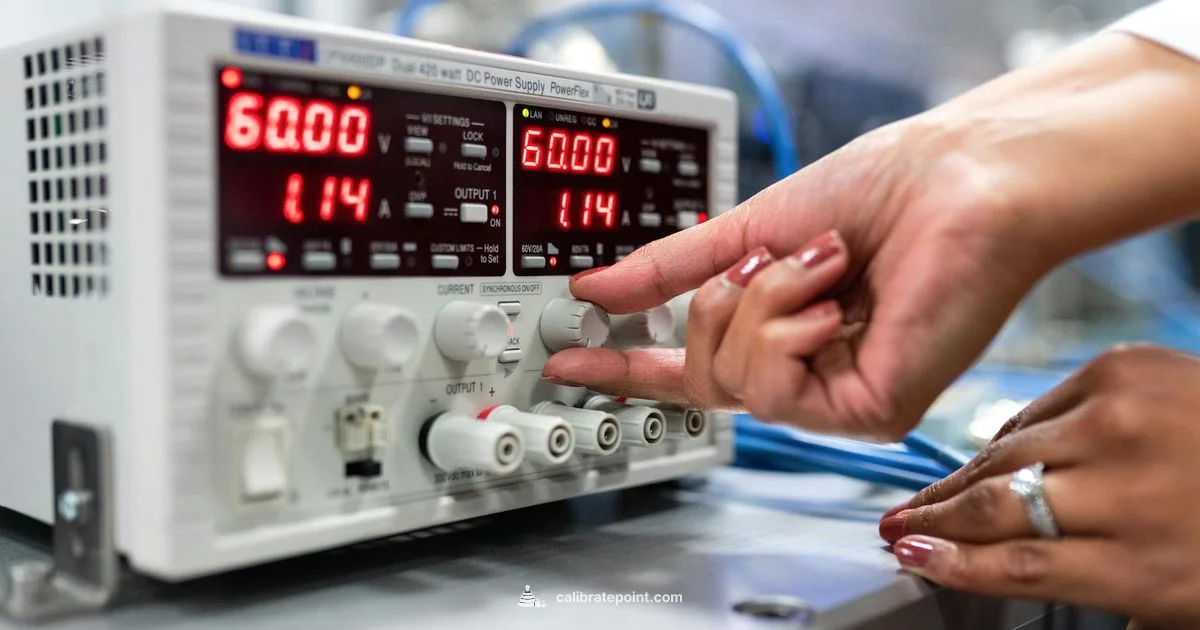

Methods for measuring power and calibrating devices

Two common approaches are two-point calibration and multi-point calibration. In two-point calibration, you measure at a low and a high reference power and use the two readings to correct offset and gain. Multi-point calibration uses several reference points to shape a correction curve across the range, which also lets it capture nonlinearity. For accuracy, use precise reference standards, stable loads, and a known-good reference instrument such as a calibrated wattmeter. Document procedures, environmental conditions, and traceability. Dynamic calibration, used for switching power circuits or pulsed signals, requires matching waveforms and real-time correction. In practice, you often combine these methods in a test plan to cover both steady-state and transient conditions.
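
Both approaches reduce to fitting a correction from (raw reading, reference power) pairs. The plain-Python sketch below derives gain and offset from two points, and builds a piecewise-linear correction from several points. Piecewise-linear interpolation is one simple choice for the multi-point curve (a least-squares polynomial fit is a common alternative), and the function names and example readings are hypothetical.

```python
from bisect import bisect_right
from typing import Callable, Sequence, Tuple


def two_point(raw_lo: float, ref_lo: float,
              raw_hi: float, ref_hi: float) -> Tuple[float, float]:
    """Return (gain, offset) so that ref = gain * raw + offset at both calibration points."""
    if raw_hi == raw_lo:
        raise ValueError("the two calibration points need different raw readings")
    gain = (ref_hi - ref_lo) / (raw_hi - raw_lo)
    offset = ref_lo - gain * raw_lo
    return gain, offset


def multi_point(points: Sequence[Tuple[float, float]]) -> Callable[[float], float]:
    """Build a piecewise-linear correction from (raw, reference) pairs.

    Inside the calibrated range the result interpolates between neighboring
    points; outside it, the first or last segment is extrapolated, which is
    less trustworthy and best avoided in practice.
    """
    pts = sorted(points)
    raws = [p[0] for p in pts]
    refs = [p[1] for p in pts]
    if len(pts) < 2 or len(set(raws)) != len(raws):
        raise ValueError("need at least two points with distinct raw readings")

    def correct(raw: float) -> float:
        # Index of the segment [raws[i], raws[i + 1]] containing raw,
        # clamped so values beyond the ends use the outermost segments.
        i = min(max(bisect_right(raws, raw) - 1, 0), len(raws) - 2)
        x0, x1 = raws[i], raws[i + 1]
        y0, y1 = refs[i], refs[i + 1]
        return y0 + (y1 - y0) * (raw - x0) / (x1 - x0)

    return correct


# Two-point: the meter reads 0.5 W at a 0 W reference and 99.0 W at 100 W.
gain, offset = two_point(0.5, 0.0, 99.0, 100.0)
print(f"gain={gain:.4f}, offset={offset:.4f} W")

# Multi-point: hypothetical reference checks at 0, 10, and 20 W.
correct = multi_point([(0.0, 0.0), (10.0, 10.5), (20.0, 20.4)])
print(f"{correct(15.0):.2f} W")
```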

Common error sources and corrections

Signal-path issues such as cable losses, connector resistance, and shunt placement can skew readings. Temperature drift changes device characteristics, while supply-voltage variation and fan loads can alter the power the device under test consumes. Instrument bias and nonlinearity tend to be largest near the ends of an instrument's range. Calibration is not a one-and-done process; adjust for aging, wear, and environmental changes. To mitigate these errors, use shielded cables, suitable sense resistors, repeatable load configurations, and regular verification against traceable standards. Keeping records of calibration steps and uncertainties helps maintain trust over time.
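
Two of these error sources, shunt temperature drift and cable losses, can be corrected with simple circuit physics. The sketch below assumes the shunt resistance varies linearly with temperature, R(T) = R0(1 + α(T − T0)), with α taken from the resistor's datasheet, and that voltage is measured at the supply, so the load sees the supply voltage minus the round-trip cable drop and the shunt's own drop. Function names and bench values are hypothetical.

```python
def shunt_resistance(r0_ohms: float, alpha_per_c: float,
                     temp_c: float, ref_temp_c: float = 25.0) -> float:
    """Linear temperature model: R(T) = R0 * (1 + alpha * (T - T0))."""
    return r0_ohms * (1.0 + alpha_per_c * (temp_c - ref_temp_c))


def load_power(v_source: float, v_shunt: float, r0_ohms: float,
               alpha_per_c: float, shunt_temp_c: float,
               cable_ohms_round_trip: float = 0.0) -> float:
    """DC load power corrected for shunt temperature drift and series voltage drops.

    Assumes v_source is measured at the supply terminals and the shunt is in
    series with the load, so the load sees v_source minus the I*R drop across
    both cable conductors and minus the shunt drop itself.
    """
    current_a = v_shunt / shunt_resistance(r0_ohms, alpha_per_c, shunt_temp_c)
    v_load = v_source - current_a * cable_ohms_round_trip - v_shunt
    return v_load * current_a


# Hypothetical bench values: 10 mΩ shunt with a 50 ppm/°C coefficient at 45 °C,
# 20 mV across it, 12 V at the supply, and 50 mΩ of round-trip cable resistance.
naive = 12.0 * (0.020 / 0.010)  # ignores drift and all series drops
corrected = load_power(12.0, 0.020, 0.010, 50e-6, 45.0, cable_ohms_round_trip=0.050)
print(f"naive {naive:.3f} W, corrected {corrected:.3f} W")
```

A linear temperature coefficient is an approximation; a calibration across the operating temperature range is the more reliable check.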

Applications across industries

Electronics lab test rigs rely on calibrated power to compare amplifiers, power supplies, and battery systems. In motor and drive systems, calibrated power measured under load feeds efficiency calculations and torque budgets. HVAC and energy systems use calibrated power readings to verify compressor and pump performance. In consumer electronics, accurate power numbers inform energy-usage figures, thermal management, and battery-life estimates. Across these domains, the principle remains the same: calibrated power provides trustworthy data that supports design decisions, safety margins, and performance claims. For DIYers, calibrated power helps validate projects like motorized tools, battery packs, and small renewable-energy setups.
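
For the motor and drive case, efficiency compares calibrated mechanical output with calibrated electrical input. A minimal sketch, assuming torque in newton-metres and speed in revolutions per minute and using P = τω with ω = 2π·rpm/60; the function names and the test point are illustrative.

```python
import math


def shaft_power_w(torque_nm: float, speed_rpm: float) -> float:
    """Mechanical power P = torque * angular speed, with omega = 2*pi*rpm/60 in rad/s."""
    return torque_nm * speed_rpm * 2.0 * math.pi / 60.0


def efficiency(p_out_w: float, p_in_w: float) -> float:
    """Efficiency as a fraction; both powers are assumed to be calibrated values."""
    if p_in_w <= 0:
        raise ValueError("input power must be positive")
    return p_out_w / p_in_w


# Hypothetical test point: 10 N·m at 3000 rpm with 3.5 kW of calibrated electrical input.
p_out = shaft_power_w(10.0, 3000.0)
print(f"shaft power {p_out:.1f} W, efficiency {efficiency(p_out, 3500.0):.1%}")
```

An error in either power reading goes straight into the efficiency figure, which is why both sides need calibration.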

Tools, standards, and best practices

Essential tools include a calibrated wattmeter, precision shunt resistors, reference loads, temperature-control equipment, and test fixtures designed to prevent ground loops. Traceability runs through national metrology institutes, which maintain the reference standards, while standards bodies publish the procedures and guidelines. For example, the National Institute of Standards and Technology (NIST) and international ISO documents outline calibration procedures, uncertainty evaluation, and quality-management expectations. Best practices include documenting traceability, building an uncertainty budget, and maintaining a calibration log. Schedule periodic checks and service intervals, and keep equipment within the manufacturer-recommended operating conditions. When done correctly, calibration becomes a repeatable, auditable process rather than a one-off adjustment.
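
An uncertainty budget lists each contribution as a standard uncertainty and combines them. The sketch below uses the root-sum-of-squares combination from the GUM (Guide to the Expression of Uncertainty in Measurement) for uncorrelated inputs, with a coverage factor k = 2 for the expanded uncertainty. The budget entries and their values are hypothetical placeholders, not recommended figures.

```python
import math
from dataclasses import dataclass


@dataclass
class Contribution:
    """One line of an uncertainty budget, expressed as a standard uncertainty."""
    source: str
    std_uncertainty: float    # standard uncertainty of the input quantity
    sensitivity: float = 1.0  # watts per unit of the input quantity


def rectangular(half_width: float) -> float:
    """Standard uncertainty of a rectangular distribution of half-width a: a / sqrt(3)."""
    return half_width / math.sqrt(3.0)


def combined_uncertainty(budget: list[Contribution]) -> float:
    """Root-sum-of-squares combination, valid for uncorrelated inputs."""
    return math.sqrt(sum((c.sensitivity * c.std_uncertainty) ** 2 for c in budget))


def expanded_uncertainty(budget: list[Contribution], k: float = 2.0) -> float:
    """Expanded uncertainty U = k * u_c; k = 2 gives roughly 95 % coverage for a normal distribution."""
    return k * combined_uncertainty(budget)


# Hypothetical budget for a 100 W reading, all values in watts.
budget = [
    Contribution("reference wattmeter, certificate U = 0.10 W at k=2", 0.10 / 2),
    Contribution("display resolution 0.1 W (half-width 0.05 W)", rectangular(0.05)),
    Contribution("repeatability (standard deviation of the mean)", 0.03),
]
print(f"u_c = {combined_uncertainty(budget):.3f} W, "
      f"U (k=2) = {expanded_uncertainty(budget):.3f} W")
```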

Calibrated power in digital systems and signal integrity

In digital systems, measured power consumption depends on switching activity, clock rates, and low-power or shutdown states. Calibrated power metrics help engineers budget energy, estimate thermal output, and optimize performance per watt. For high-speed interfaces and processors, capturing transient power requires high-bandwidth instruments and careful synchronization of voltage and current sampling. Calibration here involves confirming that the instrument's response matches the actual load waveform and, in AC systems, accounting for reactive power. By applying calibrated power numbers, teams can compare devices from different vendors on a level playing field and track efficiency improvements across design iterations.
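
When voltage and current are sampled together, real power is the mean of their product, while apparent power is the product of their RMS values. The sketch below assumes time-aligned samples spanning a whole number of AC cycles. It reports sqrt(S² − P²) as "nonactive" power because that quantity equals reactive power only for pure sinusoids. The function name and the synthetic waveform are illustrative.

```python
import math
from typing import Sequence


def power_metrics(v: Sequence[float], i: Sequence[float]) -> dict[str, float]:
    """Real, apparent, and nonactive power from simultaneously sampled v and i.

    Assumes the samples are time-aligned and span a whole number of AC cycles.
    """
    n = len(v)
    if n == 0 or n != len(i):
        raise ValueError("need equal-length, non-empty sample arrays")
    real_w = sum(vk * ik for vk, ik in zip(v, i)) / n  # mean instantaneous power
    v_rms = math.sqrt(sum(vk * vk for vk in v) / n)
    i_rms = math.sqrt(sum(ik * ik for ik in i) / n)
    apparent_va = v_rms * i_rms
    # sqrt(S^2 - P^2) equals reactive power Q for pure sinusoids; with harmonics
    # it also includes distortion, so it is reported as "nonactive".
    nonactive = math.sqrt(max(apparent_va ** 2 - real_w ** 2, 0.0))
    pf = real_w / apparent_va if apparent_va > 0 else 0.0
    return {"real_w": real_w, "apparent_va": apparent_va,
            "nonactive": nonactive, "power_factor": pf}


# Synthetic example: 230 V rms and 5 A rms sine waves, current lagging by 60 degrees,
# sampled 1000 times over exactly one cycle.
N = 1000
phi = math.pi / 3
v = [230 * math.sqrt(2) * math.sin(2 * math.pi * k / N) for k in range(N)]
i = [5 * math.sqrt(2) * math.sin(2 * math.pi * k / N - phi) for k in range(N)]
print(power_metrics(v, i))
```

If the sample window does not cover whole cycles, or the channels are skewed in time, the real-power average is biased; that is one reason instrument bandwidth and synchronization matter.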

Do it yourself: practical calibration checklist

Prepare a plan with clear goals, reference standards, and safety precautions. Then work through these steps:

1. Identify your reference power levels and a traceable standard you can compare against.

2. Assemble the measurement chain, wire the load, and verify connections.

3. Collect reference readings at multiple points, build your correction curve, and apply it to future measurements.

4. Document every assumption, measurement, and environmental condition.

5. Schedule rechecks after equipment changes or long-term use.

The Calibrate Point team recommends starting with a simple, noncritical project to learn the workflow, then expanding to more demanding tasks. Calibrate Point's verdict is that calibrated power significantly improves reliability, decision quality, and confidence in results for both hobbyists and professionals.
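
The documentation and recheck steps of this checklist can be tracked with a small log. The minimal Python sketch below is an illustration only: the record fields, the reference identifier "REF-001", the tolerance, and the 365-day default interval are all assumptions, not a standard schema or a required schedule.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class CalibrationRecord:
    """One calibration-log entry; field names are illustrative, not a standard schema."""
    instrument: str
    performed_on: date
    reference_id: str   # identifier of the traceable standard used (hypothetical)
    ambient_c: float    # environmental condition at calibration time
    points: list[tuple[float, float]] = field(default_factory=list)  # (raw W, reference W)
    notes: str = ""


def verify_point(corrected_w: float, reference_w: float, tolerance_w: float) -> bool:
    """True if a corrected reading agrees with the reference within ±tolerance_w."""
    return abs(corrected_w - reference_w) <= tolerance_w


def recheck_due(record: CalibrationRecord, today: date,
                interval_days: int = 365, equipment_changed: bool = False) -> bool:
    """Due once the interval has elapsed, or immediately after an equipment change."""
    return equipment_changed or today >= record.performed_on + timedelta(days=interval_days)


log = [CalibrationRecord("bench wattmeter", date(2024, 1, 15), "REF-001", 23.0,
                         points=[(0.0, 0.0), (50.0, 50.3)], notes="first run")]
print(recheck_due(log[-1], date.today()))
print(verify_point(50.2, 50.3, tolerance_w=0.25))
```

Passing `equipment_changed=True` covers the "recheck after equipment changes" rule regardless of the calendar interval.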

Questions & Answers

What is calibrated power and why should I care?

Calibrated power is the true output you measure after correcting readings for bias and systematic error. It reflects device performance under defined conditions and is essential for reliable testing, comparison, and safety.

How is calibrated power different from rated power?

Rated power is the manufacturer's nominal value under specified rating conditions. Calibrated power is the value you measure, corrected for instrument bias and reported with its test conditions, so it gives a more accurate picture of real performance.

What instruments are used to calibrate power?

Typical tools include calibrated wattmeters, precision shunts, reference loads, and temperature-controlled environments. Readings are traced to standards to ensure accuracy.

How often should calibration be performed?

Calibration frequency depends on usage, environment, and instrument stability. In demanding settings, calibrate quarterly or after major repairs; for hobby projects, annual checks may suffice.

Can I calibrate power at home?

Basic calibration is possible for simple measurements, but professional-grade accuracy requires traceable standards and specialized equipment. For critical work, use a certified lab or metrology service.

Why does temperature matter for calibrated power?

Temperature affects sensor drift, device resistance, and power consumption. Calibrate across the operating range and document conditions to maintain accuracy.

Key Takeaways

- Validate measurements against traceable references

- Apply calibration curves to raw readings

- Control temperature and environmental factors to limit drift

- Document procedures and uncertainties for traceability

- Schedule regular recalibration and updates