Difference Between Absorbance and Calibration Curve

Explore the difference between absorbance and calibration curves, how each method works in quantitative spectrophotometry, and practical guidelines for accurate, traceable results.

In quantitative spectroscopy, the difference between absorbance and a calibration curve is fundamental: absorbance is a direct optical property measured by the instrument, while a calibration curve translates absorbance readings into concentrations using a fitted model. This comparison helps determine when to report concentration versus when to use raw absorbance for quick checks. The Calibrate Point perspective emphasizes choosing the right approach based on required accuracy and traceability.

Understanding Absorbance, Transmittance, and Calibration Concepts

Absorbance and calibration concepts form the backbone of quantitative spectrophotometry, and the difference between absorbance and a calibration curve becomes central when you move from detecting a signal to reporting a concentration. The spectrophotometer measures the intensity of light transmitted through the sample (I) relative to the intensity transmitted through a blank (I0). Transmittance is T = I/I0, and absorbance is A = −log10(T). Absorbance depends on the identity and concentration of the absorbing species, the path length of light through the sample, and the chosen wavelength. A calibration curve, in contrast, is an empirical model that maps measured absorbance values to known concentrations using standards and a regression fit. This distinction determines whether you can report a defensible concentration or only a qualitative or semi-quantitative reading. According to Calibrate Point, recognizing it reduces bias in quantitative results and supports traceability across laboratories. In practice, you often start with absorbance to confirm a signal and check instrument performance, then decide whether a calibration curve is needed for reliable quantification. Framing the workflow this way helps you set appropriate blanks, baseline corrections, and validation steps, which improves reproducibility across runs.
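Because the instrument actually measures light intensity, a minimal sketch of how absorbance is derived from transmittance may help. It assumes the blank reading serves as the reference intensity I0; the function names and detector readings are hypothetical, not any instrument's API.

```python
import math


def transmittance(sample_intensity: float, blank_intensity: float) -> float:
    """Fraction of light transmitted by the sample relative to the blank (T = I / I0)."""
    if sample_intensity <= 0 or blank_intensity <= 0:
        raise ValueError("intensities must be positive")
    return sample_intensity / blank_intensity


def absorbance(sample_intensity: float, blank_intensity: float) -> float:
    """A = -log10(T); referencing to the blank removes solvent and cuvette losses."""
    return -math.log10(transmittance(sample_intensity, blank_intensity))


# Hypothetical detector readings in arbitrary units
blank_reading = 1000.0   # light through the blank (I0)
sample_reading = 100.0   # light through the sample (I)

print(f"T = {transmittance(sample_reading, blank_reading):.2f}")  # T = 0.10
print(f"A = {absorbance(sample_reading, blank_reading):.2f}")     # A = 1.00
```

A tenfold drop in transmitted light corresponds to one absorbance unit, so an absorbance of 2 means only 1 % of the light reaches the detector, which is where stray light starts to matter.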

Core Principles: Beer's Law, Linearity, and Limitations

At the heart of absorbance-based measurements lies Beer's Law, A = εlc, which links absorbance (A) to concentration (c) through the molar absorptivity (ε) and the path length (l). Within a given system, absorbance should therefore increase linearly with concentration over a defined range, provided the path length and ε remain constant. Real samples introduce deviations: aggregation of the absorbing species, light scattering, and solvent effects can distort linearity, while instrumental factors such as stray light (which causes negative deviations at high absorbance) and detector saturation can change the apparent slope or shift the intercept. The difference between absorbance and a calibration curve is therefore practical as well as mathematical. A calibration curve captures the instrument's observed response to known concentrations, so consistent systematic effects are built into the model. When the curve is verified to be linear over the working range (R^2 close to 1.0 and residuals with no systematic trend), a simple linear equation can be used to interpolate unknowns. When the response is nonlinear, higher-order fits or other models are needed, and the calibration curve becomes essential for accurate quantification. The Calibrate Point team notes that understanding Beer's Law helps diagnose why a curve behaves nonlinearly.
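When ε and l are known, Beer's Law can be solved directly for concentration. The following sketch assumes an ideal, perfectly linear system; the function names are hypothetical, and ε = 6220 L mol⁻¹ cm⁻¹ (the value commonly cited for NADH at 340 nm) is used only as an illustrative input.

```python
def beer_lambert_concentration(absorbance: float, epsilon: float, path_cm: float) -> float:
    """Solve A = epsilon * l * c for c in mol/L (epsilon in L/(mol*cm), l in cm)."""
    if epsilon <= 0 or path_cm <= 0:
        raise ValueError("epsilon and path length must be positive")
    return absorbance / (epsilon * path_cm)


def beer_lambert_absorbance(conc_molar: float, epsilon: float, path_cm: float) -> float:
    """Absorbance predicted by an ideal, perfectly linear Beer's Law system."""
    return epsilon * path_cm * conc_molar


# Illustrative inputs: epsilon = 6220 L/(mol*cm), 1 cm cuvette, measured A = 0.311
eps, path = 6220.0, 1.0
c = beer_lambert_concentration(0.311, eps, path)
print(f"c = {c:.2e} mol/L")                                          # c = 5.00e-05 mol/L
print(f"A at 2c = {beer_lambert_absorbance(2 * c, eps, path):.3f}")  # A at 2c = 0.622
```

The ideal model predicts that doubling c doubles A; a calibration curve is how you confirm whether a real system actually behaves that way over the working range.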

What Does a Calibration Curve Do?

A calibration curve translates optical signals into concentrations. To build one, you prepare a series of standard solutions with known concentrations spanning the expected measurement range. For each standard, you measure the absorbance at the target wavelength, usually after blank correction. The resulting (concentration, absorbance) pairs are fitted with a model, typically a straight line, A = mc + b, or a polynomial for nonlinear systems. The fitted equation, together with its statistics (slope m, intercept b, and R^2), becomes the reference for estimating unknowns; for a linear fit, c = (A − b)/m. A calibration curve also provides a built-in alert: if an unknown's absorbance falls outside the range covered by the standards, the result is an extrapolation and should be re-measured (for example, after dilution) or supported by a new calibration. This approach underpins quantitative accuracy because it captures the instrument's actual response at the time of calibration, including any minor departures from ideal ε or path length across the working range. The calibration curve thus converts absorbance into a calibrated, concentration-based result, and the calibration model should be documented meticulously.
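The fit-then-invert procedure can be sketched in a few lines of standard-library Python. This is an illustration, not a validated implementation: it assumes ordinary least squares with absorbance as the dependent variable, the class and function names are hypothetical, and the standards are invented example data. It refuses to report concentrations outside the range of the standards, mirroring the alert mechanism described above.

```python
from dataclasses import dataclass


@dataclass
class LinearCalibration:
    slope: float       # m, absorbance per concentration unit
    intercept: float   # b, absorbance at zero concentration
    r_squared: float
    c_min: float       # lowest standard concentration
    c_max: float       # highest standard concentration

    def concentration(self, absorbance: float) -> float:
        """Invert A = m*c + b to c = (A - b)/m, refusing to extrapolate."""
        c = (absorbance - self.intercept) / self.slope
        if not (self.c_min <= c <= self.c_max):
            raise ValueError(f"c = {c:.3g} lies outside the calibrated range "
                             f"[{self.c_min}, {self.c_max}]; dilute or recalibrate")
        return c


def fit_linear_calibration(conc: list[float], absorb: list[float]) -> LinearCalibration:
    """Ordinary least-squares fit of absorbance (y) against standard concentration (x)."""
    n = len(conc)
    if n < 2 or n != len(absorb):
        raise ValueError("need matching lists of at least two standards")
    x_mean = sum(conc) / n
    y_mean = sum(absorb) / n
    sxx = sum((x - x_mean) ** 2 for x in conc)
    if sxx == 0:
        raise ValueError("standards must span more than one concentration")
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(conc, absorb))
    m = sxy / sxx
    b = y_mean - m * x_mean
    ss_res = sum((y - (m * x + b)) ** 2 for x, y in zip(conc, absorb))
    ss_tot = sum((y - y_mean) ** 2 for y in absorb)
    return LinearCalibration(m, b, 1.0 - ss_res / ss_tot, min(conc), max(conc))


standards = [0.0, 2.0, 4.0, 6.0, 8.0, 10.0]            # mg/L, hypothetical
readings = [0.002, 0.101, 0.199, 0.302, 0.398, 0.501]   # blank-corrected A, hypothetical
cal = fit_linear_calibration(standards, readings)
print(f"A = {cal.slope:.4f}*c + {cal.intercept:.4f}  (R^2 = {cal.r_squared:.4f})")
print(f"Unknown at A = 0.250 -> {cal.concentration(0.250):.2f} mg/L")
try:
    cal.concentration(0.700)   # would require extrapolating beyond 10 mg/L
except ValueError as err:
    print("Rejected:", err)
```

Keeping the concentration range on the calibration object means the range check travels with the curve whenever it is reused for later samples.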

When Absorbance Is Sufficient: Quick Checks and Context

There are legitimate situations where you can rely on absorbance readings without building a full calibration curve. For quick screening in a controlled setting, you may know the sample's expected concentration range from prior experiments, standard operating procedures, or validated methods. In such cases, using absorbance with a known rough relationship can provide rapid results and decision points, such as flagging samples above or below a threshold. Still, this approach is inherently less precise and reproducible than a calibrated method. Baseline drift, solvent differences, and instrument stability can skew absorbance values, especially if you change cuvettes, lamps, or detectors between runs. Additionally, different wavelengths or spectral features can alter sensitivity, making cross-sample comparisons risky. The key is to document the assumed relationship, keep a consistent setup, and verify periodically that the absorbance-based estimates still align with independent measurements. In practice, absorbance can be a valuable first-pass indicator, but you should plan for a calibration curve if you require robust, comparable concentrations. Calibrate Point found that relying solely on absorbance often leads to underestimation of uncertainty in reported values.
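For threshold-style screening, the logic can stay entirely in absorbance units. The sketch below is hypothetical: the function name, the 0.40 threshold, and the 1.5 absorbance-unit upper limit are placeholders that a real method would take from its own validation data, and nothing here converts absorbance to concentration.

```python
def screen_by_absorbance(readings: dict[str, float], threshold: float,
                         max_reliable_a: float = 1.5) -> dict[str, str]:
    """Classify blank-corrected absorbance readings against a screening threshold.

    `threshold` and `max_reliable_a` are method-specific assumptions that must
    come from prior validation at a fixed wavelength and path length.
    """
    flags = {}
    for sample_id, a in readings.items():
        if a > max_reliable_a:
            flags[sample_id] = "re-measure (above reliable range; dilute)"
        elif a >= threshold:
            flags[sample_id] = "above threshold"
        else:
            flags[sample_id] = "below threshold"
    return flags


# Hypothetical blank-corrected readings taken with an unchanged setup
results = screen_by_absorbance({"S1": 0.12, "S2": 0.48, "S3": 2.3}, threshold=0.40)
for sample_id, flag in results.items():
    print(sample_id, flag)
```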

When Calibration Curves Are Essential: Accuracy and Validation

A calibration curve becomes essential when precise, comparable concentrations are required across samples, days, or instruments. By anchoring measurements to standards run on the same instrument, you account for its actual lamp output, detector response, and minor solvent effects that would otherwise bias results; periodic recalibration keeps that correction current as these factors change. A well-designed curve includes multiple standards covering the expected concentration range, replicates to estimate measurement uncertainty, and blanks to set the zero baseline. Validation steps, such as checking the linearity of the curve, comparing results across instruments, and recalibrating periodically, help ensure ongoing accuracy. The difference between absorbance and a calibration curve is most evident in how results are reported: with a curve, unknowns are converted into concentrations through a regression model that yields an explicit uncertainty estimate. In professional practice, reporting concentrations with traceable calibration data supports quality systems and regulatory expectations. The Calibrate Point team emphasizes planning ahead: define the working range, select appropriate standards, and document the fitting method to enable future audits and reproducibility. Standards traceable to certified reference materials, such as those issued by national metrology institutes, further strengthen confidence in the curve.
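One common way to obtain that uncertainty estimate for a straight-line calibration is the standard error of an inverse prediction. The sketch below assumes ordinary least squares, constant absorbance noise across the range, and negligible uncertainty in the standards' concentrations; the function name and data are hypothetical, and a formal uncertainty budget would also include contributions such as standard preparation.

```python
import math


def predict_with_uncertainty(conc, absorb, a_unknown, n_replicates=1):
    """Predict c from an OLS line A = m*c + b and its standard uncertainty s_c.

    Uses the widely taught inverse-prediction expression
        s_c = (s_yx / |m|) * sqrt(1/k + 1/n + (A0 - A_mean)**2 / (m**2 * Sxx))
    with k = replicate readings averaged into A0 and n = number of standards.
    """
    n = len(conc)
    if n < 3 or n != len(absorb):
        raise ValueError("need at least three matched standards")
    x_mean = sum(conc) / n
    y_mean = sum(absorb) / n
    sxx = sum((x - x_mean) ** 2 for x in conc)
    m = sum((x - x_mean) * (y - y_mean) for x, y in zip(conc, absorb)) / sxx
    b = y_mean - m * x_mean
    # Residual standard deviation of the fit, with n - 2 degrees of freedom
    s_yx = math.sqrt(sum((y - (m * x + b)) ** 2 for x, y in zip(conc, absorb)) / (n - 2))
    c0 = (a_unknown - b) / m
    s_c = (s_yx / abs(m)) * math.sqrt(
        1 / n_replicates + 1 / n + (a_unknown - y_mean) ** 2 / (m ** 2 * sxx)
    )
    return c0, s_c


standards = [0.0, 2.0, 4.0, 6.0, 8.0, 10.0]            # mg/L, hypothetical
readings = [0.002, 0.101, 0.199, 0.302, 0.398, 0.501]   # blank-corrected A, hypothetical
c0, s_c = predict_with_uncertainty(standards, readings, a_unknown=0.250, n_replicates=3)
print(f"c = {c0:.3f} mg/L, standard uncertainty {s_c:.3f} mg/L")
```

For a confidence interval, multiply s_c by the Student t value for n − 2 degrees of freedom. Because s_c is smallest near the centroid of the standards, centring the working range on the expected sample values pays off directly in the reported uncertainty.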

Common Errors and How to Correct Them

Several frequent errors blur the distinction between absorbance and calibration curves. Failing to blank-correct leaves a nonzero baseline that inflates absorbance readings, compromising both direct measurements and curve fitting. Using an inappropriate wavelength, or too broad a spectral bandwidth, can reduce sensitivity and cause departures from linearity. In calibration, using standards that extend beyond the linear working range, or applying a linear fit to a nonlinear region, leads to biased concentrations. Instrument drift over time, temperature changes, and cuvette inconsistencies (path length and cleanliness) add variability; a calibration curve accounts for these effects only when standards and samples are measured under the same conditions, so drift after calibration must be caught with quality checks. Finally, neglecting residual analysis or failing to validate the curve with independent samples undermines credibility. To correct these errors, keep instrumentation consistent, blank-correct every run, verify linearity with multiple standards, and include quality checks such as back-calculating the concentrations of known samples. The result is a clearer separation between raw absorbance and calibrated concentration, with less bias and better traceability. Calibrate Point recommends routine checks as part of a calibration program.
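Residual analysis and back-calculation are easy to automate. The sketch below uses hypothetical, deliberately curved data to show how a straight-line fit leaves a structured residual pattern and poor recovery at the low end, even though R^2 for these numbers is still above 0.99. The function name is hypothetical, and the ±5 % recovery window is a placeholder, not a recommended acceptance criterion.

```python
def back_calculate(conc, absorb):
    """Fit A = m*c + b, then report each standard's residual and % recovery.

    A systematic residual pattern (e.g. negative at both ends, positive in the
    middle) suggests curvature that R^2 alone can hide.
    """
    n = len(conc)
    x_mean, y_mean = sum(conc) / n, sum(absorb) / n
    sxx = sum((x - x_mean) ** 2 for x in conc)
    m = sum((x - x_mean) * (y - y_mean) for x, y in zip(conc, absorb)) / sxx
    b = y_mean - m * x_mean
    report = []
    for x, y in zip(conc, absorb):
        residual = y - (m * x + b)
        c_back = (y - b) / m                                  # concentration read off the fit
        recovery = 100.0 * c_back / x if x != 0 else None     # undefined for a zero standard
        report.append((x, residual, recovery))
    return report


standards = [2, 4, 6, 8, 10]                 # mg/L, hypothetical
readings = [0.20, 0.39, 0.56, 0.71, 0.83]    # hypothetical, curving downward
for c, res, rec in back_calculate(standards, readings):
    rec_text = "n/a" if rec is None else f"{rec:.1f}%"
    flag = "" if rec is None or 95.0 <= rec <= 105.0 else "  <- check"
    print(f"{c:>4} mg/L  residual {res:+.4f}  recovery {rec_text}{flag}")
```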

Step-by-Step Walkthrough: From Sample to Concentration Using a Calibration Curve

1) Define the target range and select standards that bracket the expected sample concentrations.
2) Prepare the standards by precise dilution and measure their absorbance at the chosen wavelength after blank correction.
3) Fit the data to an appropriate model (linear for many spectrophotometric methods; nonlinear if the residuals indicate curvature).
4) Record the equation, R^2, and residuals as part of the calibration documentation.
5) Measure the unknown sample, apply blank correction, and convert its absorbance to concentration with the fitted equation, confirming that it falls within the calibrated range.
6) Report the concentration with its uncertainty and the calibration range.
7) Validate by analyzing a separate sample of known concentration and comparing the measured value to its true value.
8) After maintenance or a lamp change, allow the instrument to re-equilibrate and rebuild or re-verify the calibration before measuring further samples.

This workflow operationalizes the difference between absorbance and a calibration curve to deliver reliable quantitative results.
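The walkthrough can be condensed into a single function for one batch. This is a hedged sketch: it assumes replicate readings at a single wavelength, a straight-line model, and a check sample of known concentration; the function names, the 90 to 110 % recovery window, and all readings are hypothetical placeholders.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = m*x + b."""
    x_bar, y_bar = mean(x), mean(y)
    m = (sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
         / sum((xi - x_bar) ** 2 for xi in x))
    return m, y_bar - m * x_bar


def run_calibration(std_conc, std_raw, blank_raw, unknown_raw, check_raw, check_true,
                    recovery_limits=(90.0, 110.0)):
    """Steps 2 to 7 of the walkthrough for one batch (all readings at one wavelength)."""
    blank = mean(blank_raw)                                # step 2: blank from replicates
    std_abs = [mean(reps) - blank for reps in std_raw]     # blank-corrected standards
    m, b = fit_line(std_conc, std_abs)                     # step 3: linear model
    lo, hi = min(std_conc), max(std_conc)

    def to_conc(raw_reps):
        c = (mean(raw_reps) - blank - b) / m               # step 5: invert A = m*c + b
        if not lo <= c <= hi:                              # step 6: report only in range
            raise ValueError(f"{c:.3g} outside calibrated range {lo} to {hi}")
        return c

    c_unknown = to_conc(unknown_raw)
    recovery = 100.0 * to_conc(check_raw) / check_true    # step 7: independent check
    passed = recovery_limits[0] <= recovery <= recovery_limits[1]
    return {"slope": m, "intercept": b, "c_unknown": c_unknown,
            "check_recovery_pct": recovery, "check_passed": passed}


result = run_calibration(
    std_conc=[1.0, 2.0, 4.0, 6.0, 8.0],                   # mg/L, hypothetical
    std_raw=[[0.060, 0.062], [0.110, 0.112], [0.210, 0.208],
             [0.311, 0.309], [0.410, 0.412]],             # duplicate readings
    blank_raw=[0.011, 0.009, 0.010],
    unknown_raw=[0.262, 0.258],
    check_raw=[0.160, 0.160], check_true=3.0,
)
print(result)
```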

Practical Tips for Lab Practice

- Use matched cuvettes (quartz for UV measurements) and clean them meticulously to avoid scatter that affects absorbance.
- Always blank-correct and report the blank value.
- Document wavelength, path length, and temperature; small changes undermine comparability.
- Build calibration curves from fresh standards and verify each curve with independent samples.
- Track instrument drift by running quality control samples between measurements.
- When transferring a method to another instrument, establish a new calibration curve on that system.

These practices reinforce the difference between absorbance and a calibration curve by showing how calibration combines instrument response with known concentrations to produce robust, traceable results.
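To make the drift-tracking tip concrete, the sketch below compares each quality-control result against its known value and stops at the first one out of tolerance. The function name, the ±5 % tolerance, and the QC values are placeholder assumptions; real acceptance limits come from your method validation or quality system.

```python
def first_qc_failure(qc_results, target, tolerance_pct=5.0):
    """Return the index of the first QC result outside +/- tolerance_pct of target, else None."""
    if target == 0:
        raise ValueError("target must be non-zero for a percentage check")
    for i, value in enumerate(qc_results):
        deviation_pct = 100.0 * (value - target) / target
        if abs(deviation_pct) > tolerance_pct:
            return i
    return None


# Hypothetical QC standard (true value 4.00 mg/L) read back between sample groups
qc_series = [4.02, 3.97, 4.08, 4.23]
failure = first_qc_failure(qc_series, target=4.00)
if failure is None:
    print("All QC results within tolerance")
else:
    print(f"QC #{failure + 1} out of tolerance: investigate drift and recalibrate")
```

Results measured after the failing QC point are the ones to hold back until the cause of the drift is found and the calibration is re-established.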

Quick Reference: When to Use Each Method

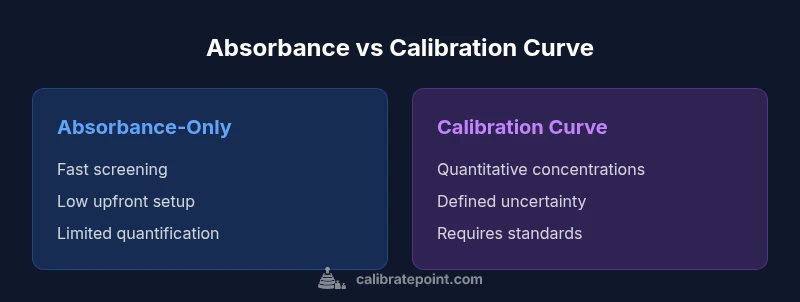

- Absorbance-only: best for rapid screening when concentration ranges are known and quick decisions are needed, or when a validated, instrument-specific relationship is already established.
- Calibration curve: required for formal quantification, regulatory reporting, cross-day comparability, and whenever results must be traceable to standards.
- Always include blank corrections and document the curve's validity range.
- If nonlinearity appears, switch to an appropriate nonlinear model, or narrow the working range and dilute samples into it.
- The central difference between absorbance and a calibration curve is that the former is an instrumental signal, while the latter is a fitted statistical model that yields concentrations with defined uncertainty.

Comparison

| Feature | Absorbance-Only Approach | Calibration Curve Method |
|---|---|---|
| Data output | Raw absorbance readings | Concentrations calculated from a fitted model |
| Standards required | None (screening use) | A series of standards to construct the curve |
| Linearity/Range | Depends on instrument and wavelength | Defined by the range of the standards |
| Accuracy | Generally lower without calibration | Typically higher with a validated curve |
| Ease of use | Faster upfront | More setup, but robust results |
| Error sources | Blank correction and instrument drift | Calibration fit and sampling error |

Pros of the absorbance-only approach

- Faster upfront results for quick screening

- Lower initial setup when a full curve is not available

- Simple workflow suitable for small sample sets

- Minimal reagents or standards required in some contexts

- Immediate feedback on detectability

Cons of the absorbance-only approach

- Less quantitative accuracy without calibration

- Limited applicability across wide concentration ranges

- Higher sensitivity to baseline drift and instrument changes

- No explicit uncertainty tied to reported concentrations

Calibration curves deliver more accurate, traceable concentrations; absorbance-only methods suit quick screening but lack robust quantification.

Use calibration curves for formal quantification and regulatory reporting. Reserve absorbance-only methods for fast screening or when the range is tightly defined and prior validation supports the relationship.

Questions & Answers

What is the difference between absorbance and a calibration curve?

Absorbance is the optical signal measured by the instrument, while a calibration curve relates absorbance to concentration through standards and a fitted model. The curve enables conversion of raw signals into quantitative concentrations with an associated uncertainty.

Absorbance is the instrument signal; the calibration curve translates that signal into concentration with a known model and uncertainty.

When should I use a calibration curve instead of direct absorbance readings?

Use a calibration curve when you need quantitative concentrations that are comparable across samples or days, and when results must be traceable to standards. If quick screening suffices, absorbance alone may be acceptable.

Choose a calibration curve for precise, comparable concentrations; use absorbance for quick screening only when validated for your range.

How many standards do I need to build a reliable calibration curve?

Typically, you include several standards that bracket the expected sample range, with replicates to assess variability. The exact number depends on linearity, but a minimum of five points is common for linear calibration.

Use multiple standards across the range and replicate measurements to ensure reliability.

Can calibration curves be nonlinear?

Yes. If the response deviates from linearity, nonlinear models or piecewise fits are used. In such cases, the calibration curve remains essential for accurate concentration estimates.

Nonlinear curves are common; switch to appropriate models when linearity fails.
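As one example of a nonlinear model, a second-order polynomial can be fitted and then inverted by solving the quadratic for concentration. This is a minimal, dependency-free sketch with hypothetical function names and data; in practice a library routine such as NumPy's polynomial fitting would normally replace the hand-written solver.

```python
import math


def fit_quadratic(x, y):
    """Least-squares fit of y = a0 + a1*x + a2*x**2 via the 3x3 normal equations."""
    s = [sum(xi ** k for xi in x) for k in range(5)]                   # sums of x^k
    t = [sum(yi * xi ** k for xi, yi in zip(x, y)) for k in range(3)]  # sums of y*x^k
    M = [[s[0], s[1], s[2], t[0]],
         [s[1], s[2], s[3], t[1]],
         [s[2], s[3], s[4], t[2]]]                                     # augmented matrix
    for col in range(3):                       # Gaussian elimination, partial pivoting
        pivot = max(range(col, 3), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, 3):
            f = M[r][col] / M[col][col]
            for k in range(col, 4):
                M[r][k] -= f * M[col][k]
    a = [0.0, 0.0, 0.0]
    for r in (2, 1, 0):                        # back-substitution
        a[r] = (M[r][3] - sum(M[r][k] * a[k] for k in range(r + 1, 3))) / M[r][r]
    return a  # [a0, a1, a2]


def quadratic_concentration(a, absorbance, c_min, c_max):
    """Solve a2*c**2 + a1*c + (a0 - A) = 0; return the unique root inside [c_min, c_max]."""
    a0, a1, a2 = a
    if abs(a2) < 1e-15:                        # effectively linear
        roots = [(absorbance - a0) / a1]
    else:
        disc = a1 ** 2 - 4 * a2 * (a0 - absorbance)
        if disc < 0:
            raise ValueError("absorbance is not reachable by the fitted curve")
        sq = math.sqrt(disc)
        roots = [(-a1 + sq) / (2 * a2), (-a1 - sq) / (2 * a2)]
    in_range = [c for c in roots if c_min <= c <= c_max]
    if len(in_range) != 1:
        raise ValueError("no unique solution inside the calibrated range")
    return in_range[0]


standards = [0.0, 2.0, 4.0, 6.0, 8.0, 10.0]             # mg/L, hypothetical
readings = [0.001, 0.097, 0.185, 0.265, 0.337, 0.401]   # hypothetical, curving downward
coeffs = fit_quadratic(standards, readings)
print(f"Unknown at A = 0.300 -> {quadratic_concentration(coeffs, 0.300, 0.0, 10.0):.2f} mg/L")
```

Because a quadratic has two roots, the code keeps only the one inside the standards' range and rejects ambiguous cases, which is another reason never to extrapolate a nonlinear curve.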

What are common errors that affect absorbance measurements?

Common errors include improper blank correction, incorrect wavelength, instrument drift, and cuvette contamination. These can bias both absorbance readings and calibration curves.

Watch for blank errors, wrong wavelength, drift, and dirty cuvettes to keep readings reliable.

Is blank correction necessary in all cases?

Blank correction is essential to establish a proper zero baseline and minimize systematic error, especially when constructing calibration curves or comparing samples across runs.

Always blank-correct to avoid baseline bias in both methods.

Key Takeaways

- Prefer calibration curves for quantitative results with uncertainty.

- Use absorbance for rapid screening when supported by prior validation.

- Always blank-correct and verify linearity within range.

- Document standards, fitting method, and validation steps for traceability.