Calibrating an Accountability Coach: A Practical Guide

Learn how to calibrate an accountability coach program with clear metrics, templates, and practical steps. A Calibrate Point guide for DIY enthusiasts and professionals seeking reliable calibration guidance.

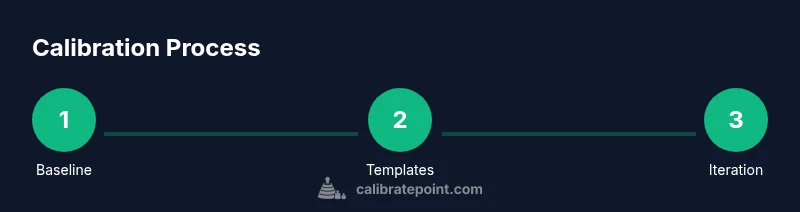

Goal: calibrate an accountability coach program so that coaching outcomes align with defined goals. Key requirements include clear success metrics, a chosen coaching model, baseline data, and a plan for ongoing feedback. This guide from Calibrate Point walks you through a practical, step-by-step approach, with actionable templates, checklists, and illustrated examples that you can adapt to your context.

Why calibrating an accountability coach matters

In any system where people are asked to deliver results, an accountability coach acts as both a navigator and a detector of drift. To calibrate accountability coaching practices, start by aligning the coach's role with overarching goals and individual outcomes. Calibration keeps conversations focused on progress and verifiable results rather than vague intentions. It also reduces bias by stabilizing expectations through a shared framework.

According to Calibrate Point, the most effective calibration begins with a clear, shared definition of success, a baseline of current performance, and a simple mechanism for feedback. This foundation makes it possible to compare engagements with a consistent yardstick and to adjust approaches without rewiring the entire program. In practice, calibration means documenting decisions, standardizing metrics, and iterating with real-world data. By treating calibration as an ongoing discipline, teams and individuals can minimize drift and maximize accountability without sacrificing flexibility. The result is a coaching process that reliably produces visible improvements and learning opportunities that stakeholders can trust.

Define success metrics for accountability coaching

Successful calibration starts with precise definitions of what counts as progress. Distinguish between outcome metrics (what changed in results), process metrics (how the coaching interactions unfold), and learner-centered metrics (confidence, motivation, and self-efficacy). For accountability coaching, consider metrics such as task completion rate over a defined period, consistency in follow-through, quality of deliverables, and time-to-first-action after coaching sessions. In addition, track engagement signals like session attendance, response latency to feedback, and the adoption rate of agreed action plans. Use a simple rubric to rate each metric on a common scale, and ensure owners are clear on who provides the data and when it is reviewed. As you calibrate, compare current results with baselines and set progressive targets that are challenging but attainable. Include qualitative indicators—narratives from participants, perceived usefulness of coaching, and observed behavior changes—to capture nuance that numbers alone miss. Calibration thrives on balanced data and frank conversations about what works and what needs adjustment.
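
As a rough illustration of the metrics above, the following sketch computes a task completion rate and a time-to-first-action figure, then maps a raw value onto a common rubric scale. The `ActionItem` record, its field names, the five-level scale, and the anchor values are all assumptions made for this example; adapt them to whatever your own data collection forms capture.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class ActionItem:
    """One agreed action from a coaching session (hypothetical record shape)."""
    agreed_at: datetime                   # when the action was agreed in the session
    first_action_at: Optional[datetime]   # first recorded work on the item, if any
    completed: bool                       # finished within the review period?


def completion_rate(items: list[ActionItem]) -> float:
    """Share of agreed actions completed in the period (0.0 when there are none)."""
    if not items:
        return 0.0
    return sum(item.completed for item in items) / len(items)


def mean_hours_to_first_action(items: list[ActionItem]) -> Optional[float]:
    """Average delay, in hours, between agreeing an action and first acting on it."""
    delays = [
        (item.first_action_at - item.agreed_at).total_seconds() / 3600
        for item in items
        if item.first_action_at is not None
    ]
    return sum(delays) / len(delays) if delays else None


def to_rubric_score(value: float, worst: float, best: float, levels: int = 5) -> int:
    """Map a raw metric onto a 1..levels rubric scale by linear interpolation.

    `worst` and `best` are anchor values you choose. They may be given in
    either order, so lower-is-better metrics (such as delays) work too.
    """
    if worst == best:
        raise ValueError("worst and best anchors must differ")
    fraction = (value - worst) / (best - worst)
    fraction = min(1.0, max(0.0, fraction))  # clamp values outside the anchors
    return 1 + round(fraction * (levels - 1))


if __name__ == "__main__":
    session_end = datetime(2024, 1, 8, 10, 0)
    items = [
        ActionItem(session_end, session_end + timedelta(hours=4), True),
        ActionItem(session_end, None, False),
    ]
    print(completion_rate(items), mean_hours_to_first_action(items))
```

Putting every metric on the same rubric scale is one possible way to make unlike measures (a percentage and a delay in hours, say) comparable in a review; the anchors should be agreed with the metric owners rather than taken from this sketch.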

Choose the right coaching model and approach

Not all accountability coaching is the same. The model you choose shapes how calibration occurs and what data you collect. A 1:1 coaching model provides deep personalization and easier data attribution, while group coaching or peer mentoring increases social accountability and exposes participants to a broader set of examples. Hybrid approaches combine the strengths of both, with structured check-ins and shared learning circles. When calibrating, align model choice with the types of goals, the culture of the team, and the availability of time. Also consider who facilitates the calibration itself: a dedicated calibrator, the coach, or a rotating facilitator from the client side. Document the rationale for the chosen model so future calibrations can reuse the same logic. Finally, establish a default cadence for calibration reviews (monthly, quarterly, or after major milestones) so the model remains consistent over time.

Design a calibration plan with feedback loops

A robust calibration plan includes baseline assessments, ongoing data collection, and explicit feedback loops. Start with a baseline audit of current coaching practices, data sources, and decision criteria. Create simple dashboards that track key metrics, note the questions being asked in coaching conversations, and flag areas where results diverge from targets. Build feedback loops into every cycle: after each coaching session, capture quick reflections; after a set period, run a formal review; and at major milestones, conduct a retrospective with stakeholders. Use checklists and templates to standardize data capture, ensuring that everyone reports in the same way. Calibrate Point’s approach emphasizes transparency: share dashboards with participants, invite constructive input, and document changes. As you implement, watch for data gaps or biases that could skew interpretation and address them promptly.
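
One way a dashboard might flag divergence from targets is to measure how much of the baseline-to-target gap each metric has closed and compare that with the progress expected at this point in the cycle. The sketch below assumes a hypothetical `MetricReading` record and an arbitrary tolerance of 0.15; neither comes from a specific tool.

```python
from dataclasses import dataclass


@dataclass
class MetricReading:
    """Baseline, target, and latest value for one metric (hypothetical shape)."""
    name: str
    baseline: float
    target: float
    current: float


def progress_toward_target(reading: MetricReading) -> float:
    """Fraction of the baseline-to-target gap closed so far.

    Works for higher-is-better and lower-is-better metrics alike, and can
    fall below 0 (regression) or exceed 1 (target overshot).
    """
    gap = reading.target - reading.baseline
    if gap == 0:
        return 1.0 if reading.current == reading.target else 0.0
    return (reading.current - reading.baseline) / gap


def flag_divergent(
    readings: list[MetricReading],
    expected_progress: float,
    tolerance: float = 0.15,  # assumed threshold; tune it to your context
) -> list[str]:
    """Names of metrics lagging expected progress by more than `tolerance`."""
    return [
        r.name
        for r in readings
        if progress_toward_target(r) < expected_progress - tolerance
    ]


if __name__ == "__main__":
    readings = [
        MetricReading("completion_rate_pct", baseline=60, target=80, current=70),
        MetricReading("hours_to_first_action", baseline=24, target=12, current=22),
    ]
    # Halfway through the cycle, roughly half the gap should be closed.
    print(flag_divergent(readings, expected_progress=0.5))
```

A flag here is only a prompt for a conversation in the next review, not a verdict; the qualitative notes captured after each session should inform how it is interpreted.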

Practical templates and tools for accountability calibration

Templates save time and reduce cognitive load during calibration. Key templates include a) goal-setting and action plan templates, b) a coaching session rubric, c) a data collection form for post-session reflections, and d) a quarterly calibration review template. Tools include lightweight project management boards, a simple CRM for tracking participants and coaches, and a data visualization tool to illustrate trends over time. Customize templates to your context, ensuring language is inclusive and findings are actionable. Keep all templates in a shared vault with version history so teams can refer back to decisions. When selecting tools, prioritize interoperability, data privacy, and ease of use to minimize friction. Finally, pilot the templates with a small group before full rollout to surface issues early.

Implementation: a practical 8‑week calibration sprint (example)

Plan an 8‑week sprint to implement calibration in a controlled, incremental way. Week 1 establishes baselines: collect existing coaching materials, data sources, and performance signals. Week 2 defines success metrics and governance roles. Week 3 introduces templates and a simple dashboard. Week 4 runs a mini-retrospective to adjust the data collection approach. Weeks 5–6 test the full cycle: conduct coaching sessions, capture reflections, and update dashboards. Week 7 analyzes results, identifies gaps, and proposes adjustments. Week 8 finalizes the calibration plan, publishes guidelines, and trains participants on the new workflow. Throughout, maintain open channels for feedback and document every decision. This structure helps teams align expectations, accelerate learning, and avoid scope creep.
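
If it helps to put the sprint on a calendar, the outline above can be expressed as data and turned into date ranges. This is a minimal sketch: the phase labels paraphrase the weeks described above, and the function name and start date are placeholders.

```python
from datetime import date, timedelta

# (first_week, last_week, focus), paraphrasing the sprint outline above
SPRINT_PHASES = [
    (1, 1, "Establish baselines"),
    (2, 2, "Define success metrics and governance roles"),
    (3, 3, "Introduce templates and a simple dashboard"),
    (4, 4, "Run a mini-retrospective on data collection"),
    (5, 6, "Test the full cycle"),
    (7, 7, "Analyze results and propose adjustments"),
    (8, 8, "Finalize the plan, publish guidelines, train participants"),
]


def sprint_calendar(start: date) -> list[tuple[date, date, str]]:
    """Return (phase_start, phase_end, focus) tuples with inclusive dates."""
    calendar = []
    for first_week, last_week, focus in SPRINT_PHASES:
        phase_start = start + timedelta(weeks=first_week - 1)
        phase_end = start + timedelta(weeks=last_week) - timedelta(days=1)
        calendar.append((phase_start, phase_end, focus))
    return calendar


if __name__ == "__main__":
    for phase_start, phase_end, focus in sprint_calendar(date(2024, 1, 1)):
        print(f"{phase_start} to {phase_end}: {focus}")
```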

Data ethics, privacy, and trust in calibration

Accountability coaching relies on sensitive data about motivation, performance, and behavior. Establish clear privacy boundaries and explain how data will be used, stored, and shared. Obtain informed consent from participants and anonymize data when possible. Use aggregated metrics for public reporting and reserve raw data for authorized stakeholders. Build trust by communicating decisions openly, sharing insights, and inviting participant input. Calibrate Point stresses that ethical calibration is foundational: without trust, data quality deteriorates and improvements stall. Always review data governance policies before launching a calibration effort and update them as the program evolves.
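
The sketch below shows one possible way to keep raw identifiers out of reports and to publish only aggregated figures. It replaces participant IDs with a keyed hash and suppresses any team too small to report safely. The record shape, the 12-character pseudonym length, and the minimum group size of 5 are assumptions for illustration. Note that a keyed hash is pseudonymization rather than full anonymization: anyone holding the key can re-link records, so the key needs the same protection as the raw data.

```python
import hashlib
import hmac
from collections import defaultdict
from statistics import mean


def pseudonymize(participant_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash so raw IDs stay out of reports."""
    digest = hmac.new(secret_key, participant_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:12]


def aggregate_by_team(records: list[dict], min_group_size: int = 5) -> dict[str, float]:
    """Average score per team, omitting teams below `min_group_size`.

    Each record is assumed to look like {"team": "A", "score": 4}.
    Small groups are dropped because an average over a few people can
    reveal individual results.
    """
    scores_by_team = defaultdict(list)
    for record in records:
        scores_by_team[record["team"]].append(record["score"])
    return {
        team: round(mean(scores), 2)
        for team, scores in scores_by_team.items()
        if len(scores) >= min_group_size
    }


if __name__ == "__main__":
    key = b"replace-with-a-secret-from-your-key-store"  # placeholder value
    print(pseudonymize("participant-017", key))
    records = [{"team": "A", "score": s} for s in (3, 4, 5, 4, 4)]
    records += [{"team": "B", "score": s} for s in (2, 5)]
    print(aggregate_by_team(records))
```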

Real-world case: a fictional department calibration scenario

In a mid-sized department, a consultant helps shift accountability culture by pairing a coach with small teams. Baseline interviews reveal mixed signals about accountability, and a pilot plan introduces weekly check-ins, a shared task board, and a simple rubric for progress. Over eight weeks, the department tracks completion rates, quality of outcomes, and perceived usefulness of coaching. Data shows gradual improvement in consistency and collaboration, while feedback highlights the value of clear action items and transparent decision making. The case demonstrates how a well-calibrated accountability coach can align individual effort with organizational goals and sustain momentum beyond the pilot.

Common pitfalls and how to avoid them

Be aware of common calibration pitfalls that erode effectiveness. Avoid unclear goals, inconsistent metrics, and data silos that make comparisons meaningless. Don’t rely on anecdotes alone; combine qualitative feedback with objective measures. Avoid overloading participants with too many new processes at once; phase in changes and provide training. Watch for feedback fatigue: schedule regular, short check-ins and provide simple channels for input. Finally, guard against unintended incentives that encourage gaming behaviors rather than genuine improvement. Regularly revisit the calibration plan and adjust based on evidence.

Measuring impact and sustaining calibration

Calibration is ongoing, not a one‑time event. Maintain a living set of metrics, dashboards, and templates that evolve with the program. Regularly refresh baselines, revisit governance roles, and collect ongoing qualitative feedback to capture nuance. Compare progress against targets, celebrate small wins, and scale successful practices across teams. Build a culture of learning where participants are empowered to challenge assumptions, experiment, and share lessons learned. The end goal is a reliable, transparent accountability coaching program that helps people perform better while maintaining trust and psychological safety. As Calibrate Point notes, sustainable calibration requires discipline, humility, and a willingness to adapt.

Tools & Materials

- Baseline assessment templates (Docs that capture current coaching practices, data sources, and decision criteria)

- Goal-setting and action plan templates (SMART goals and milestone templates)

- Coaching session rubric (Rubric to rate quality of coaching conversations)

- Data collection forms (Post-session reflections and outcome metrics forms)

- Dashboards / reporting templates (Simple dashboards to visualize metrics over time)

- Privacy & consent templates (Documents outlining data usage and participant consent)

- Pilot group (Small, consenting group to test calibration templates)

- Implementation playbook (Optional step-by-step guide for scaling across teams)

Steps

Estimated time: 8-12 weeks

1. Define baseline and scope

Identify the goals, participants, and data sources for the calibration effort. Establish what success looks like and how you’ll measure it.

Tip: Get executive sponsorship to ensure access to necessary data and decision-makers.

2. Identify metrics and data sources

Select a balanced set of outcome, process, and learner-centered metrics. Map each metric to a data source and owner.

Tip: Document data ownership to prevent gaps during reviews.

3. Create templates and dashboards

Develop goal-setting templates, rubrics, and a simple dashboard to visualize trends and gaps.

Tip: Keep labels consistent across metrics to avoid confusion.

4. Pilot with a small group

Test templates and data collection with a limited group to catch issues before wider rollout.

Tip: Collect both quantitative data and qualitative feedback.

5. Run a mini-retrospective

Review what worked, what didn’t, and what to adjust in the data collection approach.

Tip: Ask open-ended questions to surface hidden challenges.

6. Refine the calibration model

Update templates, data definitions, and governance based on pilot results.

Tip: Document all changes for traceability.

7. Prepare for scale

Lay out a rollout plan, training, and change-management steps for additional teams.

Tip: Pilot again in a second group to validate scalability.

8. Launch full program

Roll out the calibrated model, monitor early indicators, and adjust as needed.

Tip: Set up a feedback loop to capture ongoing insights.

9. Governance and accountability

Define roles, reporting lines, and escalation paths for calibration decisions.

Tip: Review governance quarterly to stay aligned with goals.

10. Sustain and evolve

Treat calibration as a living program that evolves with new goals and data.

Tip: Celebrate wins and share learnings across teams.
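
Step 2 asks you to map each metric to a data source and an owner. A small registry with a validation pass is one way to catch gaps before a review. The following is a sketch only: the `MetricDefinition` fields and the three category names mirror the outcome, process, and learner-centered split used in this guide, but the structure itself is an assumption, not a prescribed format.

```python
from dataclasses import dataclass

CATEGORIES = {"outcome", "process", "learner"}


@dataclass(frozen=True)
class MetricDefinition:
    """One entry in a hypothetical metric registry."""
    name: str
    category: str     # expected to be one of CATEGORIES
    data_source: str  # where the numbers come from
    owner: str        # who supplies the data for reviews


def validate_registry(metrics: list[MetricDefinition]) -> list[str]:
    """Return human-readable problems; an empty list means no gaps were found."""
    problems = []
    for metric in metrics:
        if metric.category not in CATEGORIES:
            problems.append(f"{metric.name}: unknown category '{metric.category}'")
        if not metric.data_source.strip():
            problems.append(f"{metric.name}: no data source")
        if not metric.owner.strip():
            problems.append(f"{metric.name}: no owner")
    # A balanced set needs at least one metric in every category.
    missing = CATEGORIES - {metric.category for metric in metrics}
    for category in sorted(missing):
        problems.append(f"no {category} metric defined")
    return problems


if __name__ == "__main__":
    registry = [
        MetricDefinition("task_completion_rate", "outcome", "shared task board", "team lead"),
        MetricDefinition("session_attendance", "process", "calendar export", ""),
    ]
    for problem in validate_registry(registry):
        print(problem)
```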

Questions & Answers

What is an accountability coach?

An accountability coach helps you define goals, track progress, and stay on task through regular check-ins and structured feedback. They align actions with intended outcomes and provide support to overcome obstacles.

An accountability coach helps you set goals, monitor progress, and stay on track through regular check-ins and feedback.

How do I know calibration is working?

Calibration demonstrates progress when the defined metrics trend toward targets, qualitative feedback improves over time, and stakeholders report increased clarity and momentum in action plans.

You know calibration is working when metrics improve and people report clearer, more consistent progress.

What metrics should I track?

Track a mix of outcome metrics (results achieved), process metrics (quality of coaching interactions), and learner metrics (confidence and motivation). Balance quantitative data with qualitative insights.

Track both results and how coaching happens, plus how confident people feel.

How often should calibration occur?

Schedule regular calibration reviews—monthly or quarterly—aligned with milestones to ensure the model remains effective and relevant.

Review calibration on a regular schedule, like every month or quarter.

What are common pitfalls?

Common pitfalls include vague goals, inconsistent metrics, and data silos. Mitigate by clarifying goals, standardizing data, and maintaining open communication.

Be wary of vague goals and messy data; keep things clear and open.

Do I need a dedicated calibrator?

A dedicated calibrator helps sustain consistency, but a rotating facilitator can work in smaller contexts. The key is clear governance and documented decisions.

You can start with a rotating facilitator, but keep governance clear and track decisions.

Key Takeaways

- Define clear success metrics before calibration.

- Choose a coaching model aligned to goals and context.

- Use simple dashboards to visualize progress.

- Pilot, then iterate before scaling.

- Maintain ethics and trust throughout the process.