Calibrate Job Reviews: A Practical How-To Guide for Fair, Consistent Evaluations

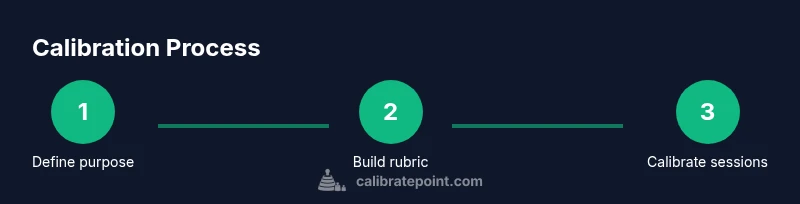

Learn a step-by-step method to calibrate job reviews, aligning ratings across teams, reducing bias, and enabling fair career development decisions. This guide covers rubrics, sampling, calibration sessions, and governance for sustainable improvements.

This guide helps you calibrate job reviews to improve consistency and fairness across teams. You’ll define a shared rubric, gather representative samples, and run calibration sessions to align ratings. By the end, you’ll have a standardized process, documented decisions, and clearer paths for development. According to Calibrate Point, transparent calibration reduces bias and strengthens talent decisions.

Why calibrating job reviews matters

Calibrating job reviews is about more than making ratings look uniform. It’s a deliberate effort to ensure that employees are evaluated using the same standards, regardless of who writes the review. When teams operate with inconsistent criteria, development plans, promotions, and even compensation can become unpredictable. A well-calibrated process improves transparency, fosters trust, and supports merit-based decisions. According to Calibrate Point, calibration helps organizations align talent decisions with strategic goals while reducing bias that might creep in through individual manager styles or departmental norms. This is especially important in matrixed organizations where multiple managers interact with a single employee across projects. By establishing shared expectations, you create a foundation for meaningful feedback, better performance conversations, and a culture that values objective evidence over subjective impressions.

For DIYers and professionals who implement calibration in real-world workplaces, the payoff isn’t theoretical. A clear rubric, backed by evidence from multiple evaluations, makes feedback actionable and trackable. You’ll be able to justify ratings, benchmark progress over time, and identify consistent gaps across teams. This section outlines how to frame your calibration effort, from governance to day-to-day practices, so you can start making reliable, equitable decisions right away.


Tools & Materials

- rubric template (A standardized form listing criteria and anchors for each job level)

- anonymized review samples (A representative, redacted set of performance reviews from various teams)

- calibration meeting agenda (A clear plan with goals, time blocks, and facilitator notes)

- scoring worksheet or software (Spreadsheet or lightweight tool to capture ratings and rationales)

- policy and role descriptions (Access to job descriptions, competency models, and promotion criteria)

- notes and reference documents (Guidelines on bias, inclusion, and HR compliance)

- training materials for managers (Quick-start guides for new calibrators)

Steps

Estimated time: 4-6 hours

- 1

Define purpose and scope

Clarify why calibration is needed, which roles it covers, and the time horizon for the ratings. Establish success criteria, such as alignment of ratings across teams or improved promotion outcomes, and agree on what constitutes a fair review.

Tip: Document the scope in a one-page charter and share it with all stakeholders to avoid scope creep.

- 2

Create or adapt a rubric

Develop a rubric that lists competencies, performance indicators, and anchors for each level. Ensure the language is precise and observable, with examples tied to real work situations.
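One way to keep a rubric precise is to store it as structured data, so that missing anchors are easy to spot before the pilot. The sketch below is only an illustration: the class names (`Competency`, `Rubric`), the `missing_anchors` helper, the three-point scale, and the example wording are all assumptions, not part of any standard rubric format.

```python
from dataclasses import dataclass, field


@dataclass
class Competency:
    """One rubric row: a competency plus an observable anchor per rating level."""
    name: str
    indicators: tuple[str, ...]
    anchors: dict[int, str]  # rating level -> observable behavior


@dataclass
class Rubric:
    job_level: str
    scale: tuple[int, ...]
    competencies: list[Competency] = field(default_factory=list)

    def missing_anchors(self) -> dict[str, list[int]]:
        """Return, per competency, the scale levels that have no anchor text."""
        gaps = {}
        for comp in self.competencies:
            missing = [
                level for level in self.scale
                if not comp.anchors.get(level, "").strip()
            ]
            if missing:
                gaps[comp.name] = missing
        return gaps


# Hypothetical example content, not taken from any real rubric.
rubric = Rubric(
    job_level="Engineer II",
    scale=(1, 2, 3),
    competencies=[
        Competency(
            name="Code quality",
            indicators=("Review feedback", "Defect rate"),
            anchors={
                1: "Changes often need rework after review.",
                2: "Changes pass review with minor comments.",
                3: "Changes set the standard others copy.",
            },
        ),
        Competency(
            name="Collaboration",
            indicators=("Peer feedback",),
            anchors={1: "Rarely shares context.", 3: "Proactively unblocks others."},
        ),
    ],
)

print(rubric.missing_anchors())  # {'Collaboration': [2]}
```

A check like this only confirms that every level has some anchor text. Whether the wording is observable and unambiguous still needs a human pilot.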

Tip: Pilot the rubric with a small sample to catch ambiguous wording before broader use.

- 3

Collect representative samples

Assemble reviews from diverse teams, levels, and projects to reflect the full range of performance. Include historical data if possible, but anonymize identities and sensitive details.
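If your reviews are available as records, sample selection can be sketched as a stratified draw followed by blinding. The example below assumes each review is a dictionary with hypothetical `employee`, `team`, `rating_band`, and `text` fields. The function names are illustrative, and the blinding step only strips structured fields; names that appear inside the free text would still need manual redaction.

```python
import random
from collections import defaultdict


def stratified_sample(reviews, per_group, seed=0):
    """Pick up to `per_group` reviews from each (team, rating_band) group."""
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced in an audit
    groups = defaultdict(list)
    for review in reviews:
        groups[(review["team"], review["rating_band"])].append(review)

    sample = []
    for group_key in sorted(groups):
        members = groups[group_key]
        sample.extend(rng.sample(members, min(per_group, len(members))))
    return sample


def anonymize(sample):
    """Split the sample into rater-facing records and a facilitator-only key."""
    blinded, key = [], {}
    for i, review in enumerate(sample, start=1):
        sample_id = f"S{i:03d}"
        blinded.append({"sample_id": sample_id, "text": review["text"]})
        key[sample_id] = {k: review[k] for k in ("employee", "team", "rating_band")}
    return blinded, key


# Hypothetical data: 2 teams x 3 rating bands x 3 reviews each.
reviews = [
    {
        "employee": f"emp-{team}-{band}-{n}",
        "team": team,
        "rating_band": band,
        "text": f"Placeholder review text #{n}",
    }
    for team in ("A", "B")
    for band in ("high", "mid", "low")
    for n in range(3)
]

sample = stratified_sample(reviews, per_group=1)
blinded, key = anonymize(sample)
print(len(blinded))  # 6
```

Keeping the original rating band in the facilitator key, rather than in the rater-facing records, lets reviewers rate each sample without seeing the earlier outcome.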

Tip: Aim for a sample that covers different outcomes (high, mid, and low performers) to test the rubric’s clarity.

- 4

Pre-read and categorize

Before calibration sessions, have each reviewer categorize a subset of reviews using the rubric. Note where language or anchors seem unclear or biased.
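Pre-read ratings can also help set the session agenda. A minimal sketch, assuming numeric ratings and hypothetical sample and rater IDs, orders samples by how far apart the pre-read ratings are so the group spends its time on the most contested reviews:

```python
def agenda_by_disagreement(pre_reads, min_spread=1):
    """Order samples by rating spread (max minus min), largest first."""
    flagged = []
    for sample_id, ratings in pre_reads.items():
        spread = max(ratings.values()) - min(ratings.values())
        if spread >= min_spread:
            flagged.append((sample_id, spread))
    # Sort by spread descending, then by sample ID for a stable order.
    return sorted(flagged, key=lambda item: (-item[1], item[0]))


# Hypothetical pre-read ratings on a 1-4 scale.
pre_reads = {
    "S001": {"rater_a": 3, "rater_b": 3, "rater_c": 3},
    "S002": {"rater_a": 2, "rater_b": 4, "rater_c": 3},
    "S003": {"rater_a": 1, "rater_b": 2, "rater_c": 1},
}

print(agenda_by_disagreement(pre_reads))  # [('S002', 2), ('S003', 1)]
```

The `min_spread` threshold is an assumption. Raise it if even small differences produce too long an agenda.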

Tip: Use a shared pre-read guide to reduce in-session confusion and speed up consensus.

- 5

Run calibration sessions

Facilitate small-group discussions to compare ratings on the same samples. Ask reviewers to justify each rating with concrete evidence, and agree on how each anchor should be interpreted.

Tip: Assign a rotating moderator to keep discussions focused and inclusive.

- 6

Analyze reliability and adjust

Compute inter-rater consistency by comparing ratings across reviewers. Identify systematic drifts or biases and adjust the rubric, anchors, or training accordingly.
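The guide does not prescribe a particular statistic. One common choice for two raters is Cohen's kappa, which corrects raw agreement for the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e). The sketch below computes it for each pair of raters and adds a simple drift check, the mean signed difference between each rater and the group mean. The rater names, ratings, and function names are hypothetical, and kappa as written treats ratings as unordered categories, so a near miss counts the same as a large miss.

```python
from collections import Counter
from itertools import combinations
from statistics import mean


def cohen_kappa(a, b):
    """Cohen's kappa for two raters who rated the same samples in the same order."""
    if len(a) != len(b) or not a:
        raise ValueError("rating lists must be non-empty and the same length")
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    freq_a, freq_b = Counter(a), Counter(b)
    # Chance agreement: probability both raters pick the same category independently.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1 - expected)


def rater_offsets(ratings):
    """Mean signed difference between each rater and the per-sample group mean."""
    raters = list(ratings)
    n = len(ratings[raters[0]])
    sample_means = [mean(ratings[r][i] for r in raters) for i in range(n)]
    return {
        r: mean(ratings[r][i] - sample_means[i] for i in range(n))
        for r in raters
    }


# Hypothetical ratings for six shared samples on a 1-4 scale.
ratings = {
    "rater_a": [3, 2, 4, 3, 1, 2],
    "rater_b": [3, 2, 4, 2, 1, 2],
    "rater_c": [4, 3, 4, 3, 2, 3],
}

for r1, r2 in combinations(ratings, 2):
    print(r1, r2, round(cohen_kappa(ratings[r1], ratings[r2]), 2))
# rater_a rater_b 0.77
# rater_a rater_c 0.08
# rater_b rater_c -0.07

print({r: round(v, 2) for r, v in rater_offsets(ratings).items()})
# {'rater_a': -0.17, 'rater_b': -0.33, 'rater_c': 0.5}
```

In this made-up data, rater_c agrees poorly with both colleagues and rates about half a point above the group on average, which points to a consistent leniency drift rather than random noise. For ordinal scales, a weighted kappa that gives partial credit for near misses may be a better fit.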

Tip: Document any changes and the rationale for future audits.

- 7

Governance and documentation

Create a governance plan that covers who can modify rubrics, how changes are approved, and how results are recorded for auditability.

Tip: Maintain version control and publish revisions to stakeholders.

- 8

Roll out manager training

Deliver manager training to embed calibration into standard reviews. Provide quick-reference tools and ongoing support channels.

Tip: Schedule follow-up coaching and refresher sessions after initial rollout.

- 9

Monitor, gather feedback, and improve continuously

Track outcomes like consistency, fairness, and transparency over time. Collect feedback from reviewers and employees to refine the process.

Tip: Set quarterly check-ins to review calibration performance and adapt as needed.

Questions & Answers

What is calibration in job reviews?

Calibration is the process of aligning ratings across reviewers to ensure consistent interpretation of the rubric. It involves discussing sample reviews, resolving differences, and updating the rubric or training to reduce variability.

Calibration aligns ratings across reviewers to ensure consistency in evaluations.

How long does a typical calibration take?

A single calibration session can take a few hours, depending on the size of the sample and the complexity of the rubric. You’ll also need time for prep work and follow-up adjustments.

Most teams plan a half-day for a full calibration cycle, including prep and follow-up.

Can calibration improve promotions and development decisions?

Yes. When ratings are aligned, promotion and development decisions become more transparent and fair. Calibration helps ensure that high-potential employees are identified based on consistent criteria.

Calibration supports fairer promotion decisions by applying the same standards to everyone.

What if I see bias during calibration?

If bias appears, pause, re-examine the anchors, provide additional examples, and consider separate training focusing on bias awareness. Document the bias and the corrective steps taken.

If bias shows up, pause and adjust the process to address it.

Is calibration needed for all roles?

Calibration is valuable for roles with performance variability and where multiple managers assess similar work. It’s less critical for highly homogeneous roles but can still improve consistency.

It helps especially where many people rate similar work across teams.


Key Takeaways

- Establish a shared rubric and anchors.

- Use representative samples to test the rubric.

- Document decisions to enable audits and accountability.

- Run structured calibration sessions with a trained facilitator.

- Monitor outcomes and iterate on the process for continuous improvement.