Difference Between Calibration and Validation with Example

Explore the difference between calibration and validation through a worked example. Learn definitions, workflows, and practical guidelines for lab and field work to ensure measurement accuracy and reliability.

The difference between calibration and validation, in brief: calibration aligns an instrument's readings with a reference standard, while validation tests whether the whole measurement method performs acceptably on real samples. For instance, you calibrate a thermometer against fixed temperature points, then validate by comparing its readings against known samples in production. Together they support accuracy and reliability across conditions.

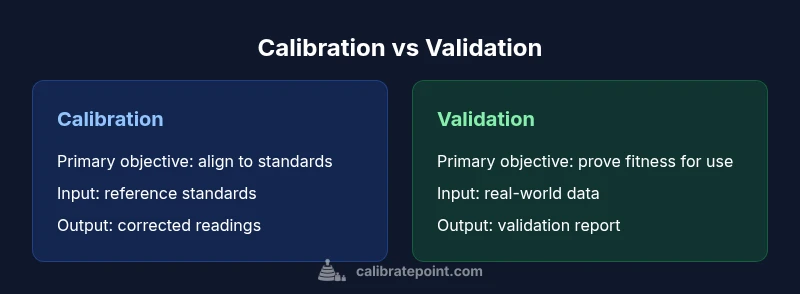

Core distinctions: calibration vs validation

At its core, the difference between calibration and validation lies in purpose and timing. Calibration is an adjustment process that ties instrument readings to a recognized reference so measurements are correct at the time of use. Validation, by contrast, is an assessment activity that asks: does the entire measurement method perform adequately in the intended environment and with real samples? In many industries, both steps are required to ensure accuracy, traceability, and regulatory compliance. According to Calibrate Point, understanding these roles helps teams design robust QA plans rather than treating calibration as a one-off fix. A well-documented approach makes drift easier to detect, improves decision confidence, and provides auditable evidence of performance.

This article integrates practical guidance with clear definitions, ensuring you can plan, execute, and document calibration and validation activities effectively across manufacturing, healthcare, and lab settings. The Calibrate Point team emphasizes that a coordinated approach minimizes gaps and accelerates audits, especially when regulatory expectations increase over time.

Definitions you can rely on

Calibration is the process of adjusting a device or system to align its output with a reference standard or known true value. The goal is to reduce systematic bias so measurements reflect the true quantity as closely as possible. Validation, on the other hand, evaluates whether the measurement process as a whole yields acceptable results under real-world conditions. Validation answers if the method is fit for its intended use and whether performance meets predefined criteria.

In practice, calibration fixes the instrument, while validation confirms the method and its application. This distinction matters for risk management, regulatory compliance, and long-term reliability of measurement systems. Calibrate Point notes that many teams mistakenly perform validation without first calibrating; validating with an uncalibrated instrument can mask drift and produce misleading results. By separating the activities, you gain traceability and stronger evidence for decision-making.

How calibration works in practice

Calibration involves selecting reference standards that cover the instrument's operating range, recording the instrument's raw output at each standard point, and calculating adjustments to align readings with true values. Once adjustments are applied, a calibration certificate is generated, documenting the reference sources, environmental conditions, and the corrected settings. Common methods include linear or nonlinear calibration curves, simple gain-and-offset (affine) corrections, and multivariate approaches for complex instruments. Maintaining traceability to national or international standards is critical, and all changes should be logged for future audits. Calibration is typically performed before use, after significant drift, or on a scheduled interval, depending on criticality and regulatory requirements.
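As an illustration of the linear case, here is a minimal sketch of fitting a gain-and-offset correction from paired readings. The function names and the data points are hypothetical and chosen only for demonstration; a real procedure would follow your own calibration method and reference certificates.

```python
from statistics import mean


def fit_linear_calibration(raw, reference):
    """Least-squares fit of: reference = gain * raw + offset."""
    if len(raw) != len(reference) or len(raw) < 2:
        raise ValueError("need at least two paired points")
    raw_mean, ref_mean = mean(raw), mean(reference)
    sxx = sum((x - raw_mean) ** 2 for x in raw)
    if sxx == 0:
        raise ValueError("raw readings must not all be identical")
    sxy = sum((x - raw_mean) * (y - ref_mean) for x, y in zip(raw, reference))
    gain = sxy / sxx
    offset = ref_mean - gain * raw_mean
    return gain, offset


def apply_calibration(reading, gain, offset):
    """Convert a raw instrument reading into a corrected value."""
    return gain * reading + offset


# Hypothetical data: reference standard values and the raw readings
# the instrument produced at each standard point.
reference_points = [0.0, 25.0, 50.0, 100.0]
raw_readings = [0.5, 25.75, 51.0, 101.5]

gain, offset = fit_linear_calibration(raw_readings, reference_points)
corrected = apply_calibration(51.0, gain, offset)
```

The fitted gain and offset are the kind of "corrected settings" that would be recorded on the calibration certificate, alongside the reference sources and environmental conditions.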

How validation works in practice

Validation tests the overall measurement process under conditions that mimic actual use. It requires a predefined validation plan with acceptance criteria, a representative data set, and objective metrics (such as accuracy, precision, and robustness). Validation can be prospective (before deployment) or retrospective (after deployment), and it often involves statistical analyses, hypothesis testing, and performance comparisons against a decision rule. The outcome is a validation report that documents whether the method meets specified criteria and what corrective actions are needed if it does not. Validation helps confirm that the end-to-end process remains trustworthy despite instrument drift or changing environments.
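A validation check against predefined acceptance criteria might be sketched as follows. The metrics shown (mean bias for accuracy, standard deviation of the errors for precision), the limits, and the sample values are assumptions for illustration; your validation plan defines the actual metrics and thresholds.

```python
from statistics import mean, stdev


def validate_method(measured, reference, max_bias, max_sd):
    """Compare measured values to reference values and apply a decision rule."""
    if len(measured) != len(reference) or len(measured) < 2:
        raise ValueError("need at least two paired results")
    errors = [m - r for m, r in zip(measured, reference)]
    bias = mean(errors)   # accuracy: average deviation from the reference
    spread = stdev(errors)  # precision: sample standard deviation of errors
    passed = abs(bias) <= max_bias and spread <= max_sd
    return {"bias": bias, "sd": spread, "passed": passed}


# Hypothetical results from representative samples (illustrative only).
measured_values = [20.1, 25.0, 30.2, 34.9]
reference_values = [20.0, 25.0, 30.0, 35.0]

report = validate_method(measured_values, reference_values,
                         max_bias=0.1, max_sd=0.2)
```

The returned dictionary holds the kind of summary that would feed a validation report: the metrics, and whether the method met the stated criteria.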

Example: temperature sensor calibration and validation

Consider a temperature sensor used in a pharmaceutical setting. Calibration uses an ice-water point and a dry-block standard to produce a reference curve; adjustments are applied so that sensor readings align with the standards at multiple points. Validation then tests the entire workflow by measuring representative samples in the actual production environment, comparing sensor outputs to an independent reference, and verifying that the process yields results within acceptable limits. This two-step approach demonstrates both the instrument's accuracy after calibration and the method's reliability in practice, providing strong evidence for quality control decisions.
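The two steps in this example can be sketched in a few lines. This is a simplified two-point version under stated assumptions: the raw readings, the 100.0 °C dry-block setpoint, the production samples, and the ±0.5 °C tolerance are all hypothetical values, not figures from any standard.

```python
def two_point_calibration(raw_low, ref_low, raw_high, ref_high):
    """Return (gain, offset) mapping raw readings onto the two references."""
    if raw_high == raw_low:
        raise ValueError("the two raw readings must differ")
    gain = (ref_high - ref_low) / (raw_high - raw_low)
    offset = ref_low - gain * raw_low
    return gain, offset


def correct(raw, gain, offset):
    """Apply the calibration correction to a raw sensor reading."""
    return gain * raw + offset


# Step 1: calibration (hypothetical readings, in degrees Celsius).
# The sensor reads 0.4 in the ice-water bath (reference 0.0) and
# 100.9 in the dry-block standard (assumed setpoint 100.0).
gain, offset = two_point_calibration(0.4, 0.0, 100.9, 100.0)

# Step 2: validation on production samples, each paired with an
# independent reference measurement: (raw sensor value, reference value).
samples = [(5.5, 5.0), (25.4, 25.0), (37.6, 37.0)]
tolerance = 0.5  # assumed acceptance limit in degrees Celsius

errors = [correct(raw, gain, offset) - ref for raw, ref in samples]
validation_passed = all(abs(e) <= tolerance for e in errors)
```

Note that the calibration data and the validation data come from different sources: the reference standards define the correction, while independent production samples test whether the corrected workflow holds up in use.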

Calibration workflows: pre-use and routine checks

A robust calibration workflow begins with a documented scope, including the operating range and environmental conditions. Pre-use calibration ensures accuracy before any measurement tasks. Routine checks monitor drift or bias over time and trigger recalibration if a threshold is exceeded. Key components include traceable standards, calibration certificates, data capture templates, and version-controlled adjustment records. It is crucial to maintain environmental controls and to verify that all equipment is operating within specification before and after calibration.
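A routine drift check could look like the sketch below: measure a check standard several times, compare the mean to its known value, and flag recalibration when the deviation exceeds a limit. The function name, the readings, and the 0.1-unit limit are hypothetical.

```python
from statistics import mean


def needs_recalibration(check_readings, reference_value, drift_limit):
    """Return (flag, drift), where drift is the mean deviation from the reference."""
    if not check_readings:
        raise ValueError("at least one check reading is required")
    drift = mean(check_readings) - reference_value
    return abs(drift) > drift_limit, drift


# Hypothetical routine checks against a 100.0-unit check standard.
ok_flag, ok_drift = needs_recalibration([100.02, 100.05, 100.03], 100.0, 0.1)
bad_flag, bad_drift = needs_recalibration([100.15, 100.20, 100.18], 100.0, 0.1)
```

Logging the drift value at every check, not only the pass or fail flag, gives you a trend that can inform how long the calibration interval should be.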

Validation workflows: post-production and ongoing verification

Validation workflows assess the entire measurement process, not just a single instrument. A validation plan should specify sample types, data collection procedures, acceptance criteria, and a clear decision framework. Ongoing verification involves periodic or continuous checks to confirm performance remains within limits. The validation report should summarize metrics, identify risks, and propose remediation if results fall outside acceptance criteria. In regulated industries, validation data supports compliance and helps with audits by showing that the method remains fit for purpose.

Choosing the right approach for your process

Decision factors for calibration versus validation include risk level, regulatory environment, and the intended use of results. High-risk processes with strict tolerances often require both calibration and validation, with calibration addressing measurement accuracy and validation proving process suitability. Moderate-risk environments may need periodic calibration accompanied by selective validation checks. For new methods, validation should precede routine use, while well-established methods may rely more on calibration maintenance with periodic validation to confirm continued suitability.

Documentation and records: why they matter

Documentation is the backbone of quality control. Calibration certificates provide traceability to reference standards and show the measurement system was properly adjusted. Validation reports document method performance, acceptance criteria, and evidence that the process remains fit for purpose. Calibrate Point analysis shows that thorough record keeping reduces audit findings, supports regulatory compliance, and enhances confidence in decision-making when results are challenged or reviewed by third parties.

Common mistakes to avoid

Common mistakes include treating calibration as a one-time fix, neglecting traceability, and performing validation without a clearly defined plan. Another pitfall is using non-representative reference standards or failing to document environmental conditions. Finally, teams sometimes skip updating documentation after adjustments or validation, which undermines traceability and long-term reliability.

Practical checklists you can implement today

- Define the scope, range, and environmental conditions for calibration and validation.

- Use traceable reference standards and maintain calibration certificates.

- Create a validation plan with acceptance criteria and predefined data sets.

- Document all adjustments, decisions, and results in a centralized system.

- Schedule periodic checks and retrain staff on procedures to ensure consistency.

Decision matrix: when to calibrate vs validate

When the instrument is new or has drifted beyond tolerance, calibration should be performed to correct bias. When the measurement process is used in real-world settings or after significant changes, validation should be conducted to prove fit-for-use. For high-risk processes, perform both in sequence: calibration first, then validation, and maintain ongoing verification thereafter.
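The rules in this section can be written down as a small helper, which is sometimes useful for embedding the decision in a QA checklist tool. This is only one possible encoding of the paragraph above; the function and parameter names are hypothetical.

```python
def plan_activities(instrument_is_new, drift_exceeds_tolerance,
                    process_changed, high_risk):
    """Return the ordered list of activities suggested by the decision rules."""
    steps = []
    # Calibrate to correct bias for new or drifted instruments,
    # and always for high-risk processes.
    if instrument_is_new or drift_exceeds_tolerance or high_risk:
        steps.append("calibrate")
    # Validate after significant changes, and always for high-risk processes.
    if process_changed or high_risk:
        steps.append("validate")
    # High-risk processes keep being verified after validation.
    if high_risk:
        steps.append("ongoing verification")
    return steps


drifted_only = plan_activities(False, True, False, False)
changed_only = plan_activities(False, False, True, False)
high_risk_plan = plan_activities(False, False, False, True)
```

Because calibration is always appended before validation, the returned order matches the recommended sequence.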

Comparison

| Feature | Calibration | Validation |
|---|---|---|
| Primary objective | Align readings with reference standards to remove bias | Demonstrate that the measurement process performs acceptably in real use |
| When performed | Before use or after drift is detected; routine follow-up | When deploying a method or after process changes; ongoing checks |
| Input data | Known reference standards and traceable artifacts | Real-world samples, environmental conditions, and operational data |
| Output | Adjusted instrument readings and calibration certificate | Validation metrics, report, and evidence of fitness for purpose |
| Typical methods | Adjustment, curve fitting, and recalibration | Performance testing, hypothesis testing, and criteria-based evaluation |
| Documentation | Calibration certificates with traceability | Validation reports with criteria and conclusions |
| Best for | High-precision instruments and tight tolerances | Assessing new methods or systems under real conditions |

Pros

- Calibration reduces bias and improves measurement accuracy

- Validation provides evidence that the process is fit for purpose

- Together, they increase audit readiness and regulatory confidence

- Clear documentation supports traceability and accountability

Cons

- Requires dedicated time and resources for both activities

- Can extend project timelines if not planned early

- Ongoing validation may reveal gaps necessitating redesigns

- Maintaining records across systems can be complex

Calibration and validation are complementary; use both for robust, auditable measurement systems

Calibration fixes bias by aligning readings to a standard. Validation confirms the method works in real conditions. Together, they provide reliable results and support regulatory compliance.

Questions & Answers

What is the key difference between calibration and validation?

Calibration adjusts the instrument to align with a reference standard, reducing bias. Validation assesses whether the measurement process works as intended in real-world conditions.

Calibration fixes bias by aligning readings to a standard, while validation tests the overall method in real-world use.

Can validation replace calibration?

No. Validation verifies performance, but it does not correct systematic bias in the instrument. Calibration should occur first to ensure accuracy before validation checks reliability.

Validation tests performance, but calibration fixes bias first.

When should calibration be performed?

Calibration is typically done before use, after drift, or on a scheduled basis depending on risk, regulatory requirements, and instrument criticality.

Calibrate before use or after drift, following your schedule.

What documents are produced in calibration and validation?

Calibration produces certificates showing traceability to standards; validation yields reports detailing acceptance criteria, results, and conclusions about fitness for purpose.

Calibration certificates and validation reports document performance and traceability.

Is calibration always necessary?

Not always, but most measurement systems benefit from calibration to ensure accuracy. The necessity depends on tolerance, use case, and regulatory expectations.

Calibration is often needed, depending on accuracy needs and regulations.

How do you choose between calibration and validation for a new method?

You need both. Start by writing a validation plan that defines fitness for purpose and acceptance criteria, calibrate the instruments so measured values are accurate and traceable, and then run the validation before routine use.

Plan the validation first, calibrate the instruments, then validate before routine use.

How often should validation be repeated?

Frequency depends on risk, changes in process, environmental conditions, and regulatory needs. Reassess when there are process changes or drift is detected.

Repeat validation when processes change or drift occurs.

Can you perform calibration and validation in one day?

Yes, for simple systems with straightforward procedures, but ensure sufficient time for data collection, analysis, and documentation.

You can, if procedures are simple and time is allowed for analysis and records.

Key Takeaways

- Define clear objectives for calibration and validation

- Use traceable standards and documented criteria

- Calibrate before validating so uncorrected bias does not skew validation results

- Maintain thorough records for audits and traceability

- Apply both processes to high-risk measurements