VRChat Calibrate FBT: The Complete Step-by-Step Guide

Calibrate FBT in VRChat with this practical, step-by-step guide. Learn setup, tools, tuning, and verification to achieve accurate avatar expressions across hardware and lighting variations in 2026.

You will learn how to calibrate FBT for VRChat, ensuring facial expressions map accurately to your avatar. This guide covers essential tools, setup steps, and validation checks to achieve reliable expression tracking. We’ll also discuss calibration across different hardware, software versions, and room lighting, plus how to verify results with in-game toggles and test avatars. By following the steps, you’ll minimize expression variance and improve avatar realism.

What is FBT and Why Calibrate for VRChat

In this guide, FBT refers to facial tracking: animating a VRChat avatar's face in real time based on your own facial movements. (Elsewhere in the VRChat community, FBT usually stands for full-body tracking, so check which meaning a given tip uses.) Calibrating FBT ensures expressions mirror your own as you speak, smile, raise your eyebrows, or widen your eyes. To calibrate reliably, you align signals from your headset or external tracker with the avatar's facial rig, account for your facial geometry, and minimize drift across sessions. A well-calibrated setup reduces jitter and latency, which boosts immersion for you and others in social VR. Establish a neutral baseline expression, map core facial controls to avatar controls, and document adjustments for repeatable results. Searching for the phrase "vrchat calibrate fbt" is one way to find community tips. The goal is a repeatable, practical workflow rather than perfection on day one, with consistent avatar behavior across rooms, games, and lighting.

Required Tools and Environment

Before you begin, gather the essentials and prepare your environment for FBT calibration. You’ll need a VR headset with built-in facial tracking or a compatible external facial tracker, a computer or standalone headset capable of running VRChat, and the latest VRChat client. A reference avatar or a neutral, expression-ready avatar will help you map signals accurately. Set up in a quiet space with stable lighting to reduce tracking noise, and keep a notebook or digital document to log calibration settings and observations. Optional items such as a small tripod, a secondary monitor for real-time previews, or a screenshot tool can speed up verification. Organizing tools and space in advance minimizes interruptions during the calibration session.

Calibration Goals and Metrics

The core goal of FBT calibration in VRChat is to translate raw tracking data into believable, responsive avatar expressions. Set clear metrics for accuracy, latency, and stability, and test across common expressions such as neutral, smile, eyebrow raise, and lip movement. Track drift by re-running a baseline test after a few minutes of use and comparing the results to your initial baseline. Document tolerance levels for each facial feature and aim for consistent results across sessions rather than a single perfect capture. Pay attention to head pose and jaw movement too, since alignment errors in these areas can degrade overall realism. In practice, you’ll balance sensitivity against noise rejection to avoid twitchy or delayed expressions while staying robust under varied lighting.
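The drift check described above can be sketched as a comparison of two sets of neutral-face readings. This is a minimal illustration, not part of any VRChat or tracker API: the feature names, the 0.0-1.0 value scale, and the tolerance numbers are all assumptions you would replace with the values you log yourself.

```python
from typing import Dict


def drift_report(baseline: Dict[str, float],
                 current: Dict[str, float],
                 tolerances: Dict[str, float]) -> Dict[str, float]:
    """Return the features whose drift from the baseline exceeds their tolerance."""
    exceeded = {}
    for feature, base_value in baseline.items():
        drift = abs(current[feature] - base_value)
        if drift > tolerances[feature]:
            exceeded[feature] = round(drift, 3)
    return exceeded


# Hypothetical neutral-face readings on an assumed 0.0-1.0 scale.
baseline = {"jaw_open": 0.02, "smile": 0.05, "brow_raise": 0.10}
after_ten_minutes = {"jaw_open": 0.03, "smile": 0.12, "brow_raise": 0.11}
tolerances = {"jaw_open": 0.05, "smile": 0.05, "brow_raise": 0.05}

# Only "smile" has moved more than its tolerance, so only it is reported.
print(drift_report(baseline, after_ten_minutes, tolerances))
```

Keeping one tolerance per feature, rather than a single global threshold, matches the advice to document tolerance levels for each facial feature.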

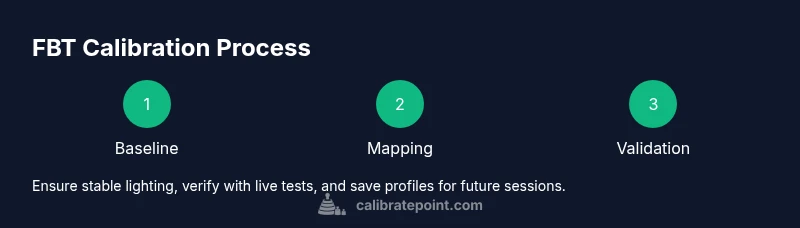

Calibration Goals and Step Overview

This section outlines the overall approach to FBT calibration before you dive into the step-by-step actions. Start with a precise baseline, then map key facial movements to avatar rig controls. Use live testing in VRChat to verify alignment and adjust iteratively. The plan includes neutral baseline capture, lip-sync calibration, brow and eye-motion calibration, and jaw/cheek mapping. Finally, you’ll validate results in different scenes and lighting conditions to ensure stability across contexts. Following a structured overview helps maintain consistency, even when hardware or software versions change. The objective is results you can reproduce in future sessions.

Common Pitfalls and Troubleshooting

Even seasoned users hit common snags when calibrating FBT for VRChat. Poor lighting, dramatic changes in distance from the camera, or outdated drivers can introduce noise that masquerades as expression. If results drift, revisit the neutral baseline and re-map essential controls. Inconsistent avatar rigs or mismatched versions between hardware firmware and software can cause persistent misalignment. Keep logs of each calibration attempt so you can identify patterns, such as specific expressions that always lag, and target those areas first. Finally, avoid cranking up sensitivity beyond reasonable limits, which often magnifies jitter instead of improving accuracy.
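To see why raising sensitivity magnifies jitter, consider a small sketch that applies a gain and an exponential moving average to the same noisy signal. The sample values, the gain of 3.0, and the smoothing factor of 0.3 are assumptions chosen for illustration; real trackers and tracking software expose their own settings, which may work differently.

```python
from typing import List


def apply_gain(samples: List[float], gain: float) -> List[float]:
    """Scale each sample by a sensitivity gain, clamped to the 0.0-1.0 range."""
    return [min(1.0, max(0.0, s * gain)) for s in samples]


def smooth(samples: List[float], alpha: float) -> List[float]:
    """Exponential moving average: lower alpha means more smoothing and more lag."""
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = []
    previous = None
    for value in samples:
        previous = value if previous is None else alpha * value + (1 - alpha) * previous
        smoothed.append(previous)
    return smoothed


def peak_to_peak(samples: List[float]) -> float:
    """Size of the wobble in a signal that should be steady."""
    return max(samples) - min(samples)


# Hypothetical readings of a face held still: the wobble is pure noise.
noisy_neutral = [0.10, 0.14, 0.09, 0.13, 0.10, 0.15]

print("raw jitter:     ", round(peak_to_peak(noisy_neutral), 3))
print("with 3x gain:   ", round(peak_to_peak(apply_gain(noisy_neutral, 3.0)), 3))
print("with smoothing: ", round(peak_to_peak(smooth(noisy_neutral, 0.3)), 3))
```

The gain enlarges the noise along with the expression, while smoothing reduces it at the cost of slower response, which is the sensitivity trade-off described above.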

Validation and Verification in VRChat

With calibration configured, test in VRChat using a test avatar in multiple rooms and lighting scenarios. Watch for latency between your facial gestures and the avatar's response, and confirm that expressions stay stable when you rotate your head. Use in-game recording or external capture tools to review frame-by-frame alignment and confirm lip-sync and eye-gaze accuracy. If you spot discrepancies, re-check control mappings and baseline data, then perform a short retest. Consistent validation across repeat sessions strengthens confidence in your calibration results.
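If you extract per-frame values from a recording (for example, how open your mouth is versus how open the avatar's mouth is), the latency can be estimated by finding the frame shift that best aligns the two curves. The sketch below assumes you already have both series as lists of numbers on the same scale and at the same frame rate; how you extract them is outside its scope, and the sample data is made up.

```python
from typing import List


def estimate_lag_frames(gesture: List[float],
                        response: List[float],
                        max_lag: int) -> int:
    """Return the shift (in frames) that minimizes mean squared error
    between the gesture curve and the delayed avatar response."""
    best_lag, best_error = 0, float("inf")
    for lag in range(max_lag + 1):
        pairs = list(zip(gesture, response[lag:]))
        if not pairs:
            break
        error = sum((g - r) ** 2 for g, r in pairs) / len(pairs)
        if error < best_error:
            best_lag, best_error = lag, error
    return best_lag


def frames_to_ms(frames: int, fps: float) -> float:
    """Convert a frame count to milliseconds at the given capture rate."""
    return frames * 1000.0 / fps


# Hypothetical mouth-open curves; the avatar follows two frames behind.
gesture = [0.0, 0.0, 0.5, 1.0, 0.5, 0.0, 0.0, 0.0, 0.0, 0.0]
response = [0.0, 0.0, 0.0, 0.0, 0.5, 1.0, 0.5, 0.0, 0.0, 0.0]

lag = estimate_lag_frames(gesture, response, max_lag=5)
print(lag, "frames =", round(frames_to_ms(lag, fps=60), 1), "ms at 60 fps")
```

Keep `max_lag` small relative to the clip length, because very large shifts compare only a few frames and can look like a good match by accident.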

Advanced Tips for Different Setups and Lighting

Different setups require tweaks to the calibration workflow. In bright rooms, facial tracking signals may saturate briefly; consider reducing exposure or re-baselining. In dim or variable lighting, rely more on intrinsic tracking signals and cache calibration data for quicker re-runs. When using an external tracker, synchronize it with the VRChat client and verify timestamps to prevent drift. For long sessions, recheck calibration every 15-30 minutes to catch gradual drift. Keeping a modular calibration profile for each avatar or scene lets you switch between them without starting from scratch.
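One way to keep profiles modular is to key them by avatar and lighting condition and to store the time of the last check alongside each one. The sketch below is a hypothetical structure for your own notes or tooling: the class, the key names, and the 15-minute default are illustrative assumptions, not a VRChat feature.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class CalibrationProfile:
    baseline: Dict[str, float]       # neutral readings per facial control
    last_checked_min: float = 0.0    # session time of the last check, in minutes


def needs_recheck(profile: CalibrationProfile,
                  now_min: float,
                  interval_min: float = 15.0) -> bool:
    """True once the chosen recheck interval has elapsed since the last check."""
    return (now_min - profile.last_checked_min) >= interval_min


def select_profile(profiles: Dict[Tuple[str, str], CalibrationProfile],
                   avatar: str,
                   lighting: str) -> CalibrationProfile:
    """Look up the stored profile for an avatar and lighting combination."""
    return profiles[(avatar, lighting)]


# Hypothetical profiles for one avatar under two lighting conditions.
profiles = {
    ("test_avatar", "bright"): CalibrationProfile({"smile": 0.05, "jaw_open": 0.02}),
    ("test_avatar", "dim"): CalibrationProfile({"smile": 0.08, "jaw_open": 0.03}),
}

active = select_profile(profiles, "test_avatar", "dim")
print(needs_recheck(active, now_min=10.0))  # False: under 15 minutes
print(needs_recheck(active, now_min=20.0))  # True: interval elapsed
```

After each recheck, update `last_checked_min` on the active profile so the next reminder is measured from that point.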

Tools & Materials

- VR headset with built-in FBT or compatible external facial tracker (Ensure firmware and drivers are up to date; enable facial tracking in device settings.)

- VRChat client, latest version (Verify compatibility with your hardware and avatar rig.)

- Reference or neutral avatar (A static expression baseline helps mapping accuracy.)

- Quiet, well-lit room (Minimize background noise and lighting swings.)

- Notepad or digital log (Record baseline values and configurations for each session.)

- Secondary monitor or screen capture tool (Optional for real-time previews and documentation.)

- Capture software, optional (Useful for frame-by-frame review of expressions.)

Steps

Estimated time: 60-90 minutes

- 1

Prepare hardware and software

Power up your VR headset and computer. Open the VRChat client and confirm you’re on the latest build. Install any required drivers for your facial tracking hardware and ensure the FBT features are enabled in software settings.

Tip: Restart devices after enabling FBT to ensure changes take effect.

- 2

Set a neutral baseline

Pose a neutral, relaxed face and capture a baseline mapping for each primary facial control (eyes, brows, mouth, jaw). This baseline anchors subsequent adjustments and reduces drift across expressions.

Tip: Keep your jaw relaxed and eyes half-open to avoid initial bias in the baseline.

- 3

Calibrate lip shapes and mouth movement

Map mouth open/close, smile, and lip corner movements to your avatar’s mouth rig. Use a few clear phoneme-like positions to verify lip-sync accuracy in the avatar’s face.

Tip: Test with common phrases to reveal timing mismatches.

- 4

Calibrate eyebrow and eye motion

Align brow raise, brow furrow, and eye gaze to the corresponding controls. Eye tracking can be subtle; ensure gaze direction remains natural when turning your head.

Tip: Avoid over-sensitivity that causes jitter during speech.

- 5

Calibrate head pose and jaw motion

Sync head tilts and jaw rotations with avatar head pivots. Check that opening the mouth aligns with jaw hinge movement for realistic speaking.

Tip: Record a short speaking clip to compare timing with lip-sync.

- 6

Run live VRChat test with test avatar

Enter a VRChat session with a test avatar to observe real-time expression mapping. Validate all expressions across sudden movements and quick facial changes.

Tip: Ask a friend to test varying expressions for quicker feedback.

- 7

Fine-tune for lighting and distance

Revisit baseline and mappings if lighting or distance to the camera changes. Save incremental profiles so you can switch setups without re-calibrating from scratch.

Tip: Create separate profiles for near and far camera distances.

- 8

Save, back up, and document

Export calibration data and back up profiles. Document the exact steps, hardware versions, and settings used for future reference.

Tip: Store a copy in cloud or external drive for redundancy.
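The baseline, mapping, and backup steps above can be sketched in a few functions. This is a minimal illustration under stated assumptions: tracker readings arrive as dictionaries of control names to values on a 0.0-1.0 scale, and the control names, JSON layout, and helper names are all hypothetical rather than taken from any tracker's or VRChat's actual export format.

```python
import json
from pathlib import Path
from statistics import mean
from typing import Dict, List


def capture_baseline(neutral_frames: List[Dict[str, float]]) -> Dict[str, float]:
    """Average several neutral-face readings into one baseline per control."""
    controls = neutral_frames[0].keys()
    return {c: mean(frame[c] for frame in neutral_frames) for c in controls}


def normalize(raw: float, neutral: float, maximum: float = 1.0) -> float:
    """Rescale a reading so the neutral value maps to 0.0 and the maximum to 1.0."""
    span = maximum - neutral
    if span <= 0:
        return 0.0
    return min(1.0, max(0.0, (raw - neutral) / span))


def save_profile(path: Path, baseline: Dict[str, float], notes: Dict[str, str]) -> None:
    """Write the baseline and free-form notes (hardware, versions) as JSON."""
    path.write_text(json.dumps({"baseline": baseline, "notes": notes}, indent=2))


def load_profile(path: Path) -> dict:
    """Read a profile previously written by save_profile."""
    return json.loads(path.read_text())


# Hypothetical neutral-face readings captured a moment apart.
frames = [
    {"jaw_open": 0.02, "smile": 0.04},
    {"jaw_open": 0.04, "smile": 0.06},
]
baseline = capture_baseline(frames)
print(baseline)
print(normalize(0.515, baseline["jaw_open"]))  # a half-open jaw after rescaling
```

To back up a session, call `save_profile(Path("fbt_profile.json"), baseline, {"headset": "your model", "date": "session date"})` and copy the resulting file to cloud or external storage; the file name and note fields here are placeholders.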

Questions & Answers

What is FBT in VRChat and why is calibration important?

In this guide, FBT refers to facial tracking, which drives avatar expressions based on your facial movements (elsewhere in the VRChat community, the abbreviation usually means full-body tracking). Calibration aligns hardware signals with the avatar rig, improving accuracy, reducing lag, and preventing drift across sessions.

FBT uses facial tracking to drive expressions. Calibration aligns hardware with the avatar to improve accuracy and reduce drift.

Do I need specialized hardware to calibrate FBT in VRChat?

No specialized hardware is required beyond a VR headset with built-in facial tracking or a compatible external tracker. Compatibility with your VRChat avatar and software is essential, and keeping drivers up to date helps.

A compatible headset or external tracker is enough, but you should ensure it works with your VRChat version.

How long does VRChat FBT calibration take?

A thorough calibration typically takes 60-90 minutes, including setup, neutral baseline capture, mapping, and live testing. Time can vary with hardware diversity and room lighting.

Most calibrations take about an hour, depending on hardware and lighting.

What if expressions still look off after calibration?

Review the neutral baseline, re-map key controls, and re-test in VRChat. Sometimes slight adjustments to sensitivity or re-baselining can fix persistent mismatches.

If it looks off, re-check the baseline and mappings and test again in VRChat.

Are there privacy concerns with facial tracking in VRChat?

Facial tracking data is processed by the headset/software. Follow best practices for data privacy, keep software updated, and review any data-sharing settings in VRChat.

Privacy depends on the hardware and software; adjust settings to minimize data sharing.

Where can I find more resources on FBT calibration for VRChat?

Look for community guides in VRChat forums and the official VRChat documentation. You can also search for "vrchat calibrate fbt" and related calibration resources.

Check VRChat communities and official docs for more guides and examples.

Key Takeaways

- Establish a repeatable neutral baseline.

- Map core facial controls to avatar rigs carefully.

- Validate in multiple VRChat scenes for consistency.

- Back up calibration profiles after each successful run.