Accuracy vs Calibration: Distinguishing Roles in Practice

Explore how accuracy and calibration differ, why both matter for reliable measurements, and practical steps to evaluate and apply them in real-world calibrations.

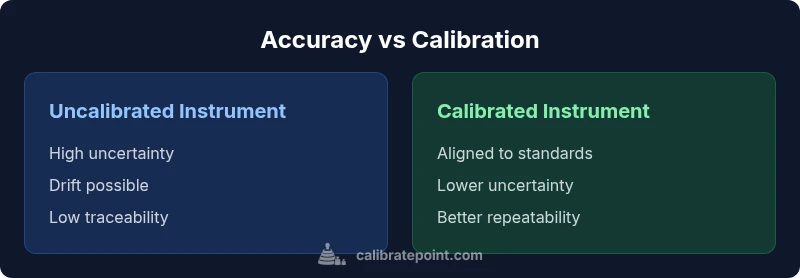

Accuracy and calibration are related but not interchangeable. Calibration aligns a device's readings with a known standard, while accuracy describes how close those readings are to the true value. In practice, both affect measurement quality, lab workflows, and field work. This comparison clarifies definitions, uses, and limitations to help DIYers and professionals choose proper calibration methods.

What accuracy vs calibration means

Accuracy and calibration are often used interchangeably, but they describe different aspects of measurement quality. In simple terms, accuracy is closeness to the true value, while calibration is the process that adjusts or verifies a device against a reference standard. According to Calibrate Point, understanding this distinction helps you avoid aiming calibration activities at the wrong problem. In practice, you assess accuracy by comparing instrument readings to a trusted reference. Calibration, on the other hand, may involve establishing an adjustment factor, replacing a sensor, or documenting traceability to national or international standards. The result is a known reference point that reduces bias and makes results comparable across instruments and over time.

Calibration does not guarantee accuracy forever, however: environmental factors, wear, and aging can cause readings to drift afterward. Teams should therefore keep the concepts separate: calibration is a procedure that improves or verifies measurement alignment, and accuracy is an outcome that expresses how close readings are to the truth. For DIY enthusiasts and technicians, this means diagnosing whether you face a bias that calibration can correct or a discrepancy that persists after calibration (inherent measurement inaccuracy). Framing problems in these terms helps you select the right tools, references, and procedures to maintain measurement quality across your workflow.
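
As a rough illustration of that diagnosis, the sketch below separates a set of repeated readings into a systematic part (bias) and a random part (spread). The function name `summarize_error` and the readings are hypothetical, chosen only to make the arithmetic easy to follow.

```python
from statistics import mean, stdev

def summarize_error(readings, reference):
    """Return (bias, spread) for repeated readings of one reference value."""
    # Bias: average signed error, the systematic part calibration can correct.
    bias = mean(r - reference for r in readings)
    # Spread: sample standard deviation, the random part calibration cannot remove.
    spread = stdev(readings)
    return bias, spread

# Hypothetical data: five readings of a 100.0-unit reference.
readings = [100.4, 100.6, 100.5, 100.3, 100.7]
bias, spread = summarize_error(readings, reference=100.0)
print(f"bias={bias:.2f}, spread={spread:.2f}")
```

With these numbers the bias is about 0.5 units and the spread about 0.16 units. A large bias with a small spread points toward calibration; a large spread points toward noise or instrument limits that calibration alone is unlikely to fix.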

Why the distinction matters in practice

In real-world workflows, confusing accuracy with calibration leads to misallocated resources and inconsistent data. For example, you might keep adjusting an instrument when the real problem is a failing sensor that should be replaced, not calibrated. Conversely, a well-calibrated instrument that still produces scattered results shows the difference between a fixed bias, which calibration can correct, and random error, which it cannot. Understanding the distinction helps you set realistic performance criteria, select suitable references, and design validation checks that align with risk. Across labs, workshops, and field environments, accuracy reflects the quality of a measurement result, while calibration provides the traceability and bias control that make those results credible to others. The Calibrate Point team notes that a systematic approach (defining what “true value” means for each measurement context, establishing a reference standard, and documenting the calibration history) improves decision quality and audit readiness.

How calibration changes reported accuracy

Calibration can change what you report as accuracy by removing known biases or tightening the instrument's uncertainty budget. When a device is calibrated, you replace or adjust parameters so readings align with a reference standard. The reported accuracy then reflects residual errors after calibration, i.e., the combination of instrument noise, environmental effects, and the limits of the reference. In many cases, calibration reduces bias but cannot eliminate random error. The distinction remains important for uncertainty budgets used in measurement analysis and for regulatory compliance. For practical teams, calibrating tools with documented traceability allows you to claim measurement confidence to customers, auditors, and internal stakeholders. It also means you can compare performance across instruments under consistent reference conditions. As you track calibration history, you build a lineage that supports ongoing improvement and accountability.
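
The effect described above can be sketched with a single-point offset correction. This is a simplified illustration, not a full calibration procedure: the helper names `offset_correction` and `residual_bias` and all readings are assumptions made up for the example.

```python
from statistics import mean

def offset_correction(check_readings, reference):
    """Additive correction that zeroes the mean error at one reference point."""
    return reference - mean(check_readings)

def residual_bias(readings, reference, correction):
    """Mean error that remains after the correction is applied."""
    return mean(r + correction for r in readings) - reference

# Hypothetical data for a 50.0-unit reference.
calibration_run = [50.8, 51.1, 50.9, 51.2, 51.0]  # taken during calibration
later_run = [50.9, 51.3, 51.0, 50.8, 51.1]        # taken some time afterwards

correction = offset_correction(calibration_run, reference=50.0)
remaining = residual_bias(later_run, reference=50.0, correction=correction)
print(f"correction={correction:+.2f}, residual bias={remaining:+.2f}")
```

Here the correction is about -1.0 units, and the later run still shows a residual bias of roughly +0.02 units. The scatter between individual readings is untouched by the correction, which is why the reported accuracy after calibration reflects residual errors rather than zero error.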

Common mistakes and myths

- Myth: Calibration solves all accuracy problems. Reality: Calibration reduces bias within a defined range but cannot fix random error or fundamental sensor limitations.

- Myth: If it reads correctly after calibration, it will stay perfect forever. Reality: Drift, wear, and environmental change require regular checks and re-calibration.

- Myth: Calibration is optional for non-critical tasks. Reality: Even non-critical measurements benefit from traceability and documented procedures.

- Myth: Calibration is only for formal labs. Reality: Field-calibration procedures, portable references, and on-site checks are common in many industries.

Methods to assess accuracy in the field

Assessing accuracy in practical settings requires a structured approach. Start by defining what you consider the true value or reference for your specific measurement. Select a calibrated reference artifact or standard that mirrors real-world conditions. Perform a controlled comparison between the instrument under test and the reference across the measurement range of interest. Quantify the difference, then build an uncertainty budget that accounts for repeatability, environmental factors, and reference quality. Document the results, including any observed drift and the calibration reference used. Repeat the checks at planned intervals to monitor stability. For handheld instruments, this often means quick cross-checks against a known standard, followed by a full calibration when discrepancies exceed predefined thresholds. The goal is a defensible, auditable record that supports decisions and quality claims.
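
One common way to combine the contributions named above is a root-sum-square of standard uncertainties, which assumes the components are uncorrelated. The sketch below follows that convention; the component values and the coverage factor of 2 are illustrative assumptions, not figures from any particular instrument.

```python
import math

def combined_uncertainty(components):
    """Root-sum-square of standard uncertainties, assuming they are uncorrelated."""
    return math.sqrt(sum(u ** 2 for u in components.values()))

# Hypothetical standard uncertainties, all in the same measurement unit.
budget = {
    "repeatability": 0.03,  # scatter of repeated readings
    "environment": 0.04,    # e.g. temperature effects
    "reference": 0.12,      # uncertainty of the reference standard itself
}

u_c = combined_uncertainty(budget)
expanded = 2 * u_c  # coverage factor k = 2, a common but assumed choice
print(f"combined={u_c:.2f}, expanded (k=2)={expanded:.2f}")
```

In this example the reference dominates the budget, so improving repeatability would barely change the result. That kind of observation is often the most useful output of a budget.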

Calibration strategies across domains

Calibration strategies vary by context, but the core principles remain stable: establish clear performance criteria, anchor measurements to traceable references, and maintain a documented calibration history. In laboratory settings, multi-point calibrations against accredited standards provide the most robust accuracy assessments. In manufacturing, in-line calibrations and continuous monitoring often complement periodic formal calibrations. In field instrumentation, portable references and simplified check standards enable timely verification without disrupting production. Regardless of domain, choose calibration methods that align with your risk tolerance, required uncertainty, and regulatory needs. The aim is to minimize bias and quantify residual uncertainty in a transparent, repeatable way.
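
A multi-point calibration is often summarized as a fitted curve that maps raw readings onto the reference scale. The sketch below uses an ordinary least-squares straight line, which is only one possible model and assumes the instrument's response is close to linear. The data points and the names `fit_line` and `corrected` are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Hypothetical five-point calibration: instrument readings vs. reference values.
instrument = [0.5, 25.75, 51.0, 76.25, 101.5]
reference = [0.0, 25.0, 50.0, 75.0, 100.0]

slope, intercept = fit_line(instrument, reference)

def corrected(reading):
    """Map a raw reading onto the reference scale using the fitted line."""
    return slope * reading + intercept

print(f"raw 51.00 -> corrected {corrected(51.0):.2f}")
```

A straight line captures both an offset and a gain error, which a single-point check cannot distinguish. If the residuals from the fit show a clear pattern, a linear model is probably not adequate for that instrument.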

When to calibrate and how often

Calibration should be scheduled based on risk, usage frequency, and environmental exposure. High-risk measurements, regulatory requirements, and instruments with drift-prone components demand shorter intervals. A pragmatic approach combines a baseline calibration after installation, periodic checks during operation, and an off-cycle calibration when drift or bias exceeds predefined limits. If you cannot quantify drift confidently, implement more frequent checks. Document the entire cycle (calibration date, reference standard, method, and acceptance criteria) to enable audits and accountability. For many professionals, a risk-based calibration plan with tiered intervals, based on instrument criticality and historical performance, yields the best balance of cost, downtime, and measurement integrity.
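
The off-cycle trigger described above can be written as a simple rule applied to each periodic check. This is a minimal sketch under stated assumptions: the function name `interval_action`, the three outcome labels, and the 80% warning threshold are all hypothetical and should be replaced by your own acceptance criteria.

```python
def interval_action(observed_error, tolerance, warning_fraction=0.8):
    """Suggest an action from the error seen at a periodic check.

    observed_error: reading minus reference at the check point.
    tolerance: acceptance limit for the absolute error.
    warning_fraction: share of the tolerance that triggers closer monitoring
                      (an assumed policy value, not a standard).
    """
    magnitude = abs(observed_error)
    if magnitude >= tolerance:
        return "calibrate_now"      # out of tolerance: off-cycle calibration
    if magnitude >= warning_fraction * tolerance:
        return "shorten_interval"   # close to the limit: check more often
    return "keep_interval"          # comfortably inside the limit

for error in (0.06, -0.045, 0.01):
    print(error, interval_action(error, tolerance=0.05))
```

Logging each check result alongside the returned action gives you the historical performance data that a tiered, risk-based interval plan depends on.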

Cost, effort, and risk management

Investing in calibration has upfront and ongoing costs, but it pays off through reduced measurement risk and avoidance of non-compliant outcomes. When planning calibration, quantify expected downtime, labor, and reference materials against the potential cost of incorrect decisions, rejected products, or safety issues. A robust calibration program includes supplier qualification, traceability of standards, documented procedures, and regular proficiency checks for staff. While some teams may defer calibration for non-critical tasks, risk-based reviews help determine where calibration investments yield the largest benefits. Remember that the goal is not perfection but predictable, well-documented performance that can be trusted by your team and clients.
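
The comparison described above can be reduced to simple arithmetic: the cost of one calibration against the expected loss from an undetected measurement problem. The sketch below is deliberately crude, and every figure in it (rates, probability, failure cost) is a made-up placeholder rather than real data.

```python
def calibration_cost(downtime_hours, downtime_rate, labor, materials):
    """Total cost of one calibration event."""
    return downtime_hours * downtime_rate + labor + materials

def expected_loss(failure_probability, failure_cost):
    """Probability-weighted cost of a bad measurement going undetected."""
    return failure_probability * failure_cost

# Hypothetical figures in a single currency unit.
cost = calibration_cost(downtime_hours=2, downtime_rate=150, labor=200, materials=50)
risk = expected_loss(failure_probability=0.05, failure_cost=20000)
worth_calibrating = risk > cost
print(f"cost={cost}, expected loss={risk:.0f}, calibrate={worth_calibrating}")
```

With these placeholder values the calibration costs 550 and the expected loss is about 1000, so calibrating is the cheaper option. The failure probability is usually the hardest input to estimate, so it is worth testing how sensitive the decision is to it.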

Putting it together: a decision framework

A practical framework for deciding when and how to assess accuracy and calibration follows three steps. Step 1: define what is being measured and how the true value is established in that context. Step 2: evaluate whether the measurement’s bias, drift, or uncertainty requires calibration, or whether it can be managed with validation checks and acceptance criteria alone. Step 3: implement a calibration plan with clear intervals, reference standards, and documentation. This approach keeps calibration purposeful and avoids unnecessary downtime while ensuring measurements remain within acceptable uncertainty bounds. By applying this framework, teams can build a transparent, auditable process that supports continuous improvement.

Comparison

| Feature | Uncalibrated instrument | Calibrated instrument |
|---|---|---|
| Measurement Uncertainty | High and variable | Low and controlled |
| Traceability | Limited or none | Explicit to reference standards |
| Drift Over Time | Unpredictable drift possible | Drift minimized or tracked |
| Maintenance Downtime | Often longer due to troubleshooting | Often shorter due to routine checks |
| Best For | Exploratory tasks with low precision needs | Critical measurements requiring regulatory compliance and audits |

Pros

- Improves measurement reliability and decision confidence

- Enables traceability and audits

- Reduces long-term risk of incorrect decisions

- Supports compliance with standards

Cons

- Initial calibration costs and downtime

- Requires skilled personnel to perform and interpret

- Potential for over-calibration if not needed

- Calibration drift requires ongoing maintenance

Calibrated instruments generally deliver more reliable measurements, especially for high-stakes tasks.

If your work depends on traceability and regulatory alignment, calibration should be routine. For non-critical tasks, basic validation may suffice.

Questions & Answers

What is the difference between accuracy and calibration?

Accuracy describes how close a measurement is to the true value. Calibration is a procedure that aligns readings with a reference standard and controls bias. They are related but serve different purposes in measurement quality.

Accuracy is how close you are to the true value; calibration is the process that aligns readings with a known standard.

Is calibration the same as adjusting a device?

Calibration often involves adjustment, verification, or replacement to align readings with a reference. However, calibration also includes documenting traceability and uncertainty. Adjustment is just one part of a broader calibration process.

Calibration can involve adjustment, but also verification and documentation of traceability.

How does traceability affect calibration?

Traceability links measurements to recognized standards. It enhances credibility, enables audits, and supports regulatory compliance. Without traceability, reported accuracy may be questioned.

Traceability ties measurements to standards and boosts credibility and audits.

How often should calibration be performed?

Calibration frequency depends on risk, usage, and environmental exposure. High-risk or drift-prone instruments require shorter intervals; low-risk devices may use longer intervals with periodic checks.

Set intervals based on risk and usage, and adjust as you observe drift.

Can a calibrated instrument still be inaccurate?

Yes. Calibration reduces bias within a defined range but does not eliminate all errors. Random noise and environmental factors can still affect accuracy after calibration.

Calibration reduces bias, but random errors can remain.

What is a calibration interval?

A calibration interval is the planned time between calibration checks or adjustments. It is based on risk, use, and observed drift, balancing cost with measurement integrity.

An interval is how often you calibrate based on risk and drift.

Key Takeaways

- Define measurement context before judging accuracy or calibration

- Calibration provides traceability and bias control

- Regular checks reduce drift and maintain credibility

- Balance calibration cost with risk and required uncertainty

- Document calibration history for audits and accountability