The Difference Between Calibration and Validation: A Practical Guide

Explore the difference between calibration and validation, why each matters, and how to apply them in quality systems. A practical, analytical guide that clarifies roles, workflows, and decision criteria for reliable measurement.

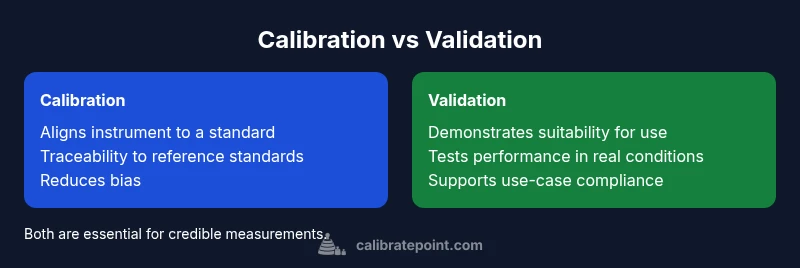

Calibration and validation are two essential but distinct activities in measurement integrity. Calibration aligns instruments to a traceable standard to reduce systematic error, while validation tests whether a process or instrument meets defined use-case requirements under real-world conditions. Understanding this distinction helps teams build accurate, actionable data workflows. See the full comparison for practical decision criteria and implementation steps.

The calibration and validation landscape

In quality systems, the terms calibration and validation are often used interchangeably, but they denote distinct activities with different purposes, and the difference between them is a useful lens for structuring measurement integrity. Calibration compares an instrument or sensor with a traceable reference standard so that systematic error can be quantified and minimized. Validation, by contrast, assesses whether a process, instrument, or system as deployed meets defined use cases and performance criteria under real-world conditions. According to Calibrate Point, understanding this distinction is essential to designing an evidence-based QA workflow. This section outlines the two concepts, their roles in a measurement program, and how organizations can map them onto their existing procedures, with the goal of ensuring traceability, repeatability, and confidence in the data used to drive decisions.

When teams discuss the difference between calibration and validation, they are really discussing two halves of a whole: accuracy and applicability. Calibration focuses on the instrument; validation focuses on the process and the results it produces. In practice, calibration is usually periodic, or event-driven when drift is detected, while validation occurs during commissioning, during requalification, or when a procedure changes.

The purpose and scope of calibration

Calibration is the ongoing process of comparing a measurement device's readings with a known reference standard and, where needed, adjusting or correcting the device so its output agrees with that standard. The scope includes establishing traceability to national or international standards, identifying and documenting sources of uncertainty, and maintaining an audit trail for each adjustment. In practice, calibration covers a wide range of devices, from laboratory balances and temperature sensors to pressure transducers and optical instruments. The goal is to minimize bias so that the instrument's output agrees with the accepted reference value within an agreed tolerance. Calibration results should be recorded, dated, and linked to the specific instrument's serial number, the environmental conditions, and the test method. For DIY enthusiasts and professionals alike, a well-executed calibration plan catches and corrects drift before it distorts results, keeps readings comparable over time, and underpins confident decision-making when data are used to drive adjustments or certifications.
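To make the compare-then-correct idea concrete, here is a minimal Python sketch. It assumes a linear instrument response and a few paired points (reference value, raw reading) from a multi-point calibration. The function names, the data, and the symmetric tolerance check are illustrative assumptions, not a prescribed procedure; a real calibration follows the documented method and uncertainty budget for the device.

```python
from statistics import mean


def fit_linear_calibration(reference, readings):
    """Least-squares fit of readings = gain * reference + offset (assumes a linear response)."""
    if len(reference) != len(readings) or len(reference) < 2:
        raise ValueError("need at least two paired (reference, reading) points")
    x_bar, y_bar = mean(reference), mean(readings)
    sxx = sum((x - x_bar) ** 2 for x in reference)
    if sxx == 0:
        raise ValueError("reference points must not all be equal")
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(reference, readings))
    gain = sxy / sxx
    offset = y_bar - gain * x_bar
    return gain, offset


def correct(reading, gain, offset):
    """Invert the fitted relation to estimate the reference value behind a raw reading."""
    return (reading - offset) / gain


def within_tolerance(reference, readings, tolerance):
    """True if every raw reading is within +/- tolerance of its reference value (hypothetical rule)."""
    return all(abs(y - x) <= tolerance for x, y in zip(reference, readings))


# Hypothetical three-point calibration data.
ref = [0.0, 50.0, 100.0]
raw = [0.4, 50.9, 101.4]
gain, offset = fit_linear_calibration(ref, raw)
print(f"gain={gain:.4f} offset={offset:.4f}")
print(within_tolerance(ref, raw, tolerance=1.0))
print(round(correct(101.4, gain, offset), 3))
```

With these made-up points the fit gives a gain of about 1.01 and an offset of about 0.4. Against a ±1.0 tolerance the raw readings fail (the error at 100 is 1.4), which is the cue to apply the correction or adjust the device and then record the as-found and as-left results.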

The purpose and scope of validation

Validation is the evidence-based confirmation that a system, process, or product meets its intended use in real-world conditions. Unlike calibration, validation focuses on performance in application rather than alignment to a reference. Validation activities often occur during commissioning, after significant process changes, or when regulatory or user requirements change. The scope includes assessing functional performance, reliability, and robustness under expected operating conditions, as well as reviewing data integrity and traceability. Validation results support claims about capability, quality, and fitness for purpose. For professionals, validation is a check that the system not only reads correctly after calibration but also delivers trustworthy results in the context where those results matter most.
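Validation evidence is judged against acceptance criteria tied to intended use. The sketch below assumes the whole process is run several times on a sample of known value and judged on two hypothetical criteria: mean recovery (accuracy) and relative standard deviation, RSD (precision). The 98 to 102 % recovery window and the 2 % RSD limit are placeholders; real criteria come from the use case or the applicable regulation.

```python
from statistics import mean, stdev


def validate_method(results, known_value, recovery_limits=(98.0, 102.0), max_rsd_pct=2.0):
    """Judge replicate results on a known sample against hypothetical acceptance criteria.

    Returns (passed, recovery_pct, rsd_pct).
    """
    if len(results) < 2:
        raise ValueError("need at least two replicate results")
    avg = mean(results)
    recovery_pct = 100.0 * avg / known_value      # accuracy: mean as a percentage of the known value
    rsd_pct = 100.0 * stdev(results) / abs(avg)   # precision: relative standard deviation in percent
    low, high = recovery_limits
    passed = low <= recovery_pct <= high and rsd_pct <= max_rsd_pct
    return passed, recovery_pct, rsd_pct


# Hypothetical replicate results for a control sample with a known value of 10.0.
passed, recovery, rsd = validate_method([10.0, 10.1, 9.9], known_value=10.0)
print(passed, round(recovery, 1), round(rsd, 2))
```

Note that a freshly calibrated instrument can still fail this kind of check if sample preparation, operators, or environment add error, which is exactly why validation is assessed on the process rather than the instrument alone.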

Data, evidence, and traceability in calibration vs validation

Both calibration and validation rely on data, but they emphasize different kinds of evidence. Calibration depends on reference artifacts, documented procedures, and instrument-to-standard linkage that creates traceability chains. Validation relies on performance evidence gathered through testing, sampling plans, and real-use scenarios to demonstrate compliance with acceptance criteria. Uncertainty analysis plays a role in both, but the focus shifts: calibration quantifies instrument bias and measurement uncertainty; validation demonstrates that the measurement outcome supports the intended use. Establishing robust traceability means maintaining records that connect the reference standard, the instrument, the test method, and the environmental conditions. For practitioners, this foundation is essential for audits, regulatory reviews, and long-term reliability of measurement data.
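One way to keep the chain from reference standard to instrument, method, and environment auditable is to store it as a structured record. The sketch below is a hypothetical schema (all class and field names are assumptions, not a standard format) with a check for a few common gaps: a missing serial number or method reference, an uncertified standard, or a standard whose certificate had expired by the calibration date.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ReferenceStandard:
    standard_id: str
    certificate_id: str   # certificate linking this standard to a higher-level standard
    valid_until: date


@dataclass(frozen=True)
class CalibrationRecord:
    instrument_serial: str
    standard: ReferenceStandard
    method_id: str
    performed_on: date
    temperature_c: float  # environmental conditions at the time of calibration
    humidity_pct: float


def traceability_gaps(record):
    """Return a list of problems that would break the chain (an empty list means none found)."""
    gaps = []
    if not record.instrument_serial:
        gaps.append("missing instrument serial number")
    if not record.method_id:
        gaps.append("missing test method reference")
    if not record.standard.certificate_id:
        gaps.append("reference standard has no certificate")
    if record.performed_on > record.standard.valid_until:
        gaps.append("reference standard certificate expired before calibration")
    return gaps


# Hypothetical record.
std = ReferenceStandard("REF-01", "CERT-123", date(2030, 1, 1))
rec = CalibrationRecord("SN-42", std, "M-7", date(2025, 6, 1), 20.5, 45.0)
print(traceability_gaps(rec))
```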

Workflow interplay: scheduling and decision points

A mature QA workflow treats calibration and validation as complementary steps in a measurement lifecycle. Scheduling calibration at defined intervals or after events like repairs helps keep instrument readings aligned with standards. Validation is triggered by changes to the process, user requirements, or new product specifications, ensuring continued suitability of the measurement system for its intended purpose. Decision points commonly hinge on drift thresholds, calibration results, and evidence that performance remains within defined criteria. Establishing a documented policy that specifies when to recalibrate, revalidate, or both is vital for consistency across teams. In practice, teams should tie calibration and validation to change control, risk assessment, and continual improvement processes.
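Drift thresholds are often monitored by measuring a check standard between calibrations and comparing the results with control limits. The sketch below assumes limits of mean ± 3 standard deviations computed from baseline readings taken right after calibration. This is a simplification of a Shewhart chart (which, for individual values, typically estimates sigma from moving ranges), and the readings are made up.

```python
from statistics import mean, stdev


def control_limits(baseline, k=3.0):
    """Lower limit, center line, and upper limit from baseline check-standard readings."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline readings")
    center = mean(baseline)
    s = stdev(baseline)
    return center - k * s, center, center + k * s


def drift_flags(baseline, new_readings, k=3.0):
    """Indices of new readings that fall outside the control limits (possible drift)."""
    lower, _, upper = control_limits(baseline, k)
    return [i for i, x in enumerate(new_readings) if x < lower or x > upper]


# Hypothetical check-standard readings.
baseline = [10.0, 10.2, 9.8, 10.1, 9.9]
print(control_limits(baseline))
print(drift_flags(baseline, [10.05, 10.6, 9.4]))
```

Here the limits come out at roughly 9.53 to 10.47, so the second and third new readings are flagged; in a documented policy, a flag like this would feed the recalibration decision rather than trigger an adjustment on its own.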

Methods, standards, and tools

Calibration methods rely on reference artifacts such as weights, calibration solutions, or calibrated references, along with traceable procedures and uncertainty budgets. Validation methods vary by domain but often include performance tests, system suitability demonstrations, and acceptance criteria tied to real-use scenarios. Standards may come from national metrology institutes, industry consortia, or regulatory bodies. Tools include calibration software, metrological workbooks, statistical process control charts, and audit trails. For hobbyists and professionals, selecting appropriate reference standards, documenting methods, and updating procedures are foundational to credible measurement programs. Routine maintenance, environmental controls, and calibration intervals should be aligned with risk assessments and user needs to sustain long-term accuracy.
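Uncertainty budgets are commonly combined by root-sum-of-squares, following the GUM approach for uncorrelated input quantities. The sketch below assumes every component is already expressed as a standard uncertainty in the measurand's units with a sensitivity coefficient of 1, and uses a coverage factor k = 2 (roughly 95 % coverage for an approximately normal result). The budget entries and values are hypothetical.

```python
import math


def rectangular_to_standard(half_width):
    """Standard uncertainty of a rectangular distribution with half-width a: a / sqrt(3)."""
    return half_width / math.sqrt(3)


def combined_standard_uncertainty(components):
    """Root-sum-of-squares of standard uncertainties (uncorrelated, sensitivity coefficients of 1)."""
    return math.sqrt(sum(u ** 2 for u in components.values()))


def expanded_uncertainty(components, k=2.0):
    """Expanded uncertainty U = k * u_c."""
    return k * combined_standard_uncertainty(components)


# Hypothetical budget for a balance reading, all values in grams.
budget = {
    "reference_weight_certificate": 0.0005 / 2,             # certificate states U = 0.0005 g at k = 2
    "display_resolution": rectangular_to_standard(0.0005),  # half of a 0.001 g display step
    "repeatability": 0.0003,                                # standard deviation of repeated readings
}
print(f"u_c = {combined_standard_uncertainty(budget):.5f} g")
print(f"U (k=2) = {expanded_uncertainty(budget):.5f} g")
```

Listing components by name keeps the budget reviewable: an auditor can see which source dominates and which assumption (distribution, coverage factor) was applied to each.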

Industry examples across domains

Across laboratories, manufacturing floors, healthcare facilities, and environmental monitoring, the interplay between calibration and validation shapes reliability. In a lab, calibration ensures a spectrometer reports accurate values traceable to reference standards, while validation confirms that the overall analytical method produces correct results for a given sample type. In manufacturing, calibration aligns sensors with known references, and validation demonstrates that the control system maintains product quality under production conditions. In healthcare, calibration keeps diagnostic devices accurate, and validation confirms that a testing protocol yields reliable results that support safe, effective patient care. These examples show how different sectors require tailored calibration and validation strategies while sharing core principles such as traceability, documentation, and ongoing assessment of performance.

Common pitfalls and misconceptions

A frequent misconception is treating calibration as sufficient proof of system performance. In reality, calibration only confirms that a device reads correctly against a standard, not that the overall process performs as intended under real use. Conversely, validation may be misunderstood as a one-time event; in practice, validation should be an ongoing activity as processes evolve and new requirements emerge. Other pitfalls include inconsistent documentation, failing to link results to instrument identifiers, and neglecting environmental factors that influence measurements. A robust approach requires clear definitions, commitment to traceability, and alignment between calibration results and validation evidence. When teams ignore these connections, they risk data integrity failures, regulatory non-compliance, and lost confidence in decision-making.

Implementation checklist for teams

- Develop a concise policy that defines roles, responsibilities, and acceptance criteria for both calibration and validation.

- Create a traceability map that links reference standards to instruments and test methods.

- Establish calibration intervals based on risk, drift, and criticality, and implement change control for any method updates.

- Maintain a structured repository of records, including certificates, environmental data, and test results.

- Train staff to interpret results, perform root-cause analysis for drift, and execute corrective actions promptly.

- Audit the entire workflow periodically to ensure alignment with standards and evolving use cases.
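Setting intervals based on risk, drift, and criticality can start from a simple feedback rule: lengthen the interval when an instrument is found in tolerance, and shorten it when it is not. The sketch below is loosely in the spirit of the "staircase" approach described in ILAC-G24 / OIML D 10. The 1.25 and 0.5 factors and the 30 to 730 day bounds are hypothetical values a team would set from its own risk assessment.

```python
from datetime import date, timedelta


def next_interval(current_days, in_tolerance, extend=1.25, shorten=0.5, min_days=30, max_days=730):
    """Staircase-style interval update; all factors and bounds are hypothetical defaults."""
    proposed = current_days * (extend if in_tolerance else shorten)
    # Clamp so intervals never shrink below, or grow beyond, the policy limits.
    return int(min(max(proposed, min_days), max_days))


def next_due(last_calibrated, interval_days):
    """Calendar due date for the next calibration."""
    return last_calibrated + timedelta(days=interval_days)


interval = next_interval(180, in_tolerance=True)
print(interval, next_due(date(2025, 1, 1), interval))
```

For instance, a 180-day interval becomes 225 days after an in-tolerance result and 90 days after an out-of-tolerance one. Event-driven triggers such as repairs still apply regardless of the calendar.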

Decision framework: building a plan

A practical framework begins with a clear statement of use, followed by risk assessment and stakeholder input. Define what constitutes acceptable performance for each instrument and method, map calibration to traceable standards, and specify when validation is needed to confirm suitability. Build a decision tree that guides whether to recalibrate, revalidate, or perform both. Documented evidence, traceability, and a feedback loop that supports continuous improvement should frame every step. By designing a framework that integrates calibration and validation, teams can maintain data credibility while adapting to changing needs.
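The recalibrate/revalidate decision tree can be written down as a small function so that every team applies the same rules. This sketch assumes the triggers named in this guide (a due date, detected drift, or a repair for calibration; process or requirement changes for validation). The function, enum, and parameter names are hypothetical, and a real policy would add its own triggers and risk weighting.

```python
from enum import Enum


class Action(Enum):
    NONE = "no action"
    RECALIBRATE = "recalibrate"
    REVALIDATE = "revalidate"
    BOTH = "recalibrate and revalidate"


def decide(*, calibration_due=False, drift_detected=False, repaired=False,
           process_changed=False, requirements_changed=False):
    """Map triggering events to an action (trigger names are hypothetical)."""
    needs_calibration = calibration_due or drift_detected or repaired
    needs_validation = process_changed or requirements_changed
    if needs_calibration and needs_validation:
        return Action.BOTH
    if needs_calibration:
        return Action.RECALIBRATE
    if needs_validation:
        return Action.REVALIDATE
    return Action.NONE


print(decide(repaired=True).value)
print(decide(drift_detected=True, requirements_changed=True).value)
```

Keyword-only arguments make each call self-documenting in logs and change-control records, which helps when auditors ask why a given action was taken.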

Comparison

| Feature | Calibration | Validation |
|---|---|---|
| Purpose | Align instrument readings to a reference standard | Demonstrate fitness for use of a process or system |
| Data/Evidence | Reference artifacts, uncertainty budgets, instrument data | Performance data, real-use tests, acceptance criteria |
| Stage in Process | Pre-use or ongoing maintenance for accuracy | Post-design or post-change verification of suitability |
| Frequency | Scheduled or event-driven recalibration | Triggered by changes in use, requirements, or performance drift |
| Tools/Methods | Weights, standards, reference materials, traceability documentation | Test protocols, performance tests, system suitability checks |
| Acceptance Criteria | Defined tolerance or bias limits tied to standards | Defined performance thresholds tied to intended use |

Pros

- Clarifies how measurements relate to a standard

- Enhances traceability and quality control

- Detects and corrects drift, improving data credibility

- Supports regulatory compliance and audits

Cons

- Requires time, resources, and ongoing maintenance

- Can introduce complexity if not well integrated

- May require specialized reference standards and training

Calibration and validation serve different purposes, yet both are essential for measurement credibility.

Use calibration to ensure instrument accuracy and traceability; use validation to prove performance in intended use. The two together form a robust QA framework guiding reliable decisions. The Calibrate Point team recommends integrating both steps into a disciplined workflow.

Questions & Answers

What is calibration?

Calibration is the process of aligning an instrument’s readings with a traceable reference standard to reduce systematic error. It establishes a known relationship between the instrument output and a reference value, along with an uncertainty estimate.

Calibration aligns readings to a standard and reduces bias.

What is validation?

Validation demonstrates that a process, instrument, or system meets defined requirements for its intended use under actual operating conditions. It provides evidence that results are fit for purpose beyond just reading correctly.

Validation proves performance in real use.

How do calibration and validation differ in practice?

Calibration focuses on accuracy and traceability of measurements by comparing against a standard. Validation focuses on whether the entire measurement process delivers acceptable results in the intended context.

Calibration is about accuracy; validation is about applicability.

When should calibration be performed?

Calibration should be performed at scheduled intervals or after events that could affect accuracy, such as repairs, environmental changes, or suspected drift.

Calibrate on schedule or after events that affect accuracy.

Can calibration and validation be combined?

They can be coordinated within a single quality framework, but each serves a distinct purpose. Combining them is efficient when the plan clearly separates evidence for accuracy from evidence for fitness for use.

They work best when clearly separated but coordinated.

What standards and tools support these activities?

Standards come from metrology organizations and regulatory bodies; tools include reference artifacts, calibration software, and validated test protocols.

Use traceable standards and validated tools.

Key Takeaways

- Define the use case before selecting methods

- Link every calibration to a traceable standard

- Validate performance under real conditions

- Document evidence and maintain audits