Calibrate Pressure Transmitter: A Practical Guide

Learn how to calibrate a pressure transmitter with a step-by-step workflow, essential tools, safety tips, and documentation practices to ensure accurate measurements in industrial settings.

According to Calibrate Point, calibrating a pressure transmitter means applying a traceable reference pressure, recording the transmitter’s output, and adjusting zero and span to align with the reference. Start with safety checks, verify ranges, and document each step. This process improves accuracy, reduces drift, and ensures repeatable readings in routine maintenance.

Overview of pressure transmitters and calibration goals

A pressure transmitter converts physical pressure into an electrical signal, typically a 4-20 mA current loop or a digital protocol such as Modbus. In process control, accurate pressure measurements underpin safe control of valves, pumps, and environmental systems. Calibration is the process of aligning the transmitter’s electrical output with a traceable reference across its operating range. The goals are to minimize zero error, reduce span error, and ensure a linear response from the low to the high pressure points. According to Calibrate Point, good calibration improves measurement integrity, reduces process upsets, and reveals sensor drift or wiring issues early. This makes calibration a recurring maintenance practice, not a one-off task. When planning, define the test points (low, mid, high), choose a reliable reference, and set acceptance criteria. Also verify electrical connections, display readability, and loop power stability as part of your evaluation.
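To make the pressure-to-signal relationship concrete, the short sketch below computes the ideal loop current for a linear 4-20 mA transmitter. The function name `ideal_current_ma` and the 0-10 bar range are illustrative assumptions, not values from any particular device.

```python
def ideal_current_ma(pressure, lrv, urv, i_min=4.0, i_max=20.0):
    """Ideal loop current for a linear transmitter ranged from lrv to urv.

    lrv / urv are the lower and upper range values in the same unit as
    `pressure`. The 4-20 mA limits are defaults and can be overridden.
    """
    if urv == lrv:
        raise ValueError("upper and lower range values must differ")
    fraction = (pressure - lrv) / (urv - lrv)  # 0.0 at lrv, 1.0 at urv
    return i_min + fraction * (i_max - i_min)


# Hypothetical transmitter ranged 0-10 bar
for p in (0.0, 5.0, 10.0):
    print(f"{p:5.1f} bar -> {ideal_current_ma(p, 0.0, 10.0):.2f} mA")
# prints 4.00 mA, 12.00 mA and 20.00 mA for the three points
```

Comparing the measured loop current against this ideal value at each test point is the basis for the error figures used later in the guide.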

Safety and prerequisites

Calibration work involves pressurized systems, electrical circuits, and potential leak hazards. Always follow site safety procedures and lockout-tagout (LOTO) protocols, and use appropriate PPE (chemical splash protection, eye/face protection, and gloves as needed). Confirm the calibration equipment is rated for the pressure range and for the gas or liquid involved. Ensure good ventilation, keep the work away from ignition sources if flammable fluids are involved, and establish an emergency stop plan. Before you begin, confirm that the test area is clear of unnecessary personnel and that all tools are grounded. These precautions protect personnel and preserve instrument integrity during calibration.

Reference standards and traceability

A trustworthy calibration relies on traceable reference standards with documented uncertainty. Use a calibrated pressure source whose accuracy is certified and traceable to a national standard. Maintain a calibration certificate for each standard and record its calibration interval. Document ambient conditions during the procedure, since temperature can influence readings. If your quality system requires, link the results to a calibration lot or batch identifier. Calibrate Point recommends maintaining an auditable trail from the reference to the transmitter output, so audits and quality reviews are straightforward.

Choosing a calibration method

Calibration methods range from simple internal zero/span adjustments to external dead-weight testers and calibrated pressure controllers. For analog 4-20 mA transmitters, you can apply a known pressure, observe the output, and adjust zero and span to align with the reference. For digital or smart transmitters, you may adjust via onboard menus or a hand-held communicator to select appropriate span, zero, and damping settings. In high-precision environments, dead-weight testers offer the lowest uncertainty, while field calibrations favor in-situ methods to minimize process disturbance. The chosen method should balance accuracy needs, equipment availability, and the risk of process disruption. Calibrate Point emphasizes planning for multiple reference points (low, mid, high) to validate linearity.

Understanding zero and span adjustments

Zero adjustment sets the transmitter’s output when the input pressure is at the lower end of the range (0% of span, which is 4 mA on a 4-20 mA loop). Span adjustment sets the output slope so that the upper end corresponds to full-scale pressure (20 mA). A zero error shifts the entire calibration curve by a constant offset, while a span error changes the slope, so its effect grows toward full scale. Modern transmitters may expose these adjustments via front-panel knobs, software menus, or a HART field communicator. The goal is to achieve a straight-line response that matches the reference across the working range. Record the final zero and span values after calibration for future reference.
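As a rough illustration of how zero and span errors are separated, the sketch below derives both from the outputs measured at the lower and upper range points. It assumes a 4-20 mA output and expresses errors as a percentage of the 16 mA output span; the function name and the sample readings are hypothetical.

```python
def zero_span_error(out_at_lrv_ma, out_at_urv_ma, i_min=4.0, i_max=20.0):
    """Return (zero_error, span_error), both in percent of the ideal output span."""
    ideal_span = i_max - i_min
    # Zero error: offset of the output at the lower range point.
    zero_error = (out_at_lrv_ma - i_min) / ideal_span * 100.0
    # Span error: how much the measured output span differs from the ideal one.
    measured_span = out_at_urv_ma - out_at_lrv_ma
    span_error = (measured_span - ideal_span) / ideal_span * 100.0
    return zero_error, span_error


# Hypothetical readings: 4.08 mA at 0% and exactly 20.00 mA at 100%
zero_err, span_err = zero_span_error(4.08, 20.00)
print(f"zero error: {zero_err:+.2f} % of span")
print(f"span error: {span_err:+.2f} % of span")
```

In this example the top reading looks perfect, yet the output span is short because the zero is high. Correcting zero first and then re-checking span exposes the remaining slope error.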

Pre-calibration checks and setup

Before applying pressure, inspect the transmitter, fittings, and hoses for cleanliness and integrity. Tighten fittings to avoid leaks, verify that there are no existing process conditions that could affect readings, and confirm power stability. Check that the reference pressure source is properly calibrated and warmed up if needed. Calibrate Point notes that warm-up time helps stabilize the reference and reduces drift during measurements. Document the model, serial number, and range of the device under test to ensure correct identification in records.

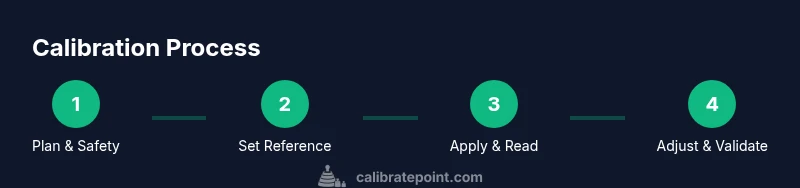

High-level calibration workflow

In many facilities, the calibration workflow includes planning, setup, data collection, adjustment, re-check, and documentation. Start by comparing the transmitter output to a known reference at multiple pressure points (low, mid, high). Use a structured approach: stabilize, measure, record, adjust, and re-measure. For process safety, ensure that any measurement activities do not interrupt essential service. The end result should be a validated curve that aligns with the reference within the preset tolerance, with all data logged for traceability.
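The measure-and-compare part of this workflow can be sketched as a simple multi-point check. The example assumes a linear 4-20 mA transmitter and a tolerance stated in percent of span; `check_points`, the 0-10 bar range, the readings, and the 0.25% tolerance are all illustrative assumptions.

```python
def check_points(points, lrv, urv, tolerance_pct):
    """Compare measured loop currents with the ideal 4-20 mA values.

    points: list of (reference_pressure, measured_ma) tuples.
    Returns (rows, passed) where each row is
    (reference_pressure, ideal_ma, measured_ma, error_pct, within_tolerance).
    """
    rows = []
    for ref_p, measured in points:
        ideal = 4.0 + (ref_p - lrv) / (urv - lrv) * 16.0
        error_pct = (measured - ideal) / 16.0 * 100.0  # percent of output span
        rows.append((ref_p, ideal, measured, error_pct,
                     abs(error_pct) <= tolerance_pct))
    return rows, all(row[4] for row in rows)


# Hypothetical as-found data for a 0-10 bar transmitter, tolerance 0.25 % of span
as_found = [(0.0, 4.02), (5.0, 12.03), (10.0, 20.05)]
rows, passed = check_points(as_found, lrv=0.0, urv=10.0, tolerance_pct=0.25)
for ref_p, ideal, measured, err, ok in rows:
    print(f"{ref_p:5.1f} bar  ideal {ideal:6.2f} mA  measured {measured:6.2f} mA  "
          f"error {err:+.3f} %  {'PASS' if ok else 'FAIL'}")
print("overall:", "PASS" if passed else "FAIL")
```

Running the same check before and after adjustment gives the as-found and as-left results that belong in the calibration record.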

Common pitfalls and troubleshooting

Pitfalls include applying pressure beyond the device’s rating, neglecting to re-check zero after span adjustments, and failing to capture readings at multiple points. Temperature fluctuations can also skew results, especially with temperature-sensitive sensors. Leaks in the test loop or improper adapter sizing can cause erroneous readings. When issues arise, re-check connection integrity, verify the reference standard’s calibration, and confirm that the transmitter is within its specified range. Calibrate Point advises documenting any deviations and corrective actions taken.

Documentation and validation

A complete calibration package includes the initial readings, applied pressures, zero and span adjustments, and final test results. Record the ambient temperature, electrical supply, equipment IDs, and the reference standard’s serial number. Attach calibration certificates and any relevant SOPs. Validation should show that the transmitter’s output matches the reference within the defined tolerance across the tested points. Store digital copies in your EAM/CMMS system and maintain a hard copy in the instrument file for audits.
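One possible way to capture these fields in a machine-readable form is sketched below. The class names, field names, and sample values are assumptions made for illustration; they do not follow any specific EAM/CMMS schema and would need to be mapped to your own system.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class PointReading:
    applied_pressure: float   # reference pressure at this test point
    as_found_ma: float        # transmitter output before adjustment
    as_left_ma: float         # transmitter output after adjustment


@dataclass
class CalibrationRecord:
    transmitter_model: str
    transmitter_serial: str
    range_low: float
    range_high: float
    pressure_unit: str
    reference_serial: str
    ambient_temp_c: float
    supply_voltage_v: float
    tolerance_pct_span: float
    points: list = field(default_factory=list)

    def to_json(self):
        """Serialize the record, including nested readings, to JSON text."""
        return json.dumps(asdict(self), indent=2)


# Hypothetical record; every value here is a placeholder
record = CalibrationRecord("PT-EXAMPLE", "SN-0001", 0.0, 10.0, "bar",
                           "REF-0001", 21.5, 24.0, 0.25)
record.points.append(PointReading(0.0, 4.02, 4.00))
record.points.append(PointReading(10.0, 20.05, 20.00))
print(record.to_json())
```

Keeping as-found and as-left values side by side in one record makes later drift analysis and audits easier than storing only the final results.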

Calibration cycle and performance monitoring

Set a calibration interval based on device stability, process criticality, and regulatory requirements. After calibration, monitor the transmitter’s performance over time to detect drift, hysteresis, or sudden changes due to environmental conditions or mechanical wear. Regular re-verification keeps the calibration robust and reduces unexpected process excursions. Calibrate Point recommends evaluating performance trends and adjusting the maintenance schedule to balance reliability and downtime.
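A simple way to evaluate a performance trend is to fit a straight line through the as-found errors from successive calibrations. The sketch below does this with an ordinary least-squares slope. It assumes drift is roughly linear over time, which is a simplification, and the function name and history values are hypothetical.

```python
def drift_per_year(history):
    """Least-squares drift rate in percent of span per year.

    history: list of (days_since_first_calibration, as_found_error_pct).
    """
    n = len(history)
    if n < 2:
        raise ValueError("need at least two calibration results")
    mean_x = sum(days for days, _ in history) / n
    mean_y = sum(err for _, err in history) / n
    sxx = sum((days - mean_x) ** 2 for days, _ in history)
    if sxx == 0:
        raise ValueError("calibration dates must differ")
    sxy = sum((days - mean_x) * (err - mean_y) for days, err in history)
    return sxy / sxx * 365.0  # slope per day scaled to one year


# Hypothetical as-found zero errors from three yearly calibrations
history = [(0, 0.00), (365, 0.10), (730, 0.20)]
print(f"estimated drift: {drift_per_year(history):+.3f} % of span per year")
```

If the estimated drift would consume the tolerance band well before the next scheduled calibration, that is an argument for shortening the interval; a consistently small drift may justify extending it, subject to your quality program.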

Tools & Materials

- Pressure transmitter (Device to calibrate; ensure it is powered off before disconnection.)

- Traceable reference pressure source (Certified standard with known uncertainty; suitable for required pressure points.)

- Pressure regulator set (Accurate, clean regulation; use compatible fittings.)

- Calibrated pressure gauges or digital readouts (For cross-checks against the reference source.)

- Test hoses and adapters (Leak-free connections; compatible with port sizes and materials.)

- Multimeter and test leads (Electrical measurement and loop monitoring.)

- Lockout/Tagout kit (Ensure safe isolation of the test area.)

- Safety PPE (Eye protection, gloves, and any specialized gear per site policy.)

- Calibration certificates and SOPs (Documentation for traceability and compliant records.)

- Datalogging device or software (Optional for automatic data capture and trend analysis.)

- Ambient temperature gauge (Useful for documenting conditions that impact readings.)

Steps

Estimated time: 90-150 minutes

- 1

Prepare safety and scope

Confirm the calibration objective, device range, and reference standards. Verify LOTO is in effect, PPE is worn, and the test area is safe for pressure work. Have emergency procedures ready and ensure all personnel are clear.

Tip: Review the device manual to confirm acceptable calibration limits before you begin.

- 2

Identify instrument and references

Record transmitter model, serial number, rated range, and current calibration status. Inspect the reference standard for calibration validity and recent certification. Note the environmental conditions that could influence measurements.

Tip: Double-check that the reference standard is within its own calibration period.

- 3

Assemble calibration setup

Connect the reference source to the transmitter input using clean, compatible adapters. Make all connections finger-tight, then snug them to avoid leaks. Confirm that the loop power is isolated during setup.

Tip: Leak-check each connection with an approved method before applying pressure.

- 4

Connect measurement devices

Attach pressure gauges or digital readouts to the reference path and to the transmitter output. Verify that the wiring is correct and the displays read correctly before proceeding.

Tip: Calibrate the measurement devices if needed and record their readings as baselines.

- 5

Apply zero pressure

Set the reference to the lowest pressure point (often zero). Allow the system to stabilize, then record the transmitter output and reference reading at zero.

Tip: Use slow pressure changes to avoid overshoot on the initial reading.

- 6

Record zero output

Document the transmitter’s zero output precisely. Repeat if necessary to confirm stability and repeatability.

Tip: Take multiple readings and compute the average to reduce random error.

- 7

Apply span pressure points

Step through low, mid, and high pressure points. At each point, allow stabilization, then record both reference and transmitter outputs for comparison.

Tip: Use the same ramp rate for all points to maintain consistency.

- 8

Adjust zero and span

If the transmitter output deviates from reference, adjust zero first, then span. Recheck each point after adjustments to confirm alignment across the range.

Tip: Make small adjustments and remeasure to avoid over-correcting.

- 9

Re-check and finalize

Perform a full-range verification after adjustments. Confirm the results meet the tolerance band and document all data in the calibration record.

Tip: Seal the record with signatures from responsible personnel and attach the reference certificate.
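The advice in step 6 to take multiple readings and average them can be sketched as follows. The helper name `summarize_readings`, the sample values, and the 0.01 mA stability threshold are assumptions chosen for illustration; use the criteria from your own procedure.

```python
from statistics import mean, stdev


def summarize_readings(readings_ma, max_spread_ma=0.01):
    """Return (average, sample standard deviation, stable) for repeated readings.

    `stable` is True when the spread between the highest and lowest reading
    does not exceed max_spread_ma (an illustrative threshold).
    """
    if len(readings_ma) < 2:
        raise ValueError("take at least two readings")
    spread = max(readings_ma) - min(readings_ma)
    return mean(readings_ma), stdev(readings_ma), spread <= max_spread_ma


# Hypothetical repeated readings at the zero point
zero_readings = [4.012, 4.010, 4.011, 4.013, 4.009]
avg, sd, stable = summarize_readings(zero_readings)
print(f"average {avg:.4f} mA, std dev {sd:.4f} mA, stable: {stable}")
```

Recording the average together with the spread shows whether a reading was taken on a settled signal or while the pressure was still moving.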

Questions & Answers

What is the purpose of calibrating a pressure transmitter?

Calibration aligns the transmitter output with a traceable reference, reducing error and drift. It verifies accuracy across the operating range and documents performance for audits. Regular calibration helps maintain process reliability.

Can I calibrate a pressure transmitter in the field (in-situ)?

Yes, many pressure transmitters can be calibrated on-site with portable references. Ensure proper isolation and leak checks, and minimize process disruption by selecting a safe calibration window.

What references are acceptable for calibration?

Use traceable, calibrated pressure sources with documented uncertainty. The reference should be appropriate for the transmitter's range and the tolerance required by your process.

How often should calibration be performed?

Frequency depends on process criticality, environmental conditions, and regulatory requirements. High-risk applications may require more frequent checks; always align with your quality program.

What if readings are out of tolerance after calibration?

If readings exceed tolerance, re-check connections, confirm reference calibration, and re-perform zero/span adjustments. If unresolved, replace or service the transmitter.

Is warm-up time important for reference standards?

Yes. Allow the reference standard to warm up as specified by the manufacturer to stabilize readings before calibration.

Key Takeaways

- Plan calibration with traceable standards

- Record and verify at multiple pressure points

- Zero and span adjustments are critical for accuracy

- Document results for audit readiness

- Schedule regular calibration cycles