Calibrate and Scale: A Practical Side-by-Side Guide

An in-depth, data-driven comparison of calibrate and scale methods, outlining automated vs manual approaches, setup steps, and decision criteria for labs. Practical guidance to improve accuracy and traceability.

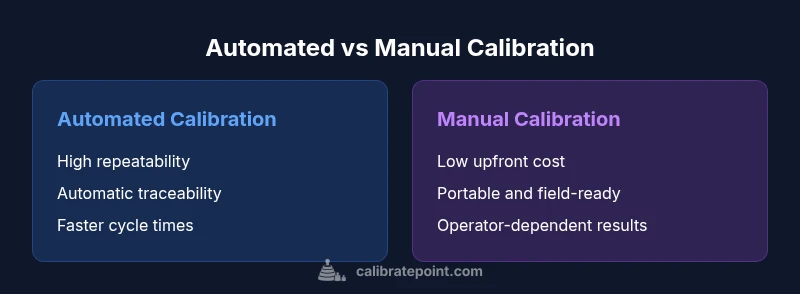

For most laboratories and workshops, calibrate and scale work should begin with an automated calibration workflow. Automated digital calibration generally offers better repeatability, stronger traceability, and faster cycle times, while manual calibration remains viable for field work or very small teams. The choice hinges on calibration volume, required audit trails, and budget. To maximize reliability, define reference standards, calibration intervals, and documentation practices from day one.

The fundamental idea behind calibrate and scale

Calibration and scaling are foundational concepts in any measurement workflow. Calibration aligns a device's output with a known reference, while scaling ensures that measurements translate accurately across ranges and contexts. When you treat calibrate and scale as a paired discipline, you create a repeatable path from raw signals to trusted data. This section outlines how practitioners typically frame the problem, what counts as a reliable calibration event, and how to document decisions in a way that supports audits and quality systems. The approach is practical rather than theoretical and emphasizes concrete steps you can apply from day one. Across industries, applying calibrate and scale consistently reduces drift, improves confidence, and lowers the risk of measurement gaps in production, testing, or inspection tasks.
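As a minimal sketch of the two ideas above, the following Python assumes a device with a linear response: calibration is modeled as a two-point gain and offset correction against known references, and scaling as a linear mapping between ranges. The names `LinearCalibration`, `two_point_calibration`, and `scale` are illustrative, and real instruments may need multi-point or non-linear corrections.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinearCalibration:
    """Gain/offset correction derived from two reference points (assumed linear device)."""
    gain: float
    offset: float

    def apply(self, raw: float) -> float:
        """Convert a raw reading into a corrected value."""
        return self.gain * raw + self.offset


def two_point_calibration(raw_low: float, ref_low: float,
                          raw_high: float, ref_high: float) -> LinearCalibration:
    """Fit gain and offset so both raw readings map onto their reference values."""
    if raw_high == raw_low:
        raise ValueError("reference points must produce distinct raw readings")
    gain = (ref_high - ref_low) / (raw_high - raw_low)
    offset = ref_low - gain * raw_low
    return LinearCalibration(gain=gain, offset=offset)


def scale(value: float, in_min: float, in_max: float,
          out_min: float, out_max: float) -> float:
    """Map a value linearly from [in_min, in_max] onto [out_min, out_max]."""
    if in_max == in_min:
        raise ValueError("input range must have non-zero width")
    return out_min + (value - in_min) * (out_max - out_min) / (in_max - in_min)


# Hypothetical readings: the device shows 1.0 at a 0.0 reference
# and 201.0 at a 100.0 reference.
cal = two_point_calibration(raw_low=1.0, ref_low=0.0, raw_high=201.0, ref_high=100.0)
corrected = cal.apply(101.0)                      # calibrated value
signal = scale(corrected, 0.0, 100.0, 4.0, 20.0)  # e.g. a 4-20 output range
```

Keeping the correction and the range mapping as separate steps means each can be re-derived and documented independently when a reference or a range changes.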

According to Calibrate Point, calibrate and scale are not one-off tasks; they are an integrated discipline that spans measurement fundamentals, process control, and quality assurance. Starting with a clear definition of what needs to be calibrated and scaled helps teams avoid scope creep and aligns responsibilities. For most labs, the first anchor is a stable reference standard and a documented schedule that specifies how often calibration should occur. From there, you can build a lifecycle plan that covers both routine calibrations and longer-term scale adjustments, ensuring every step is traceable and auditable. In this context, calibrate and scale become guardrails that protect data integrity, instrument reliability, and decision quality across audits and daily work.

Adopting calibrate and scale means embracing a mindset that values transparency. Teams should ask: What is the reference material, what is the tolerance, and what are the acceptable sources of uncertainty? By framing the problem this way, practitioners can design calibration intervals that balance instrument availability with confidence in the results. A practical plan also includes change control, versioning of calibration data, and easy retrieval of historical records. In short, calibrate and scale is a discipline in which consistency, documentation, and continuous verification are the levers that yield dependable measurements over time.
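One way to make the reference, tolerance, and versioning questions concrete is a small record type. The sketch below is an assumption about how such a record might look, not a prescribed schema; the field names, the `CalibrationRecord` class, and the `latest_record` helper are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Optional


@dataclass(frozen=True)
class CalibrationRecord:
    """One immutable calibration event; corrections are new versions, not edits."""
    instrument_id: str
    reference_id: str        # identifier of the reference standard used
    performed_on: date
    reference_value: float
    measured_value: float
    tolerance: float         # maximum acceptable absolute error
    version: int = 1

    @property
    def error(self) -> float:
        """Signed deviation of the instrument from the reference."""
        return self.measured_value - self.reference_value

    @property
    def in_tolerance(self) -> bool:
        return abs(self.error) <= self.tolerance


def latest_record(history: Iterable[CalibrationRecord],
                  instrument_id: str) -> Optional[CalibrationRecord]:
    """Return the highest-version record for an instrument, or None if absent."""
    matches = [r for r in history if r.instrument_id == instrument_id]
    return max(matches, key=lambda r: r.version) if matches else None


# Hypothetical history for one balance checked against a 100 g reference mass.
history = [
    CalibrationRecord("BAL-01", "REF-100G", date(2024, 1, 10), 100.0, 100.03, 0.05, version=1),
    CalibrationRecord("BAL-01", "REF-100G", date(2024, 7, 10), 100.0, 100.08, 0.05, version=2),
]
current = latest_record(history, "BAL-01")
```

Because records are frozen and versioned, older results stay retrievable for audits while the latest one drives day-to-day decisions.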

Comparison

| Feature | Automated calibration | Manual calibration |
|---|---|---|
| Setup complexity | Low with guided workflows | Moderate to high due to reference standards |
| Repeatability | High with automated controls | Moderate, operator dependent |
| Traceability of standards | Automatic logging and audit trails | Manual notes and certificates |
| Initial cost | Higher upfront for equipment and software | Lower upfront for basic tools and references |
| Ongoing maintenance | Software/firmware updates and calibration cycles | Tool calibration and manual records |
| Best for | High-volume, consistent tasks with strict QA | Small teams, field work, or tight budgets |

Advantages of automated calibration

- Improved accuracy and repeatability across tasks

- Enhanced traceability and audit readiness

- Faster calibration cycles in high-volume environments

- Easier compliance with quality and regulatory standards

- Reduced operator variability and bias

Disadvantages of automated calibration

- Higher upfront cost and ongoing maintenance

- Requires training and change management

- Dependency on software uptime and data integrity

- Potential integration challenges with legacy systems

Automated calibration generally offers superior accuracy and traceability, with manual methods remaining viable for fieldwork or tight budgets.

Adopt automated calibration where feasible to maximize reliability and auditability. Reserve manual approaches for scenarios where portability or cost is the decisive factor.

Questions & Answers

What is the difference between calibrate and scale in practice?

Calibration aligns a device's output with a known standard, while scaling ensures that measurements map correctly across a range. Together they keep results accurate and consistent from one measurement to the next. In most workflows, you calibrate to establish a reference and scale to maintain proportional accuracy across ranges.

Calibrate aligns to a standard; scale keeps measurements accurate across ranges. Together they ensure reliable data.

Why is automation often preferred for calibration workflows?

Automation reduces operator variability, increases repeatability, and creates consistent audit trails. It accelerates routine calibrations and helps maintain strict control over uncertainty budgets.

Automation minimizes human error and speeds up regular calibrations.
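To illustrate what an uncertainty budget involves, here is a minimal sketch that combines independent standard uncertainties by root-sum-of-squares and applies a coverage factor. It assumes the components are uncorrelated and already expressed as standard uncertainties in the same unit; the component names and values are made up for illustration.

```python
import math
from typing import Mapping


def combined_standard_uncertainty(components: Mapping[str, float]) -> float:
    """Root-sum-of-squares of uncorrelated standard uncertainty components."""
    return math.sqrt(sum(u ** 2 for u in components.values()))


def expanded_uncertainty(components: Mapping[str, float], k: float = 2.0) -> float:
    """Combined uncertainty multiplied by a coverage factor k (k=2 is a common choice)."""
    return k * combined_standard_uncertainty(components)


# Hypothetical budget, all values in the same unit as the measurement.
budget = {
    "reference_standard": 0.03,
    "repeatability": 0.04,
}
u_c = combined_standard_uncertainty(budget)
U = expanded_uncertainty(budget)
```

An automated workflow can recompute such a budget on every run and log it with the result, which is harder to keep consistent by hand.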

How often should calibration be performed?

Calibration frequency depends on usage, stability, and required precision. High-demand environments often benefit from tighter intervals, while infrequent use may allow longer intervals with periodic checks.

Frequency depends on how hard the instrument is used and how stable it remains.
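The idea of tightening or relaxing intervals can be sketched as a simple rule of thumb. The function below is a hypothetical policy, not a standard method: it halves the interval after an out-of-tolerance result, extends it by 25% after a streak of in-tolerance results, and clamps the outcome to assumed minimum and maximum limits.

```python
from typing import Sequence


def next_interval_days(current_days: int,
                       recent_results: Sequence[bool],
                       min_days: int = 30,
                       max_days: int = 730,
                       streak_to_extend: int = 3) -> int:
    """Suggest the next calibration interval.

    recent_results lists past outcomes oldest first; True means the
    instrument was found in tolerance at that calibration.
    """
    if not recent_results:
        return current_days  # no history yet, keep the current interval
    if not recent_results[-1]:
        proposed = current_days // 2            # latest check failed: shorten
    elif (len(recent_results) >= streak_to_extend
          and all(recent_results[-streak_to_extend:])):
        proposed = int(current_days * 1.25)     # stable streak: extend
    else:
        proposed = current_days                 # otherwise leave unchanged
    return max(min_days, min(max_days, proposed))


shortened = next_interval_days(180, [True, True, False])
extended = next_interval_days(180, [True, True, True])
```

The thresholds and factors are placeholders; any real policy should be justified against the instrument's drift history and the precision the work requires.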

What is traceability in calibration terms?

Traceability means every calibration result can be linked to recognized reference standards and documented sources. It supports audits and demonstrates that measurements are credible and defensible.

Traceability links results to recognized standards with full documentation.
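A traceability chain can be pictured as a series of links, each standard calibrated against a higher one. The sketch below walks such a chain and fails if a link is missing or lacks a certificate. The `Standard` type, its fields, and the example identifiers are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Standard:
    standard_id: str
    certificate: Optional[str]          # certificate number, None if undocumented
    calibrated_against: Optional[str]   # parent standard, None for the top of the chain


def traceability_chain(standards: Dict[str, Standard], start_id: str) -> List[str]:
    """Return the ids from start_id up to the top-level reference.

    Raises KeyError for an unknown standard and ValueError for a missing
    certificate or a circular reference.
    """
    chain: List[str] = []
    seen = set()
    current: Optional[str] = start_id
    while current is not None:
        if current in seen:
            raise ValueError(f"circular reference at {current}")
        if current not in standards:
            raise KeyError(f"unknown standard {current}")
        seen.add(current)
        std = standards[current]
        if std.certificate is None:
            raise ValueError(f"{current} has no certificate on file")
        chain.append(current)
        current = std.calibrated_against
    return chain


# Hypothetical three-level chain: working mass -> lab reference -> national standard.
registry = {
    "WORK-100G": Standard("WORK-100G", "CERT-0031", "LAB-REF-100G"),
    "LAB-REF-100G": Standard("LAB-REF-100G", "CERT-0007", "NMI-KG"),
    "NMI-KG": Standard("NMI-KG", "CERT-0001", None),
}
chain = traceability_chain(registry, "WORK-100G")
```

Running a check like this before releasing results is one way to confirm that every measurement can be linked back through documented references.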

Can calibration be done in the field?

Yes, field calibration is common for portable instruments. It often relies on robust reference materials and portable standards, with procedures adapted for limited facilities and environmental variation.

Field calibration is possible with portable standards and adapted procedures.

What standards govern calibration practice in industry?

Industry practice is guided by general quality and metrology standards such as ISO/IEC 17025 and related process controls. They emphasize validation, uncertainty accounting, and traceability to national or international references.

Standards like ISO/IEC 17025 guide calibration validity and traceability.

Key Takeaways

- Automated calibration improves consistency and traceability

- Define clear reference standards and calibration intervals

- Balance upfront costs with long-term accuracy goals

- Prioritize documentation and version control

- Choose manual methods for portability or budget constraints